Suppose you have several virtual machines in your virtual environment and you would like to distribute load across them. How do you set up Network Load Balancing so that it still works with features like DRS and VMotion?

Switch Refresher

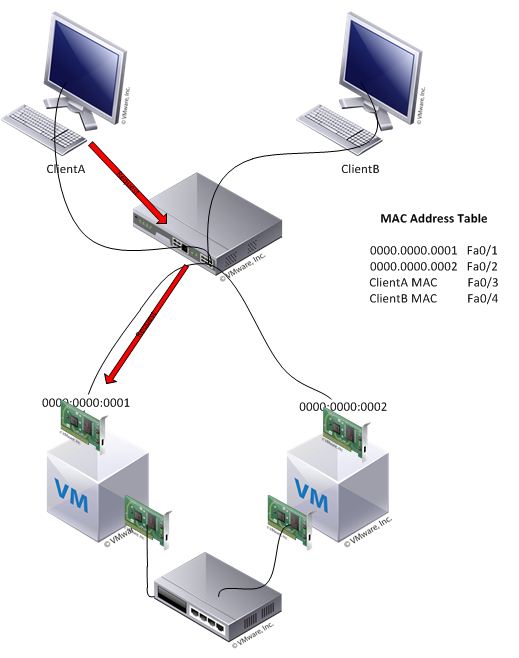

In most networks we have switches that listen for MAC addresses and store them in a MAC address table for future use. If a switch receives a frame and knows which port the destination MAC address is associated with, it forwards that frame out of that single port. If the switch doesn’t know which port a MAC address is associated with, it sends the frame out of all of its other ports (known as flooding) so that the destination can still receive it. This is why we’ve moved away from hubs and towards switches: hubs flood everything because they don’t keep track of MAC addresses, and that extra traffic on the network is unwanted.

So in this example, if ClientA sends a request to the MAC address 0000.0000.0001, the frame gets to the switch, goes out port Fa0/1, and that’s all.
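The learn, forward, or flood behavior described above can be sketched as a toy model. This is only an illustration, not how any particular switch is implemented; the `Switch` class, the extra port names, and ClientA's MAC address are assumptions made up for the example.

```python
class Switch:
    """Toy layer-2 switch: learns source MACs, then forwards or floods."""

    def __init__(self, ports):
        self.ports = list(ports)
        self.mac_table = {}  # MAC address -> port it was last seen on

    def receive(self, in_port, src_mac, dst_mac):
        """Process one frame and return the list of ports it is sent out of."""
        # Learn (or refresh) which port the source MAC lives behind.
        self.mac_table[src_mac] = in_port
        out_port = self.mac_table.get(dst_mac)
        if out_port is not None:
            # Known destination: use that single port (nothing to do if the
            # destination sits on the port the frame arrived on).
            return [out_port] if out_port != in_port else []
        # Unknown destination: flood out of every port except the ingress port.
        return [p for p in self.ports if p != in_port]


# Port names other than Fa0/1 and ClientA's MAC are hypothetical.
switch = Switch(["Fa0/1", "Fa0/2", "Fa0/3", "Fa0/4"])

# The server at 0000.0000.0001 sends a broadcast, so the switch learns it on Fa0/1.
switch.receive("Fa0/1", "0000.0000.0001", "ffff.ffff.ffff")

# ClientA (on Fa0/3) sends to the learned address: one egress port only.
print(switch.receive("Fa0/3", "0000.0000.000a", "0000.0000.0001"))  # ['Fa0/1']

# A destination the switch has never seen is flooded.
print(switch.receive("Fa0/3", "0000.0000.000a", "0000.0000.0099"))  # ['Fa0/1', 'Fa0/2', 'Fa0/4']
```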

Unicast Mode

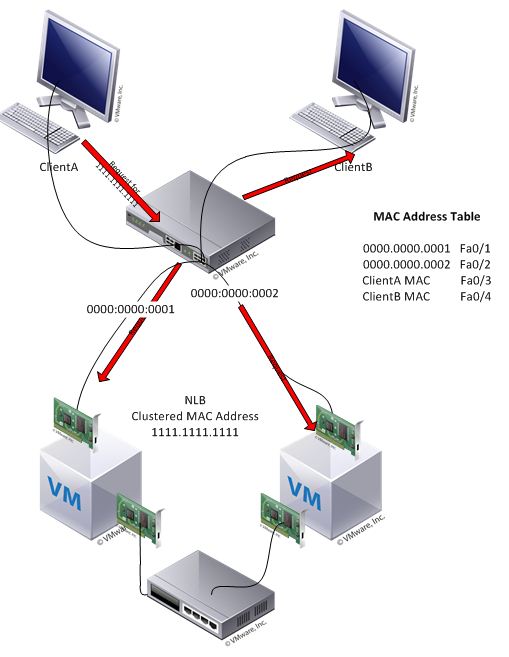

Let’s assume that the two VMs are now in a Microsoft NLB cluster using Unicast mode. The two VMs now share a cluster MAC address, so either of them can handle requests sent to that address. By default, Microsoft uses a feature called MaskSourceMAC so that each VM sends a unique source MAC address to the switch, because many switches require a unique source address on each port. One consequence is that the switch never learns the cluster MAC address. Let’s look at another example, where ClientA is attempting to connect to the NLB cluster, which has a MAC address of 1111.1111.1111.

As you can see, ClientA sends the request to the cluster MAC address, but that MAC address is not in the switch’s MAC address table. The switch acts as it should and floods the frame, so the NLB cluster receives the request, but so does ClientB. ClientB drops the frame because it’s not the intended destination, but this is unnecessary network traffic. You can see how this could be an issue on larger networks.
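To make the effect of MaskSourceMAC concrete, here is a minimal sketch of why the cluster address never lands in the MAC address table. The `deliver` helper, the port layout, the masked node MACs, and ClientA's MAC are all hypothetical values chosen for illustration; only the cluster MAC 1111.1111.1111 comes from the example above.

```python
CLUSTER_MAC = "1111.1111.1111"


def deliver(mac_table, all_ports, in_port, src_mac, dst_mac):
    """Learn src_mac on in_port, then return the ports the frame is sent out of."""
    mac_table[src_mac] = in_port
    if dst_mac in mac_table:
        return [mac_table[dst_mac]]
    return [p for p in all_ports if p != in_port]


ports = ["Fa0/1", "Fa0/2", "Fa0/3", "Fa0/4"]  # hypothetical port layout
table = {}

# Each NLB node transmits with its own masked source MAC (hypothetical values),
# never with the shared cluster MAC, so the switch only ever learns these.
deliver(table, ports, "Fa0/1", "2222.0000.0001", "ffff.ffff.ffff")
deliver(table, ports, "Fa0/2", "2222.0000.0002", "ffff.ffff.ffff")

# ClientA on Fa0/3 sends to the cluster MAC, which is still unknown.
out = deliver(table, ports, "Fa0/3", "0000.0000.000a", CLUSTER_MAC)

print(CLUSTER_MAC in table)  # False
print(out)                   # ['Fa0/1', 'Fa0/2', 'Fa0/4']
```

Both cluster nodes (Fa0/1 and Fa0/2 here) receive the frame, but so does whatever sits on Fa0/4, which plays the role of ClientB.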

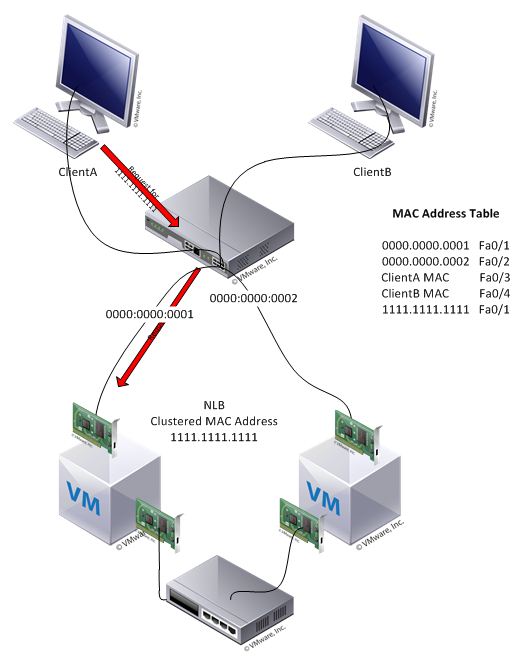

There is a bigger issue, though, when we’re looking at VMware. According to the VMware Knowledge Base article http://kb.vmware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=1556, ESX hosts send RARP packets during certain operations such as VMotion. So if one of our VMs were VMotioned to a different ESXi host, that host would notify the physical switch and give it the MAC address of the NLB cluster. Let’s look at what happens once the switch enters the cluster MAC address into its table.

Now ClientA sends the same request to 1111.1111.1111 and gets a response from only one of the VMs. This might make the network traffic more efficient, but it also means that the second VM in the NLB cluster will never receive any more requests, so NLB is no longer doing what we want. VMware’s solution is to change the vSwitch or port group properties so that “Notify Switches” is set to No.
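The pinning effect can be shown with a small sketch. It models the RARP notification simply as the switch learning the cluster MAC on one port; the `egress_ports` helper and the port names are assumptions for illustration, not VMware or switch behavior in detail.

```python
CLUSTER_MAC = "1111.1111.1111"


def egress_ports(mac_table, all_ports, in_port, dst_mac):
    """Ports a frame leaves on: the learned port if known, otherwise a flood."""
    if dst_mac in mac_table:
        return [mac_table[dst_mac]]
    return [p for p in all_ports if p != in_port]


ports = ["Fa0/1", "Fa0/2", "Fa0/3", "Fa0/4"]  # hypothetical port layout
node_ports = {"Fa0/1", "Fa0/2"}               # ports leading to the two NLB VMs
table = {}

# Before any notification: the cluster MAC is unknown, so the frame is flooded.
before = egress_ports(table, ports, "Fa0/3", CLUSTER_MAC)

# After a VMotion with Notify Switches enabled, the host's RARP frame makes the
# switch learn the cluster MAC on a single port (Fa0/2 in this sketch).
table[CLUSTER_MAC] = "Fa0/2"
after = egress_ports(table, ports, "Fa0/3", CLUSTER_MAC)

print(node_ports <= set(before))  # True: every NLB node sees the request
print(node_ports <= set(after))   # False: only the node behind Fa0/2 does
```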

Multicast Mode

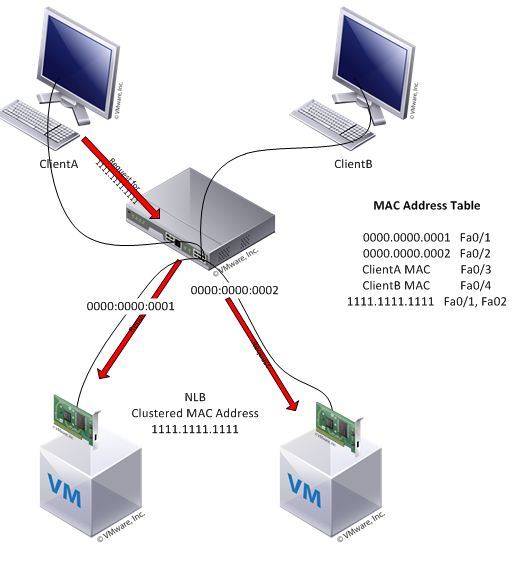

Multicast mode works a bit differently and is the solution VMware recommends for using NLB. The main difference is that in Multicast mode the NICs keep their original MAC addresses in addition to the cluster MAC address, and can still communicate using them. Since the VMs can communicate over both their own and the cluster addresses, there is no need for a secondary network card for communication as there is in Unicast mode.

When a Multicast mode VM sends traffic to the network, the switch sees the VM’s own MAC address and not the cluster address, and adds that address to its MAC address table as usual. The switch will likely drop the cluster’s ARP replies, however, because they pair a unicast IP address with a multicast MAC address. For NLB to work correctly, the MAC address of the cluster needs to be entered into the switch manually so that the switch forwards the frames properly. This is detailed in VMware KB 1006525.
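Whether a MAC address counts as multicast is decided by a single bit: the least significant bit of the first octet (the I/G bit). A minimal sketch of that check, using the addresses from this post, is below; the helper names `parse_mac` and `is_group_mac` are made up for the example.

```python
def parse_mac(text):
    """Convert a MAC in Cisco dotted, colon, or hyphen notation to 6 raw bytes."""
    hex_digits = text.replace(".", "").replace(":", "").replace("-", "")
    if len(hex_digits) != 12:
        raise ValueError(f"not a 48-bit MAC address: {text!r}")
    return bytes.fromhex(hex_digits)


def is_group_mac(text):
    """True when the I/G bit (lowest bit of the first octet) is set."""
    return bool(parse_mac(text)[0] & 0x01)


print(is_group_mac("1111.1111.1111"))  # True: first octet 0x11 has its low bit set
print(is_group_mac("0000.0000.0001"))  # False: first octet 0x00
```

A switch that sees an ARP reply mapping a unicast IP to an address for which this check is true may refuse to install it dynamically, which is why the static entries are needed.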

Here we can see that if ClientA makes a request to the address 1111.1111.1111, the switch forwards it to the correct hosts.

The important thing to remember is to add static ARP and MAC address entries to the physical switch so that it forwards the frames correctly. Assuming my example above uses a Cisco switch, I would run something like the following:

conf t

arp 10.10.10.10 1111.1111.1111 ARPA

mac-address-table static 1111.1111.1111 vlan 100 int fa0/1 fa0/2
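If you need these entries for several clusters, the commands above can be templated. The following is a small sketch that only formats the same three lines shown here; the function name `nlb_static_entries` and its parameters are hypothetical, and the exact command syntax may differ between IOS versions, so check the output against your own platform before pasting it into a switch.

```python
import ipaddress


def nlb_static_entries(cluster_ip, cluster_mac, vlan, interfaces):
    """Return the config lines for a static ARP entry and a static MAC entry."""
    ipaddress.ip_address(cluster_ip)  # raises ValueError on a malformed IP
    if not interfaces:
        raise ValueError("at least one interface is required")
    return [
        "conf t",
        f"arp {cluster_ip} {cluster_mac} ARPA",
        f"mac-address-table static {cluster_mac} vlan {vlan} int {' '.join(interfaces)}",
    ]


# Values taken from the example in this post.
for line in nlb_static_entries("10.10.10.10", "1111.1111.1111", 100, ["fa0/1", "fa0/2"]):
    print(line)
```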

Once the static ARP and static MAC entries are in place on the switch, the vSwitch teaming policy should have Notify Switches set to Yes.