I’ve never paid much attention to the different types of RAM (Random Access Memory) during my tenure as a Systems Engineer, but I wonder how much troubleshooting time it would have saved me. This post is not only an attempt to educate other technicians but also an opportunity to refine my own knowledge of the subject.

There are three main categories that I want to review: ECC vs Non-ECC, Unbuffered vs Registered memory, and Memory Rank.

ECC vs Non-ECC Memory

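ECC stands for Error Correcting Code. ECC memory stores extra check bits alongside the data. These bits are computed with XOR (parity) operations, much like the parity used in RAID, so the memory controller can detect and repair errors. The common scheme for each 64-bit word can correct any single-bit error and detect, but not correct, a double-bit error.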

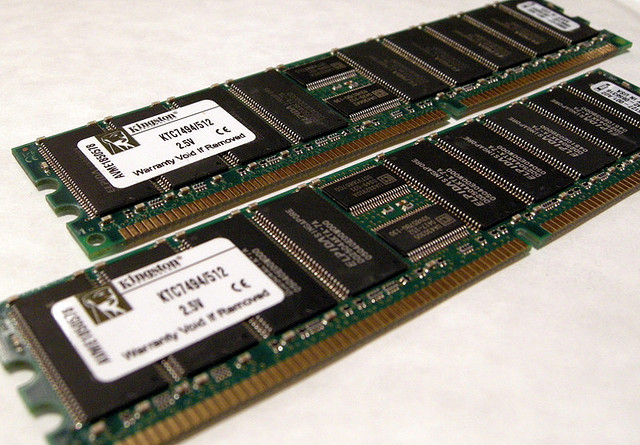

It’s usually easy to spot ECC memory: count the memory chips on the module, and if the number divides evenly by three (9 or 18 rather than 8 or 16), it’s usually ECC. This is because manufacturers add one extra chip for every eight data chips to hold the check bits, widening each 64-bit data word to 72 bits. (Wouldn’t it be nice to get disk manufacturers to do the same!)

ECC RAM with 9 chips
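
To make the XOR idea concrete, here is a minimal Python sketch of a single-error-correcting, double-error-detecting (SECDED) Hamming code over one 64-bit word, assuming a 72-bit layout of 64 data bits, 7 check bits and 1 overall parity bit. It only illustrates the technique; real memory controllers do this in hardware and may arrange the bits differently, and `encode` and `decode` are hypothetical names. The check bits are chosen so that XOR-ing the positions of all set bits gives zero, so after a single flip the same XOR points straight at the broken bit.

```python
from typing import List, Optional, Tuple

# Assumed layout: 64 data bits + 7 Hamming check bits + 1 overall parity bit = 72 bits.
DATA_BITS = 64
TOTAL_BITS = 72


def _is_power_of_two(n: int) -> bool:
    return n > 0 and (n & (n - 1)) == 0


# Positions 1..71 hold the Hamming code: check bits sit at powers of two
# (1, 2, 4, ..., 64) and data bits fill the rest. Position 0 is the overall parity bit.
DATA_POSITIONS = [p for p in range(1, TOTAL_BITS) if not _is_power_of_two(p)]
assert len(DATA_POSITIONS) == DATA_BITS


def _syndrome(bits: List[int]) -> int:
    """XOR together the positions of every set bit in the Hamming part of the word."""
    s = 0
    for pos in range(1, TOTAL_BITS):
        if bits[pos]:
            s ^= pos
    return s


def encode(data: int) -> List[int]:
    """Hypothetical helper: turn a 64-bit integer into a 72-bit SECDED codeword."""
    bits = [0] * TOTAL_BITS
    for k, pos in enumerate(DATA_POSITIONS):
        bits[pos] = (data >> k) & 1
    s = _syndrome(bits)  # check bits are still 0, so this covers the data bits only
    p = 1
    while p < TOTAL_BITS:
        # Setting check bit p XORs p into the syndrome, which drives it back to 0.
        bits[p] = 1 if s & p else 0
        p <<= 1
    bits[0] = sum(bits[1:]) % 2  # overall parity makes the total number of 1s even
    return bits


def decode(bits: List[int]) -> Tuple[Optional[int], str]:
    """Hypothetical helper: return (data, status), status being 'ok', 'corrected' or 'uncorrectable'."""
    bits = list(bits)  # work on a copy so the caller's word is untouched
    s = _syndrome(bits)
    overall = sum(bits) % 2
    if s == 0 and overall == 0:
        status = "ok"
    elif overall == 1 and s < TOTAL_BITS:
        # Odd number of flips: assume exactly one, located at position s
        # (s == 0 means the overall parity bit itself flipped).
        bits[s] ^= 1
        status = "corrected"
    else:
        # Even parity with a nonzero syndrome means two bits flipped:
        # SECDED can detect this but cannot tell which bits to repair.
        return None, "uncorrectable"
    data = 0
    for k, pos in enumerate(DATA_POSITIONS):
        data |= bits[pos] << k
    return data, status
```

For example, encoding `0xDEADBEEFCAFEF00D`, flipping one bit and decoding returns the original word with status `corrected`; flipping a second bit in the same word makes `decode` report `uncorrectable`, which is the point where a real system would log a fatal memory error instead of silently using bad data.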

So ECC corrects errors and should be used in all cases, right? Not necessarily; there is still a place for non-ECC memory. Non-ECC memory is usually cheaper and is fine for desktops, where the worst case is that an application crashes or the machine needs to be restarted. Servers, especially virtualization hosts, should use ECC to prevent application crashes caused by memory errors.

ECC also carries a small performance penalty for the extra checking, often quoted at around 2%. That is a small price to pay for stability.

Unbuffered vs Registered ECC Memory

These days, the memory controller is built into the CPU, which means the CPU has to manage the memory. There are a couple of ways this can happen.

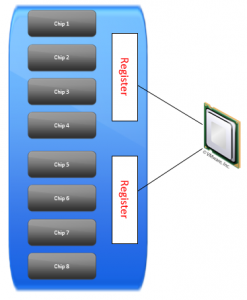

With unbuffered memory, the memory controller in the CPU has to drive all of the chips on the module directly, as shown in the diagram above.

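Registered memory takes some of that load off the memory controller by adding a register chip to the module. The register buffers the address and command signals and re-drives them to the memory chips, so the controller only has to drive the register.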

Unbuffered memory configurations connect the controller directly to the chips on the memory module.

The main benefit of registered memory is that the memory controller has fewer electrical loads to drive, which allows more modules, and therefore larger amounts of RAM, to be installed in a system.

The downside is that registered memory is a bit slower, because the register adds an extra clock cycle before each command reaches the memory chips.
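
The trade-off can be sketched with a deliberately simplified Python model. It assumes that on an unbuffered module every chip is a load on the address/command lines, that on a registered module the register is the only such load, and that the register adds exactly one cycle. Data lines and real electrical details are ignored, and both function names are hypothetical.

```python
def command_address_loads(modules: int, chips_per_module: int, registered: bool) -> int:
    """Simplified model: how many devices the controller's address/command lines drive.

    Unbuffered: every DRAM chip on every module is a load.
    Registered: each module's register is the only load; it re-drives the signals
    to that module's DRAM chips. Data lines are not modeled.
    """
    per_module = 1 if registered else chips_per_module
    return modules * per_module


def read_latency_cycles(cas_latency: int, registered: bool) -> int:
    """Total read latency in clock cycles, assuming the register adds one cycle."""
    return cas_latency + (1 if registered else 0)
```

With four 18-chip modules, the controller sees 72 loads unbuffered versus 4 registered, and a CAS latency of 9 cycles becomes 10 cycles once the register is in the path.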

Memory Rank

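Memory rank refers to the number of independent 64-bit data blocks (72-bit if the memory is ECC) on a module. A rank is a set of chips that together supply the full width of the data bus and are activated by the same chip select signal. A module with two such sets, each on its own chip select and sharing the same data bus, is considered dual rank.

Ranks are a good way for manufacturers to fit more memory into a system, but each additional rank adds electrical load to the memory bus, which can slow the memory down. For this reason, single-rank memory is usually the fastest.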
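
The rank count follows from simple arithmetic: multiply the number of memory chips by the width of each chip (often shown on the module label as x4 or x8, e.g. "2Rx8") and divide by the bus width. Here is a Python sketch, assuming every memory chip on the module has the same width and counting only memory chips, not the register or other support chips; `ranks_on_module` is a hypothetical helper.

```python
def ranks_on_module(chip_count: int, chip_width_bits: int, ecc: bool) -> int:
    """Number of ranks = total data bits supplied by the chips / data bus width.

    The bus is 64 bits wide, or 72 bits with ECC. Assumes all chips share one width.
    """
    bus_width = 72 if ecc else 64
    total_bits = chip_count * chip_width_bits
    if total_bits % bus_width:
        raise ValueError("chip count and width do not fill a whole number of ranks")
    return total_bits // bus_width
```

For example, 18 x8 chips on an ECC module make 2 ranks, while 18 x4 chips make a single rank, so chip count alone does not tell you the rank.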

CAS Latency

You’ve probably heard of CAS latency as well, but maybe didn’t know what it was. That probably wasn’t a problem, because as the name suggests, lower latency is better.

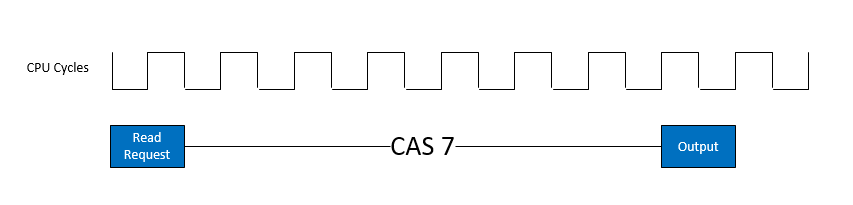

CAS latency stands for Column Address Strobe latency. What’s important to know is that it is the number of memory clock cycles between when a read command is issued and when the data is output.

In the example above, a read request is made and it takes seven clock cycles for the data to be output. To put that in perspective, a CAS latency of 9 would take two extra clock cycles to complete the same operation, assuming the memory runs at the same clock speed.
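
Because CAS latency is counted in memory clock cycles, the actual delay depends on the memory clock, not the processor clock. A quick Python sketch of the conversion (the function name is hypothetical): one cycle lasts 1000 / clock_MHz nanoseconds. The 800 MHz clock used below is only an assumed example; it is the bus clock of DDR3-1600.

```python
def cas_latency_ns(cas_cycles: int, memory_clock_mhz: float) -> float:
    """Convert CAS latency from memory clock cycles to nanoseconds.

    memory_clock_mhz is the memory bus clock (for DDR, half the MT/s rating),
    not the CPU clock. One cycle lasts 1000 / memory_clock_mhz ns.
    """
    return cas_cycles * 1000.0 / memory_clock_mhz
```

At 800 MHz, CL7 works out to 8.75 ns and CL9 to 11.25 ns, so the two extra cycles cost 2.5 ns.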

SDRAM vs DDR

In the CAS latency example above, I showed how read requests depend on clock cycles. The main difference between SDRAM and DDR is that DDR transfers data on both the rising and falling edges of the clock signal, whereas SDRAM transfers data only on the rising edge. This is why DDR stands for Double Data Rate: it moves twice as much data per clock cycle.
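
The effect on throughput is easy to estimate: peak bandwidth is clock rate times transfers per clock times bytes per transfer. This Python sketch assumes the standard 64-bit (8-byte) module data bus and gives theoretical peaks only; `peak_bandwidth_mb_s` is a hypothetical name.

```python
def peak_bandwidth_mb_s(bus_clock_mhz: float, transfers_per_clock: int,
                        bus_width_bits: int = 64) -> float:
    """Theoretical peak bandwidth in MB/s.

    SDRAM makes 1 transfer per clock (rising edge only); DDR makes 2 (both edges).
    """
    million_transfers_per_s = bus_clock_mhz * transfers_per_clock
    return million_transfers_per_s * bus_width_bits / 8
```

With a 100 MHz clock, SDRAM peaks at 800 MB/s while DDR reaches 1,600 MB/s on the same clock.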

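DDR2 and DDR3 are later generations of the DDR standard. They gain bandwidth by fetching more data from the memory array on each internal access (a prefetch of 4n for DDR2 and 8n for DDR3, versus 2n for DDR) and running the I/O bus at a higher multiple of the internal clock.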
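
One way to see where each generation's extra bandwidth comes from is the prefetch depth: the I/O data rate equals the internal (core) array clock times the number of bits prefetched per data pin. A small Python sketch under that assumption; the table and `data_rate_mt_s` are hypothetical names, and the 200 MHz core clock is just an example.

```python
# Prefetch depth (bits fetched per data pin per internal access) for each generation.
PREFETCH = {"SDRAM": 1, "DDR": 2, "DDR2": 4, "DDR3": 8}


def data_rate_mt_s(generation: str, core_clock_mhz: float) -> float:
    """I/O data rate in MT/s = internal array clock x prefetch depth."""
    return core_clock_mhz * PREFETCH[generation]
```

At a 200 MHz core clock this gives 400 MT/s for DDR, 800 MT/s for DDR2 and 1600 MT/s for DDR3, which matches the DDR-400, DDR2-800 and DDR3-1600 speed grades.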