The question of how many clusters to use comes up in every vSphere design. There isn’t a standard number; it should be evaluated based on several factors.

Number of Hosts

Let’s start with the basics. If the design calls for more virtual machines than can fit into a single cluster, then multiple clusters must be used. The same is true for a design that calls for more hosts than can fit into a single cluster, or that exceeds any other cluster maximum.

| | vSphere 6 Maximums | vSphere 5.5 Maximums |
| --- | --- | --- |
| Hosts per Cluster | 64 | 32 |
| VMs per Cluster | 8000 | 4000 |

Maybe the design is simple enough that 128 ESXi 6.0 hosts can just be split into two 64-host clusters. Designs are rarely this simple, so we’ll look at some other considerations.
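As a rough starting point, the lower bound on cluster count can be worked out from the maximums listed above. The sketch below is only an illustration: the `min_clusters` function and the `MAXIMUMS` dictionary are names made up for this example, and it only considers the two limits mentioned here (hosts and VMs per cluster), not any of the other considerations that follow.

```python
import math

# Cluster maximums quoted above (hosts and VMs per cluster).
# Hypothetical structure for this sketch, not a VMware API.
MAXIMUMS = {
    "6.0": {"hosts": 64, "vms": 8000},
    "5.5": {"hosts": 32, "vms": 4000},
}


def min_clusters(hosts, vms, version="6.0"):
    """Return the smallest cluster count that respects both maximums."""
    limits = MAXIMUMS[version]
    by_hosts = math.ceil(hosts / limits["hosts"])
    by_vms = math.ceil(vms / limits["vms"])
    # Whichever limit is hit first drives the answer; never fewer than one.
    return max(by_hosts, by_vms, 1)


if __name__ == "__main__":
    # The 128-host example from the text, with an assumed 10,000 VMs.
    print(min_clusters(128, 10000, "6.0"))
    print(min_clusters(128, 10000, "5.5"))
```

Treat the result as a floor, not a recommendation. The rest of the design factors usually push the number higher.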

Licensing

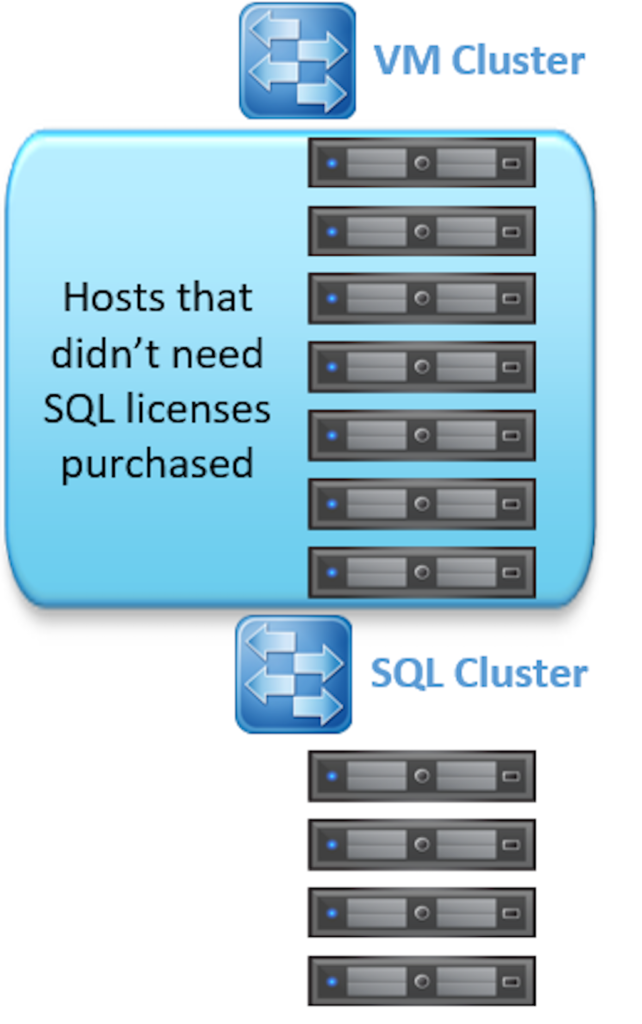

Software vendors have adapted to dealing with virtual infrastructures. Some software vendors even require you to license every host that might house their software, even if the software is in a virtual machine. With Distributed Resource Scheduling (DRS) we’re used to vMotioning workloads between hosts to better utilize our resources, but software licensing can have a serious cost associated with it if you have to license every host in the environment.

If you run software that is licensed this way, such as Microsoft SQL Server, consider building a dedicated SQL cluster: license only the hosts in that cluster and place all of your SQL VMs there. This can dramatically cut down on licensing costs.
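To see why a dedicated cluster helps, here is a minimal sketch that counts the hosts needing a license. It assumes the vendor requires a license for every host a VM could land on, and that DRS can move a VM to any host in its cluster. The cluster names, host names, VM names, and the `hosts_to_license` function are all hypothetical.

```python
def hosts_to_license(clusters, vm_placement, licensed_vms):
    """Return the sorted hosts that could end up running a licensed VM.

    clusters:      dict of cluster name -> list of host names
    vm_placement:  dict of VM name -> cluster name
    licensed_vms:  VM names running the licensed software
    """
    # DRS may move a VM anywhere inside its cluster, so every host in a
    # cluster that contains a licensed VM has to be counted.
    affected_clusters = {vm_placement[vm] for vm in licensed_vms}
    return sorted(h for c in affected_clusters for h in clusters[c])


# Hypothetical environment: one general cluster and one small SQL cluster.
clusters = {
    "general": ["esx01", "esx02", "esx03", "esx04"],
    "sql": ["esx05", "esx06"],
}

# SQL VMs scattered across both clusters: every host needs a license.
scattered = {"sql-vm1": "general", "sql-vm2": "sql"}
# SQL VMs consolidated into the SQL cluster: only its hosts need one.
consolidated = {"sql-vm1": "sql", "sql-vm2": "sql"}

if __name__ == "__main__":
    print(len(hosts_to_license(clusters, scattered, scattered.keys())))
    print(len(hosts_to_license(clusters, consolidated, consolidated.keys())))
```

Actual licensing terms vary by vendor and edition, so check the agreement before relying on a count like this.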

Host Types

Maybe you don’t have the luxury of buying new servers as part of the design and you have to re-use what already exists. This presents a new challenge.

Hosts with different processor types may need to be placed into different clusters. If the processors differ by vendor (AMD and Intel), VMs can’t be vMotioned between them without being powered off first. This would really hamper things like DRS, so it makes sense to have an AMD cluster and an Intel cluster. If the processors are from the same vendor, you can get away with turning on Enhanced vMotion Compatibility (EVC), which masks the capabilities of newer processors so that every host presents the same baseline instruction set. Depending on the situation, you might still want to arrange clusters by processor type so that EVC doesn’t have to mask newer features from the latest processors.

Wasted Resources

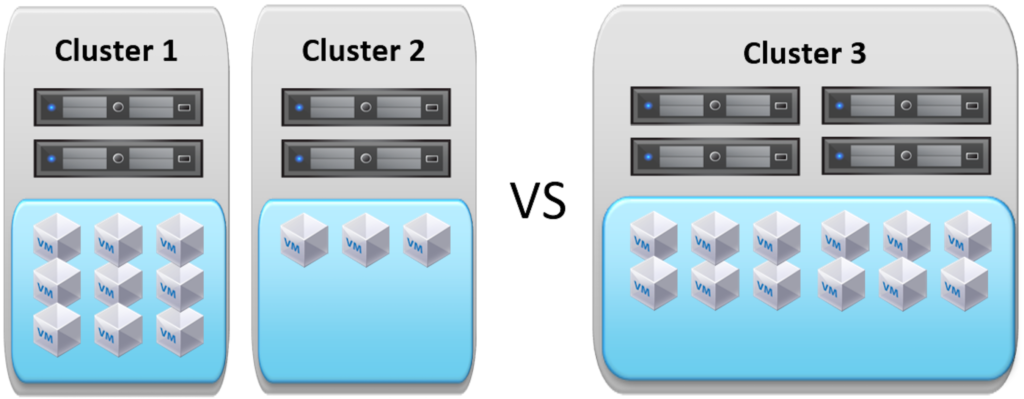

As a general rule, fewer, larger clusters are better for performance than several smaller clusters. A simple example is demonstrated below. On the left, four hosts are split between two clusters. We would start to encounter performance issues if we tried to add another virtual machine to cluster one, even though there are plenty of resources in cluster two. If we combine all four hosts into a single cluster, like the example on the right, there are plenty of resources left to deploy new workloads. In addition, a single cluster keeps things simple for an administrator deploying a new workload by removing the need for a placement decision.

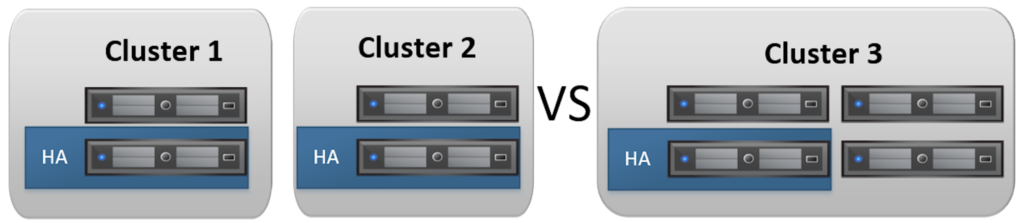

Take a look at the previous example again and consider how many hosts would be needed to protect the virtual machines through VMware HA. Clusters one and two would each need to reserve one host, or 50% of their resources, while cluster three would only need one host, or 25% of its resources.
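The HA arithmetic above can be sketched in a few lines. This assumes identically sized hosts and a simple "reserve N whole hosts per cluster" model. The function names are made up for this example and don't correspond to any vSphere setting or API.

```python
def ha_reserved_fraction(hosts_in_cluster, host_failures_to_tolerate=1):
    """Fraction of a cluster set aside to absorb host failures."""
    if hosts_in_cluster <= host_failures_to_tolerate:
        raise ValueError("cluster is too small to tolerate that many failures")
    return host_failures_to_tolerate / hosts_in_cluster


def usable_hosts(cluster_sizes, host_failures_to_tolerate=1):
    """Hosts' worth of capacity left after each cluster reserves for HA."""
    return sum(size - host_failures_to_tolerate for size in cluster_sizes)


if __name__ == "__main__":
    # Two 2-host clusters: each reserves half of its capacity.
    print(ha_reserved_fraction(2), usable_hosts([2, 2]))
    # One 4-host cluster: a quarter is reserved, leaving three hosts' worth.
    print(ha_reserved_fraction(4), usable_hosts([4]))
```

With the same four hosts, the single cluster leaves three hosts' worth of usable capacity, while the two smaller clusters leave only two.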

Management Cluster

It might be a good idea to have one cluster that is dedicated to housing management components. Often the components responsible for managing the environment shouldn’t live in the cluster that they manage. A great example of this is NSX. If you set the wrong firewall rule for the cluster, you may inadvertently firewall yourself off from the NSX management components and won’t be able to easily fix it. An additional benefit of a management cluster is that it usually has only a couple of hosts, so it’s easy to find a tier 0 virtual machine in the event that vCenter is down.

Physical Location

It’s a no-brainer that slow links between hosts would be a good reason to separate hosts into different clusters. A vMotion over a slow T-1 link would really ruin your day. This could also come into consideration if you’ve got a converged infrastructure and want to keep resources from traversing pods. For example, keeping all of your hosts that connect to the same pair of Cisco Fabric Interconnects in a UCS environment might be a good idea to limit the number of network hops.

Summary

There are a lot of considerations to take into account when picking how many clusters to use. Hopefully this has given you some ideas on how to lay them out for your design.