It’s time to look closer at how we access our containers from outside the Kubernetes cluster. We’ve talked about Services with NodePorts, LoadBalancers, etc., but a better way to handle ingress might be to use an ingress-controller to proxy our requests to the right backend service. This post will take us through how to integrate an ingress-controller into our Kubernetes cluster.

Ingress Controllers - The Theory

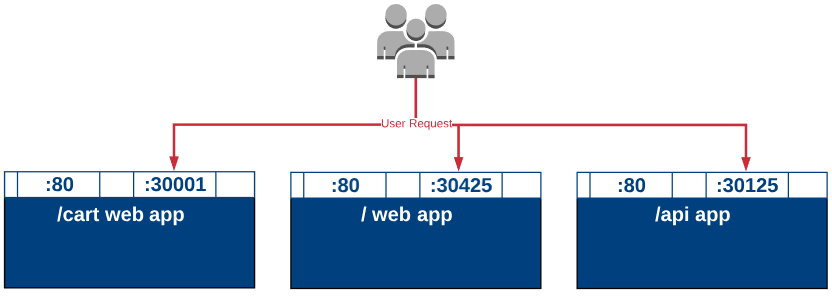

Let’s first talk about why we’d want to use an ingress controller in the first place. Take an example web application like you might have for a retail store. That web application might have an index page at “http://store-name.com/”, a shopping cart page at “http://store-name.com/cart” and an API URI at “http://store-name.com/api”. We could build all of these in a single container, but perhaps each of them becomes its own set of pods so that they can all scale out independently. If the API needs more resources, we can just increase the number of pods and nodes for the api service and leave the / and the /cart services alone. It also allows multiple groups to work on different parts simultaneously, but we’re starting to drift off the point, which hopefully you get by now.

OK, so assuming we have these different services, if we’re in an on-prem Kubernetes cluster we’d need to expose each of those services to the external networks. We’ve done this in the past with a NodePort. The problem is, we can’t expose each app individually and have it work well, because each service would need a different port. Imagine your users having to know which port to use for each part of your application. It would be unusable.
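To make the problem concrete, here is a rough sketch of what exposing two parts of the store through separate NodePort Services could look like. The Service names, labels, and port numbers are hypothetical and chosen only for illustration; the point is that every Service ends up on its own port.

```yaml
# Hypothetical example: one NodePort Service per part of the store.
# Names, labels and ports are assumptions, not taken from a real app.
---
apiVersion: v1
kind: Service
metadata:
  name: store-cart
spec:
  type: NodePort
  ports:
  - port: 80
    nodePort: 30001   # users would browse to http://<node>:30001
  selector:
    app: store-cart
---
apiVersion: v1
kind: Service
metadata:
  name: store-api
spec:
  type: NodePort
  ports:
  - port: 80
    nodePort: 30002   # ...and to http://<node>:30002 for the API
  selector:
    app: store-api
```

With this layout the cart and the API can no longer share the same host name and port as the index page, which is exactly what we want to avoid.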

A much simpler solution would be to have a single point of ingress (you see where I’m going) and have this “ingress-controller” define where the traffic should be routed.

Ingress with a Kubernetes cluster comes in two parts.

- Ingress Controller

- Ingress Resource

The ingress-controller is responsible for doing the routing to the right places and can be thought of like a load balancer. It can route requests to the right place based on a set of rules applied to it. These rules are called an “ingress resource.” If you see ingress as part of a Kubernetes manifest, it’s likely not an ingress controller, but a rule that should be applied on the controller to route new requests. So the ingress controller is likely running in the cluster all the time, and when you have new services, you just apply the rule so that the controller knows where to proxy requests for that service.
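As a sketch of what such a rule might look like for the retail store example, the ingress resource below sends each URL path to its own Service. The Service names and port 80 are hypothetical, and the apiVersion and field names simply mirror the ingress manifest used later in this post, so adjust them to whatever your cluster version expects.

```yaml
# Hypothetical ingress resource for the retail store example.
# store-index, store-cart and store-api are assumed Service names.
apiVersion: networking.k8s.io/v1beta1
kind: Ingress
metadata:
  name: store
spec:
  rules:
  - host: store-name.com
    http:
      paths:
      - path: /          # index page
        backend:
          serviceName: store-index
          servicePort: 80
      - path: /cart      # shopping cart
        backend:
          serviceName: store-cart
          servicePort: 80
      - path: /api       # API
        backend:
          serviceName: store-api
          servicePort: 80
```

Users only ever see a single host name and port, and the controller decides which backend Service receives each request.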

Ingress Controllers - In Action

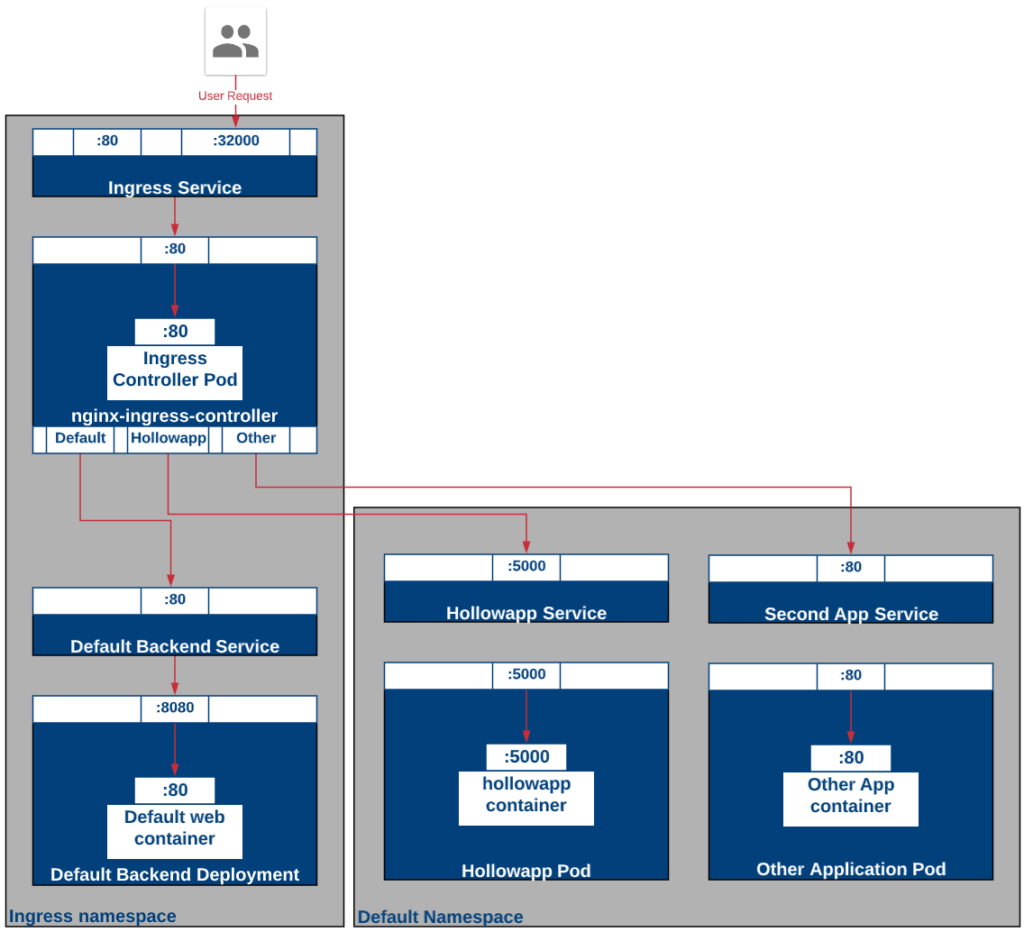

For this scenario we’re going to deploy the objects depicted in this diagram. We’ll have an ingress controller, a default backend, and two apps with different host names. Also notice that we’ll be using an NGINX ingress controller and that it is set up in a different namespace, which helps keep the cluster secure and clean for anyone who shouldn’t need to see that controller deployment.

First, we’ll set the stage by deploying the namespace where the ingress controller will live. To do that, let’s start with a manifest file for our namespace.

---
apiVersion: v1
kind: Namespace
metadata:
  name: ingress

Once you deploy your namespace, we can move on to our configmap that has information about our environment, used by our ingress controller when it starts up.

---
apiVersion: v1
kind: ConfigMap
metadata:
  name: nginx-ingress-controller-conf
  labels:
    app: nginx-ingress-lb
  namespace: ingress
data:
  enable-vts-status: 'true'

Lastly, before we get to our ingress objects, we need to deploy a service account with permissions to deploy and read information from the Kubernetes cluster. Here is a manifest that can be used.

NOTE: If you’re following this series, you may not know what a service account is yet. For now, think of the service account as an object with permissions attached to it. It’s a way for the Ingress controller to get permissions to interact with the Kubernetes API.

---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: nginx
  namespace: ingress
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: nginx-role
  namespace: ingress
rules:
- apiGroups:
  - ""
  resources:
  - configmaps
  - endpoints
  - nodes
  - pods
  - secrets
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - services
  verbs:
  - get
  - list
  - update
  - watch
- apiGroups:
  - extensions
  resources:
  - ingresses
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - events
  verbs:
  - create
  - patch
- apiGroups:
  - extensions
  resources:
  - ingresses/status
  verbs:
  - update
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: nginx-role
  namespace: ingress
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: nginx-role
subjects:
- kind: ServiceAccount
  name: nginx
  namespace: ingress

Now that those prerequisites are deployed, we’ll deploy a default backend for our controller. This is just a pod that will handle any request for which there is no known route. If someone accessed our cluster at http://clusternodeandport/bananas, we wouldn’t have a route that handled that, so we’d point it to the default backend, which returns a 404 error. NGINX has a sample backend that you can use, and that code is listed below. Just deploy the manifest to the cluster.

---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: default-backend
  namespace: ingress
spec:
  replicas: 1
  selector:
    matchLabels:
      app: default-backend
  template:
    metadata:
      labels:
        app: default-backend
    spec:
      terminationGracePeriodSeconds: 60
      containers:
      - name: default-backend
        image: gcr.io/google_containers/defaultbackend:1.0
        livenessProbe:
          httpGet:
            path: /healthz
            port: 8080
            scheme: HTTP
          initialDelaySeconds: 30
          timeoutSeconds: 5
        ports:
        - containerPort: 8080
        resources:
          limits:
            cpu: 10m
            memory: 20Mi
          requests:
            cpu: 10m
            memory: 20Mi
---
apiVersion: v1
kind: Service
metadata:
  name: default-backend
  namespace: ingress
spec:
  ports:
  - port: 80
    protocol: TCP
    targetPort: 8080
  selector:
    app: default-backend

kubectl apply -f [manifest file].yml

Now that the backend service and pods are configured, it’s time to deploy the ingress controller through another deployment file. Here is a deployment of the Ingress Controller for NGINX.

---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-ingress-controller
  namespace: ingress
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx-ingress-lb
  revisionHistoryLimit: 3
  template:
    metadata:
      labels:
        app: nginx-ingress-lb
    spec:
      terminationGracePeriodSeconds: 60
      serviceAccount: nginx
      containers:
      - name: nginx-ingress-controller
        image: quay.io/kubernetes-ingress-controller/nginx-ingress-controller:0.9.0
        imagePullPolicy: Always
        readinessProbe:
          httpGet:
            path: /healthz
            port: 10254
            scheme: HTTP
        livenessProbe:
          httpGet:
            path: /healthz
            port: 10254
            scheme: HTTP
          initialDelaySeconds: 10
          timeoutSeconds: 5
        args:
        - /nginx-ingress-controller
        - --default-backend-service=$(POD_NAMESPACE)/default-backend
        - --configmap=$(POD_NAMESPACE)/nginx-ingress-controller-conf
        - --v=2
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        ports:
        - containerPort: 80
        - containerPort: 18080

To deploy your manifest, it’s again the same command:

kubectl apply -f [manifest file].yml

Congratulations, your controller is deployed. Let’s now deploy a Service that exposes the ingress controller to the outside world, in our case through a NodePort.

---
apiVersion: v1
kind: Service
metadata:
  name: nginx
  namespace: ingress
spec:
  type: NodePort
  ports:
  - port: 80
    name: http
    nodePort: 32000
  - port: 18080
    name: http-mgmt
  selector:
    app: nginx-ingress-lb

Deploy the Service with:

kubectl apply -f [manifest file].yml

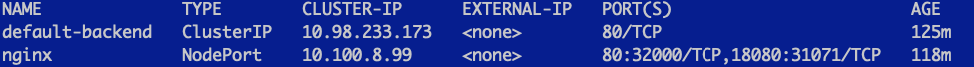

The service just deployed publishes itself on port 32000. This was hard coded into the yaml manifest that was just deployed. If you’d like to check the service to make sure, run:

kubectl get svc --namespace=ingress

As you can see from my screenshot, the outside port for http traffic is 32000. This is because I’ve hard coded port 32000 as a NodePort in the ingress-svc manifest. Be careful doing this, as only one service in the cluster can use this port. You can remove the nodePort field, but you will need to look up the port assigned to this service if you do. Hard coding this port into the manifest was done to simplify these instructions and make things clearer as you follow along.
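If you would rather not pin the port, a sketch of the same Service without the hard coded value might look like the following. It is the manifest from above with only the nodePort line removed; Kubernetes should then assign a free port from its NodePort range, and the kubectl get svc command shown earlier will tell you which one it picked.

```yaml
# Same Service as above, minus the hard coded nodePort.
# Kubernetes assigns a port from the cluster's NodePort range instead.
---
apiVersion: v1
kind: Service
metadata:
  name: nginx
  namespace: ingress
spec:
  type: NodePort
  ports:
  - port: 80
    name: http        # no nodePort here, so one is assigned for us
  - port: 18080
    name: http-mgmt
  selector:
    app: nginx-ingress-lb
```

The trade-off is that the rest of this walkthrough assumes port 32000, so you would substitute the assigned port wherever that number appears.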

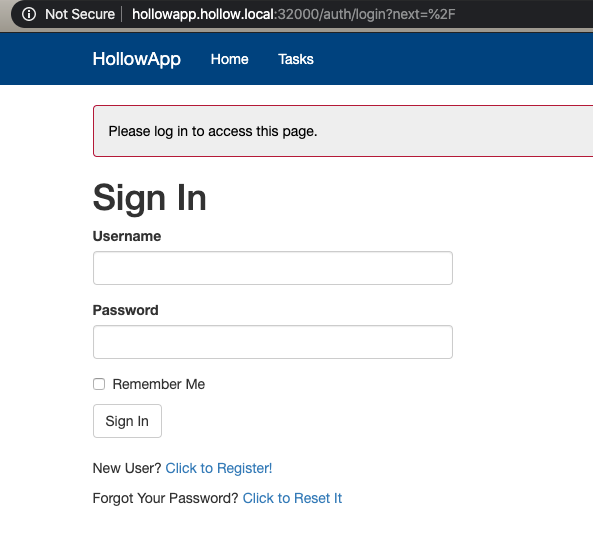

Now we’ll deploy our application called hollowapp. The manifest file below has a standard Deployment and a Service (that isn’t exposed externally). It also has a new resource of kind “Ingress”, which is our ingress rule to be applied on our controller. The main thing is that anyone who accesses the ingress-controller with a host name of hollowapp.hollow.local will have their traffic routed to our service. This means we need to set up a DNS record that points at our Kubernetes cluster for this resource. I’ve done this in my lab, and you can change this to meet your own needs in your lab with your own app.

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: hollowapp
  name: hollowapp
spec:
  replicas: 1
  selector:
    matchLabels:
      app: hollowapp
  strategy:
    type: Recreate
  template:
    metadata:
      labels:
        app: hollowapp
    spec:
      containers:
      - name: hollowapp
        image: theithollow/hollowapp-blog:allin1-v2
        imagePullPolicy: Always
        ports:
        - containerPort: 5000
        env:
        - name: SECRET_KEY
          value: "my-secret-key"
---
apiVersion: v1
kind: Service
metadata:
  name: hollowapp
  labels:
    app: hollowapp
spec:
  type: ClusterIP
  ports:
  - port: 5000
    protocol: TCP
    targetPort: 5000
  selector:
    app: hollowapp
---
apiVersion: networking.k8s.io/v1beta1
kind: Ingress # ingress resource
metadata:
  name: hollowapp
  labels:
    app: hollowapp
spec:
  rules:
  - host: hollowapp.hollow.local # only match connections to hollowapp.hollow.local
    http:
      paths:
      - path: / # root path
        backend:
          serviceName: hollowapp
          servicePort: 5000

Apply the manifest above, or a modified version for your own environment with the command below:

kubectl apply -f [manifest file].yml
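The diagram shows two apps with different host names, while the manifest above deploys only one. As a sketch, a rule for a second app could look like the following. The name otherapp, its host name, and its port are hypothetical, and the rule assumes a Deployment and ClusterIP Service for that app already exist in the same namespace.

```yaml
# Hypothetical ingress rule for a second app behind the same controller.
# "otherapp", its host name and port 5000 are assumptions for illustration.
apiVersion: networking.k8s.io/v1beta1
kind: Ingress
metadata:
  name: otherapp
  labels:
    app: otherapp
spec:
  rules:
  - host: otherapp.hollow.local # only match connections to this host name
    http:
      paths:
      - path: /
        backend:
          serviceName: otherapp
          servicePort: 5000
```

Both host names would point at the same cluster address and NodePort; the controller uses the host name in the request to choose the backend.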

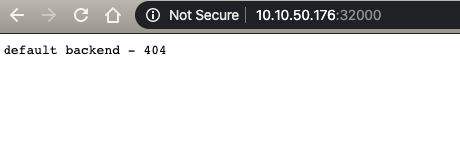

Now we can test out our deployment in a web browser. First, let’s make sure that something is being returned when we hit the Kubernetes ingress controller via the web browser; I’ll use the IP address in the URL.

So that is the default backend providing a 404 error, as it should. We don’t have an ingress rule for accessing the controller via an IP address, so it used the default backend. Now try that again using the hostname that we used in the manifest file, which in my case was http://hollowapp.hollow.local.

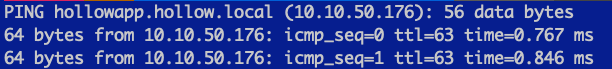

Before we do that, here’s a screenshot showing that the host name maps to the EXACT same IP address we used to test the 404 error.

When we access the cluster by the hostname, we get our new application we’ve deployed.

Summary

The examples shown in this post can be augmented by adding a load balancer outside the cluster, but you should now have a good idea of what an ingress controller can do for you. Once you’ve got it up and running, it provides a single point of access from outside the cluster with many services running behind it. Other advanced controllers exist as well, so this post should just serve as an example. Ingress controllers can do all sorts of things, including handling TLS, monitoring, session persistence and more. Feel free to check out your ingress options from all sorts of sources, including NGINX, Heptio, Traefik and others.