There are a myriad of ways to deploy Kubernetes clusters these days.

There are plenty of options, and I’m sure you have a favorite. But for the work I’ve been doing lately, I don’t want to spend a bunch of time cloning repos, updating configs, running Ansible scripts and the like, just to get another clean Kubernetes cluster in my lab to break. So, I took the individual parts of a Kubernetes build and created a list of ordered jobs in my Jenkins server.

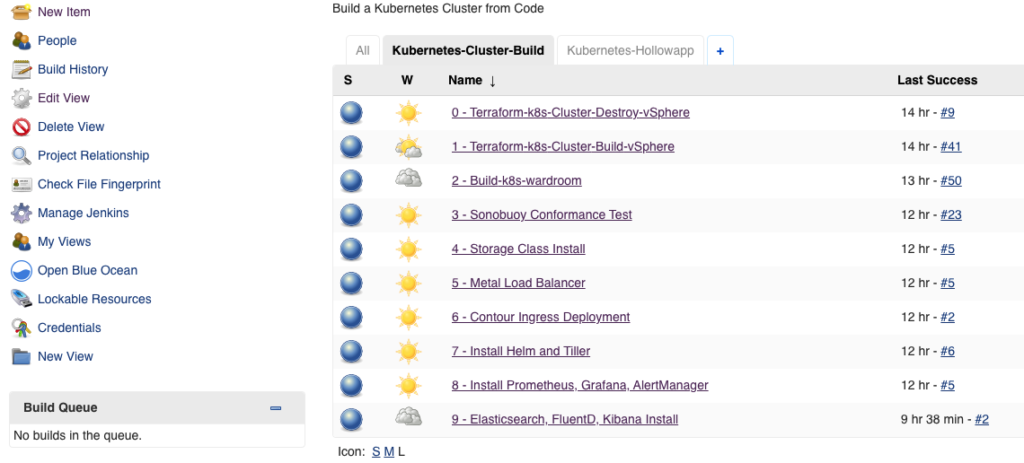

Putting jobs in a Jenkins server is certainly not a new concept, but I thought some folks might find inspiration in a walkthrough of my Kubernetes jobs. Here’s what they do:

- 0 - Terraform Cluster Destroy - Runs terraform destroy to tear down any previous Kubernetes cluster resources I may have already deployed. This is my “Start Over” button.

- 1 - Terraform k8s cluster Build - Deploys the virtual machines in my vSphere environment, including control plane and worker nodes. Every time I run this I get fresh VMs for whatever type of k8s install I want to lay down on top of them.

- 2 - Build with Wardroom - This is where I use kubeadm along with Ansible from the Wardroom project. This sets up Kubernetes on the nodes deployed in the previous step.

- 3 - Sonobuoy Conformance Tests - This job runs every time my previous job completes. It tests whether my newly deployed Kubernetes cluster is conformant. I do this before deploying any other resources on the cluster. If the cluster is broken, there’s no point in moving on to app deployments.

- 4 - Storage Class Install - Some of my apps require a storage class to be set up for my cluster. I don’t always need one, but it’s nice to have it ready instead of having to stop my application testing to go configure a storage class. Let’s do it from the start.

- 5 - Metal Load Balancer - I’m running on vSphere and I would still like to be able to use Kubernetes LoadBalancer resources like I can with a cloud provider. MetalLB lets me do this. Again, not always needed, but let’s set it up anyway.

- 6 - Contour Ingress Deployment - I’ve got a load balancer set up, so I might as well deploy an ingress controller too. Contour just went to version 1.0 and seemed like a decent choice for a default ingress controller in my lab. It’s exposed through the MetalLB deployment from the previous step.

- 7 - Install Helm and Tiller - This job deploys Tiller into my Kubernetes cluster so I can deploy any Helm charts I find interesting.

- 8 - Install Prometheus, Grafana, Alertmanager - Next, I install a monitoring stack so I have some basic monitoring I can mess with.

- 9 - Install Elasticsearch, Fluentd, Kibana - Lastly, I have a job to install an EFK stack to collect logs from my pods and critical Kubernetes components like the kubelets.
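
The ordering matters most around the conformance check: if a job fails, nothing after it should run. Here is a minimal Python sketch of that "run in order, stop at the first failure" behavior. It is not how my Jenkins jobs are wired up. The `run_in_order` function and the stand-in lambda actions are hypothetical, used only to illustrate the idea.

```python
from typing import Callable, List, Tuple

# A stage is a name plus an action that returns True on success.
Stage = Tuple[str, Callable[[], bool]]


def run_in_order(stages: List[Stage]) -> List[str]:
    """Run stages in order and stop at the first one that fails.

    Returns the names of the stages that completed successfully.
    """
    completed = []
    for name, action in stages:
        if not action():
            print(f"stopping: {name} failed")
            break
        completed.append(name)
    return completed


# Stand-in actions (assumptions): a real job would call Terraform,
# Ansible, Sonobuoy, and so on, and report whether it succeeded.
stages = [
    ("1 - Terraform k8s cluster Build", lambda: True),
    ("2 - Build with Wardroom", lambda: True),
    ("3 - Sonobuoy Conformance Tests", lambda: False),  # simulated failure
    ("4 - Storage Class Install", lambda: True),
]

print(run_in_order(stages))
```

With the simulated conformance failure, only the first two stages are reported as completed and the storage class stage never runs.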

Now, I won’t always run this whole list when I’m building a cluster. It really depends on what I’m trying to do. For example, sometimes I’ll deploy my nodes via the Terraform job so that I can then use kubeadm manually and test configs. Or maybe I’ll get Kubernetes fully deployed, but not deploy Contour, so I can manually deploy nginx or something else. Breaking these steps down into small chunks lets me pick and choose how far up the stack I want to go before I begin my testing.
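
To make the pick-and-choose idea concrete, here is a small Python sketch that selects which jobs to run given a stopping point and an optional set of jobs to skip. The job names come from the list above. The `select_jobs` helper and its parameters are hypothetical, not something that exists in Jenkins.

```python
from typing import Iterable, List

# Job names as listed above; the list position matches the job number.
JOBS = [
    "0 - Terraform Cluster Destroy",
    "1 - Terraform k8s cluster Build",
    "2 - Build with Wardroom",
    "3 - Sonobuoy Conformance Tests",
    "4 - Storage Class Install",
    "5 - Metal Load Balancer",
    "6 - Contour Ingress Deployment",
    "7 - Install Helm and Tiller",
    "8 - Install Prometheus, Grafana, Alertmanager",
    "9 - Install Elasticsearch, FluentD, Kibana",
]


def select_jobs(stop_after: int, skip: Iterable[int] = ()) -> List[str]:
    """Return the jobs numbered 0..stop_after, minus any skipped numbers."""
    skipped = set(skip)
    return [
        job
        for number, job in enumerate(JOBS)
        if number <= stop_after and number not in skipped
    ]


# Fresh VMs only, so kubeadm can be run by hand afterwards.
print(select_jobs(stop_after=1))

# Everything through Helm, but leave out Contour to try another ingress.
print(select_jobs(stop_after=7, skip=[6]))
```

The first call returns only the destroy and build jobs. The second returns jobs 0 through 7 without the Contour deployment.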

In an automated production deployment, it probably makes sense to link all these jobs together, but for a training lab it’s really convenient to pick and choose the level of completeness of a build. Maybe this will inspire one of your projects.