In the previous post we deployed an NSX Manager. Now it’s time to start configuring NSX so that we can build cool routes, firewall zones, segments, and all the other NSX goodies. And even if we don’t want to build some of these things, we’ll need this setup for vSphere 7 with Kubernetes.

Add an IP Pool

The first thing we’ll set up is an IP Pool. As you might guess, an IP Pool is just a group of IP Addresses that we can use for things. Specifically, we’ll use these IP Addresses to assign Tunnel Endpoints (called TEPs, or VTEPs in NSX-V parlance) to each of our ESXi hosts that are participating in the NSX Overlay networks. The TEP becomes the point at which encapsulation and decapsulation take place on each of the ESXi hosts. Think of it this way: when encapsulated traffic needs to be routed to a VM on a host, what IP Address do we need to send the traffic to so that it can reach that VM? This is the TEP. We need to set up a TEP on each host, and the IP Addresses for these TEPs come from an IP Pool. Since I have three hosts, and expect to deploy 1 edge node, I’ll need a TEP Pool with at least 4 IP Addresses. Size your environment appropriately.
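
If you want to sanity check the pool size before you build it, the arithmetic can be sketched in a few lines of Python. This is only a rough sketch: it assumes one TEP per node (which matches a single-uplink lab like mine), and the start and end addresses are made-up examples, not the range I actually used.

```python
import ipaddress


def tep_addresses_needed(esxi_hosts: int, edge_nodes: int, teps_per_node: int = 1) -> int:
    """Count the TEP addresses required, assuming the same TEP count on every node."""
    return (esxi_hosts + edge_nodes) * teps_per_node


def range_size(start: str, end: str) -> int:
    """Return how many addresses sit in an inclusive start-end range."""
    first = ipaddress.ip_address(start)
    last = ipaddress.ip_address(end)
    if last < first:
        raise ValueError("range end is before range start")
    return int(last) - int(first) + 1


# Three ESXi hosts plus one edge node, as described in this post.
needed = tep_addresses_needed(esxi_hosts=3, edge_nodes=1)

# Hypothetical range inside the TEP network; substitute your own.
available = range_size("10.10.200.10", "10.10.200.20")

print(f"need {needed} addresses, range holds {available}, enough: {available >= needed}")
```

Leaving a few spare addresses in the range is cheap and saves you from resizing the pool when you add a host later.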

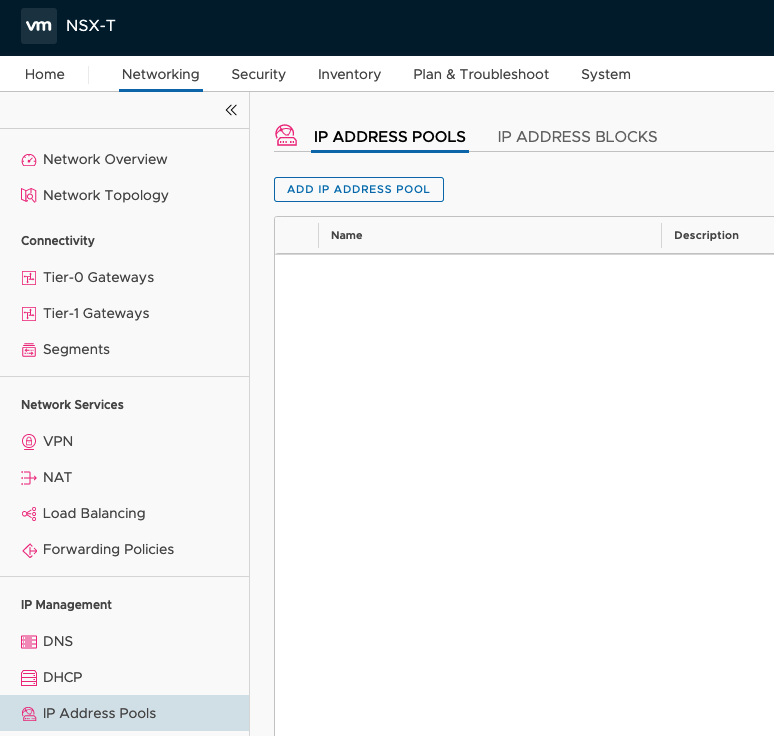

These IP Pools can be set up as DHCP or Static. Since this is a small lab we’ll walk through using static IP Addresses. To begin, navigate to the Networking tab and click IP Address Pools in the NSX Manager portal.

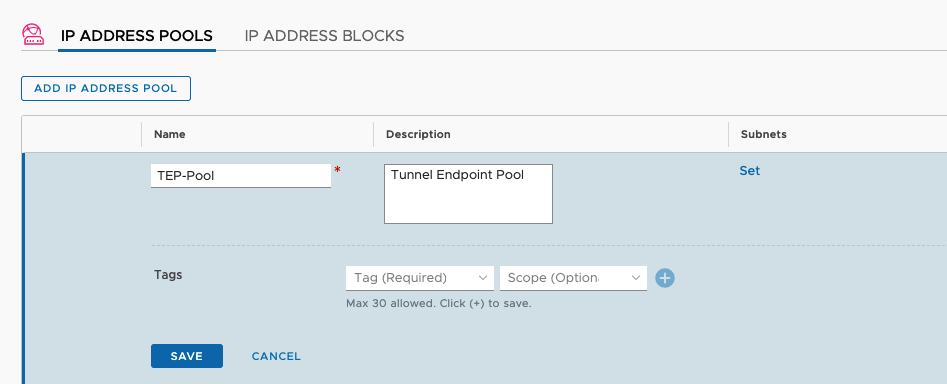

Click the ADD IP ADDRESS POOL button and then give the new pool a name and a description. Then click the Set hyperlink under subnets.

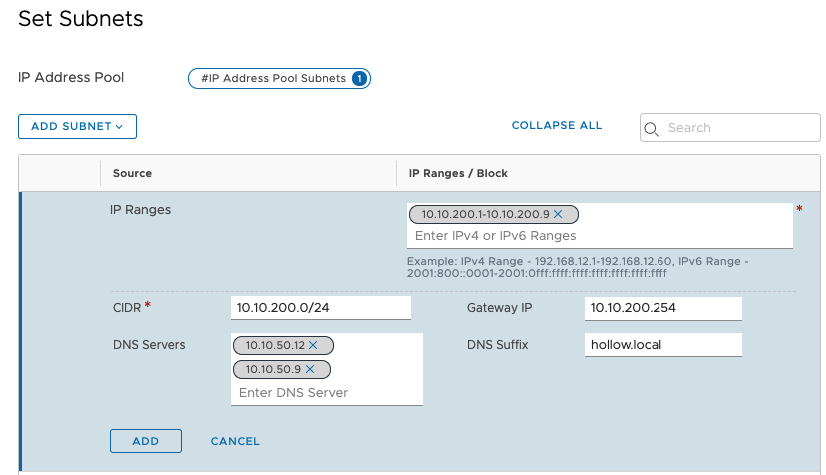

On the subnets setup screen you can add your pool IPs. For this I’ll use a range of IP Addresses in my 10.10.200.0/24 network which is my VLAN 200 NSX_TEP network. Add your range and click Apply.
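
If you would rather script this step than click through it, here is a hedged sketch of building the request body for a static subnet. The field names (`IpAddressPoolStaticSubnet`, `allocation_ranges`, `cidr`) are what I understand the NSX-T Policy API to expect, so treat them as assumptions and check the API guide for your version. The range shown is a hypothetical example, and the script only builds and validates the payload; it does not talk to NSX Manager.

```python
import ipaddress
import json


def static_subnet_payload(cidr: str, start: str, end: str) -> dict:
    """Build a static-subnet body after checking the range fits inside the CIDR."""
    network = ipaddress.ip_network(cidr)
    first = ipaddress.ip_address(start)
    last = ipaddress.ip_address(end)
    for addr in (first, last):
        if addr not in network:
            raise ValueError(f"{addr} is outside {cidr}")
    if last < first:
        raise ValueError("range end is before range start")
    return {
        # Assumed Policy API field names; verify against your NSX-T version.
        "resource_type": "IpAddressPoolStaticSubnet",
        "cidr": cidr,
        "allocation_ranges": [{"start": start, "end": end}],
    }


# VLAN 200 NSX_TEP network from this post; the range itself is hypothetical.
subnet = static_subnet_payload("10.10.200.0/24", "10.10.200.10", "10.10.200.20")
print(json.dumps(subnet, indent=2))
```

Validating the range locally catches the most common mistake, a typo that puts the start or end address outside the TEP network.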

Create a Transport Zone

Now it’s time to set up a Transport Zone. A Transport Zone is a network that will, you guessed it, transport packets between nodes. And guess where those packets will land? Yep, on the Tunnel Endpoints. The way I think about a Transport Zone is that it’s a grouping of hosts that are participating in NSX networks.

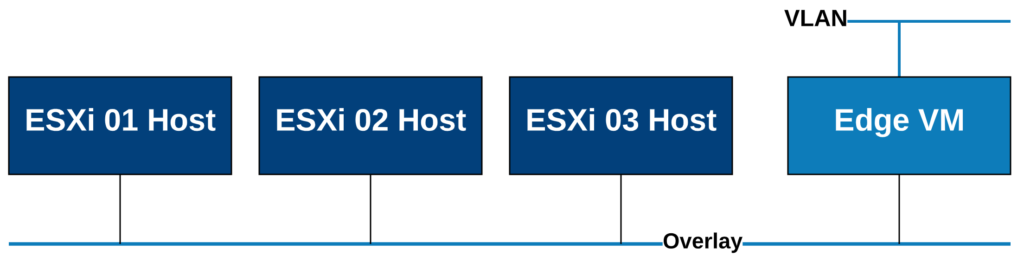

We have two types of Transport Zones. VLAN and Overlay.

Overlay - These are the networks created by NSX, and they carry the Geneve-encapsulated traffic. When you create a new NSX-T segment, the encapsulated packets are passed via this overlay transport zone.

VLAN - These are networks backed by a VLAN used for communicating North/South with the physical network. These are commonly deployed on edge nodes so that the Overlay networks can route out to the physical network via an edge.

To give a graphical example of what we’re doing, see below.

To create our Transport Zones go to System –> Fabric –> Transport Zones in the NSX Manager UI. Click the + button to add a new transport zone. NOTE: there may be default zones created already. I’m ignoring those in my setup and creating my own.

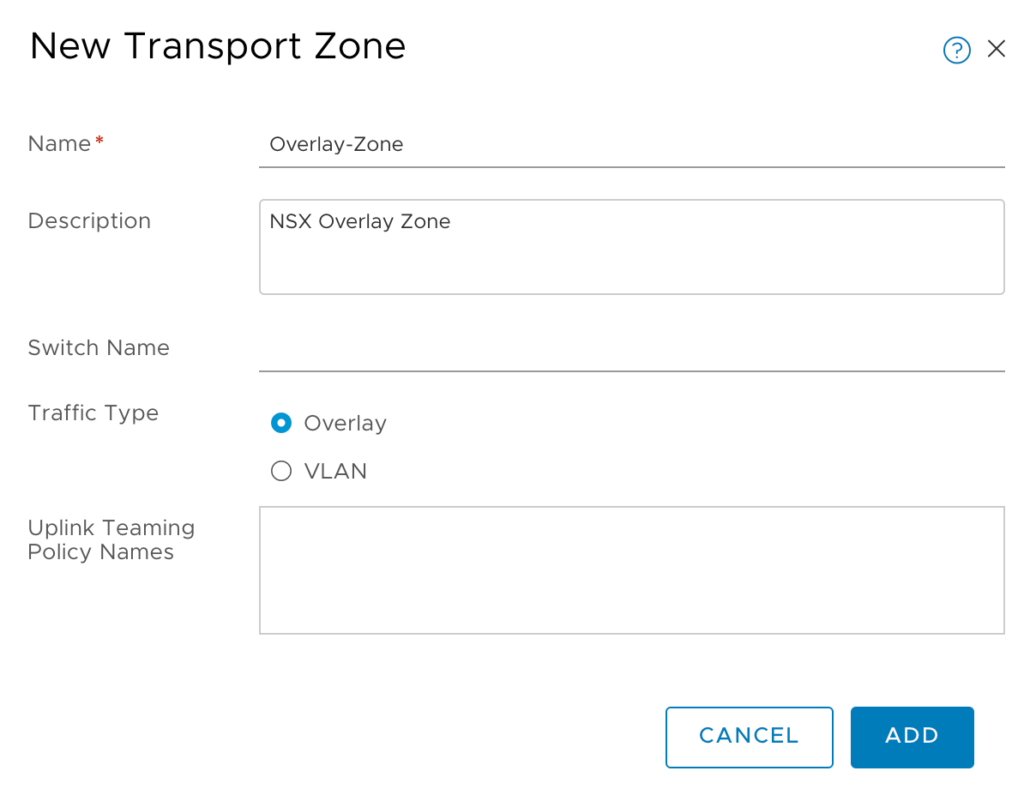

First I’ll create the Overlay Zone. Give it a name, description and a switch name. Then select the type of zone, in my case Overlay. Click Add.

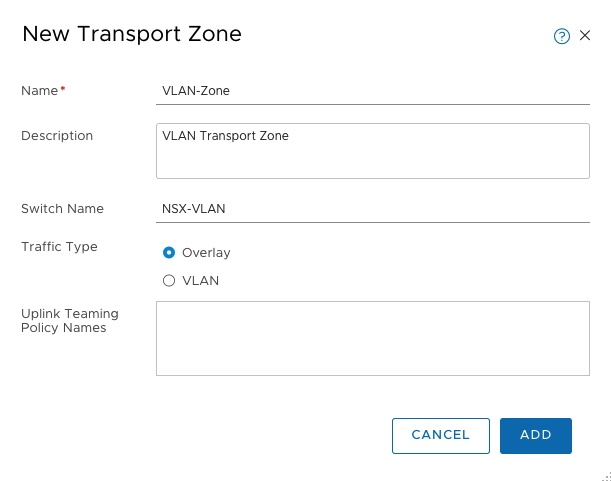

Create another zone, but this time make it a VLAN zone.
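
For anyone automating the two zones, the sketch below builds one request body per zone. I am assuming the NSX-T manager API fields `display_name`, `transport_type`, and `host_switch_name` here, and the zone and switch names are placeholders I made up for illustration. Nothing is sent to NSX Manager; the code just produces the bodies.

```python
import json

VALID_TYPES = {"OVERLAY", "VLAN"}


def transport_zone_payload(name: str, transport_type: str, switch_name: str,
                           description: str = "") -> dict:
    """Build a transport zone body, rejecting anything but the two zone types."""
    if transport_type not in VALID_TYPES:
        raise ValueError(f"transport_type must be one of {sorted(VALID_TYPES)}")
    return {
        # Assumed manager API field names; verify against your NSX-T version.
        "display_name": name,
        "description": description,
        "transport_type": transport_type,
        "host_switch_name": switch_name,
    }


# Hypothetical names; one Overlay zone and one VLAN zone, as in the steps above.
zones = [
    transport_zone_payload("lab-overlay-tz", "OVERLAY", "lab-nvds", "Geneve overlay traffic"),
    transport_zone_payload("lab-vlan-tz", "VLAN", "lab-nvds", "North/South VLAN traffic"),
]
print(json.dumps(zones, indent=2))
```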

At this point we have enough transport zones to continue. Let’s build the profiles our transport nodes will use.

Uplink Profiles

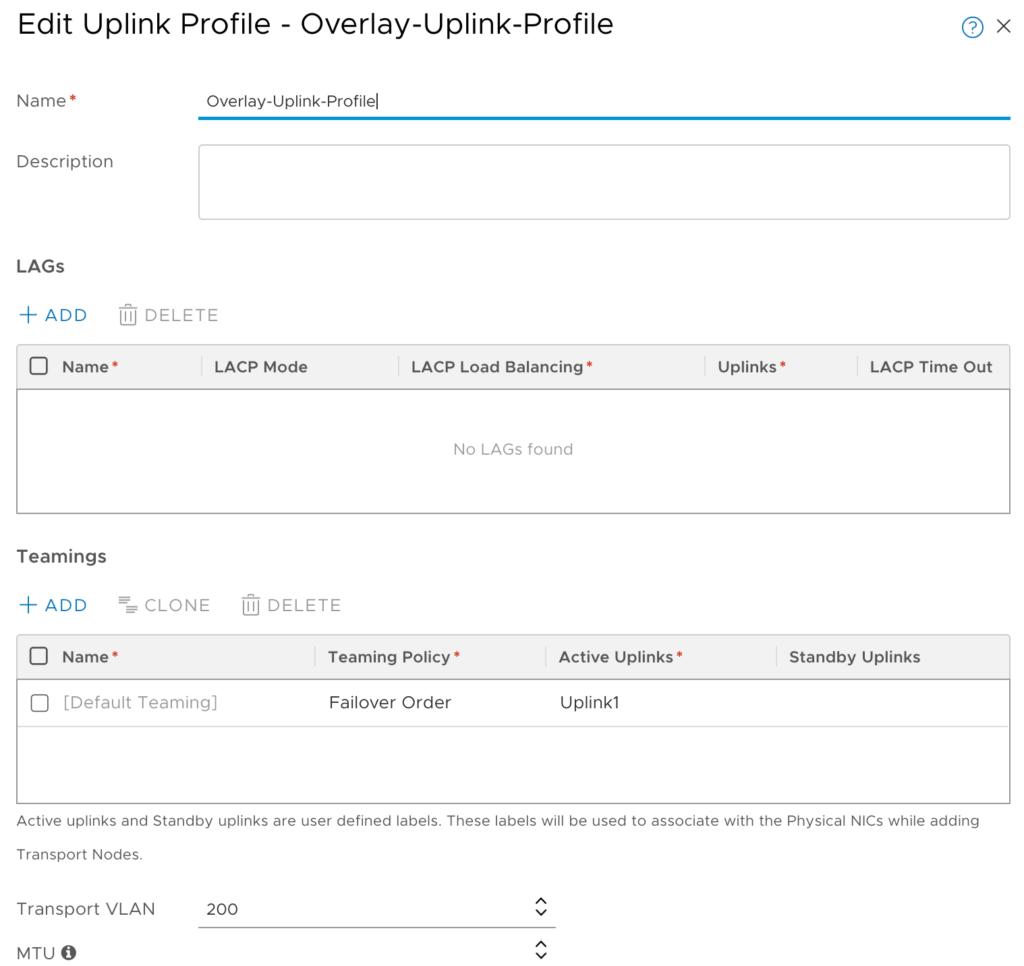

Uplink Profiles give you a way to set your teaming policies and uplinks for any of the transport nodes you’ll be creating. Since this is a lab, the default uplink profiles might not be the best fit. I’m using a single NIC, which you should not do in a production environment, so I’ll create a custom uplink profile.

In the NSX Manager go to System –> Fabric –> Profiles and then click the + under the Uplink Profiles page.

You can see from my screenshot below that I’ve given it a name and added a single NIC to the active uplinks. That NIC is named vmnic1, which is the vmnic on my distributed switch. My overlay network is on VLAN 200, so the Transport VLAN field needs to be set to 200. Save your configuration.

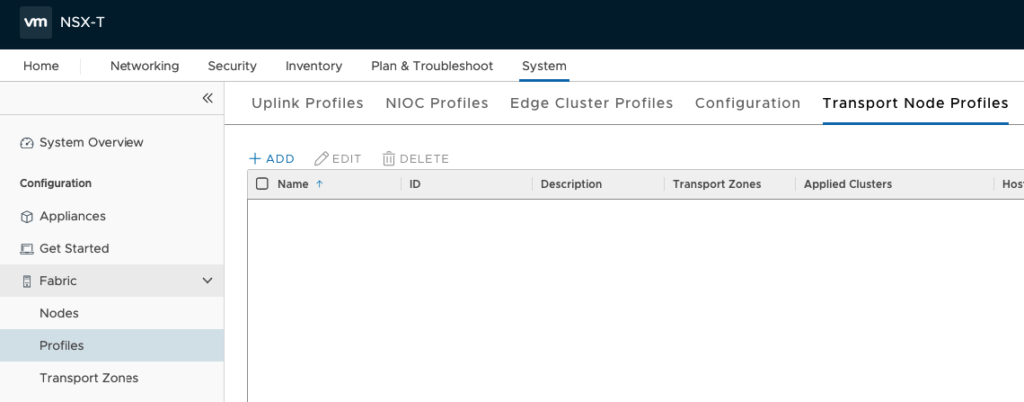
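
The same uplink profile can be described as a request body. This is a minimal sketch, assuming the manager API's `UplinkHostSwitchProfile` resource with `teaming` and `transport_vlan` fields. The `FAILOVER_ORDER` teaming policy is my assumption for a single-NIC lab, and the profile name is a placeholder. Check the field names against the API guide for your release before using it.

```python
import json
from typing import List


def uplink_profile_payload(name: str, active_uplinks: List[str], transport_vlan: int) -> dict:
    """Build an uplink profile body with a simple failover-order teaming policy."""
    if not active_uplinks:
        raise ValueError("at least one active uplink is required")
    if not 0 <= transport_vlan <= 4094:
        raise ValueError("transport VLAN must be between 0 and 4094")
    return {
        # Assumed manager API field names; verify against your NSX-T version.
        "resource_type": "UplinkHostSwitchProfile",
        "display_name": name,
        "teaming": {
            "policy": "FAILOVER_ORDER",  # assumption: not stated in this post
            "active_list": [
                {"uplink_name": uplink, "uplink_type": "PNIC"} for uplink in active_uplinks
            ],
        },
        "transport_vlan": transport_vlan,
    }


# Single active uplink named vmnic1 and Transport VLAN 200, as in this post.
profile = uplink_profile_payload("lab-single-nic-uplink", ["vmnic1"], 200)
print(json.dumps(profile, indent=2))
```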

Transport Node Profile

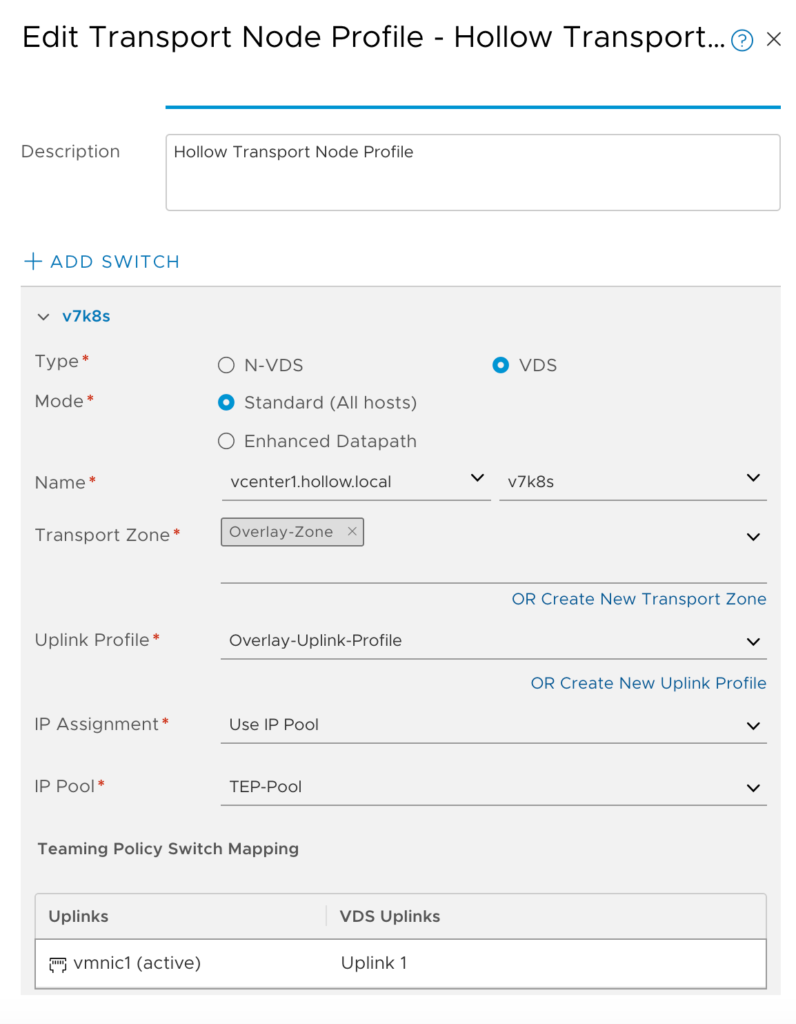

The transport node profile is used to provide configuration for each of the ESXi nodes. The profile specifies which NICs on the nodes need to be configured for the VDS switch. It also specifies the IP Addresses assigned for the TEP on this switch.

Navigate to the System –> Fabric –> Profiles page. Then click +ADD.

Give the profile a name and description. Then click VDS as the switch type. You’ll then select your compute manager and the name of the VDS. Then select an uplink profile from the list. I’ve chosen the Overlay profile from above. Under IP Assignment select Use IP Pool and then under IP Pool, select the TEP Pool we created earlier.

Lastly, in the uplinks, you must specify an ESXi Physical NIC that the VDS switch will use as a physical uplink. In my lab I have Uplink1 for my uplink NIC on the VDS.

NOTE: If your ESXi hosts are not uniform, each one can be configured differently, but you would need to configure each node individually instead of as a full cluster. Transport Node Profiles make this a snap as long as you’ve got uniform infrastructure (meaning the same vmnic is used on each ESXi host in your Transport Zone).
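
A quick way to decide whether a single Transport Node Profile will fit is to check that every host actually has the NIC the profile expects. The sketch below does that against a hand-written inventory. The host names and NIC lists are invented for illustration, so in practice you would feed it whatever your own inventory tooling reports.

```python
from typing import Dict, List, Set


def hosts_missing_nic(host_nics: Dict[str, Set[str]], required_nic: str) -> List[str]:
    """Return the hosts (sorted by name) that do not have the required vmnic."""
    return sorted(host for host, nics in host_nics.items() if required_nic not in nics)


# Hypothetical inventory: the third host uses a different vmnic.
inventory = {
    "esxi-01": {"vmnic0", "vmnic1"},
    "esxi-02": {"vmnic0", "vmnic1"},
    "esxi-03": {"vmnic0", "vmnic2"},
}

missing = hosts_missing_nic(inventory, "vmnic1")
if missing:
    print("Configure these hosts individually:", ", ".join(missing))
else:
    print("All hosts are uniform; a Transport Node Profile will work.")
```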

We’re prepared to configure our nodes now. Let’s push the configurations down to the nodes to prep them for use.

Configure Transport Nodes

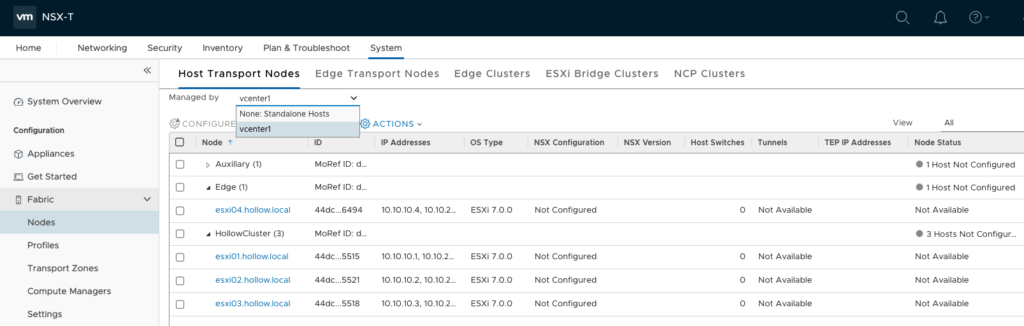

Now that we’ve got a profile, we’ll go to System –> Fabric –> Nodes. Under the Managed by drop down, select your Compute Resource (vCenter). Click +ADD.

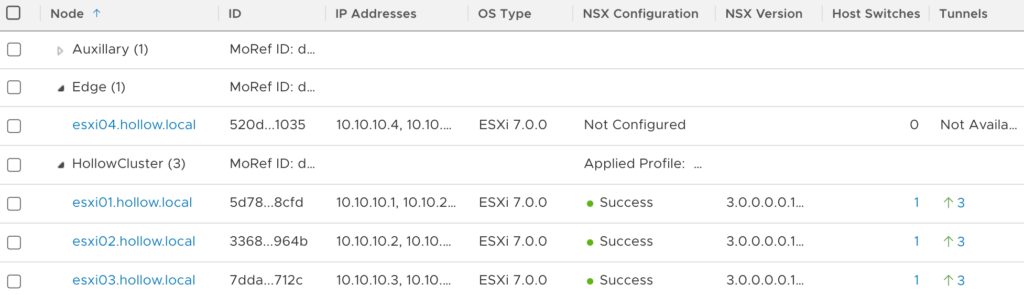

You should see your list of clusters. I’ve expanded HollowCluster cluster and you can see the nodes are not configured. Select the cluster that will be configured with your transport node profile created above.

NOTE: You do not need to configure the edge ESXi host, just the workload nodes.

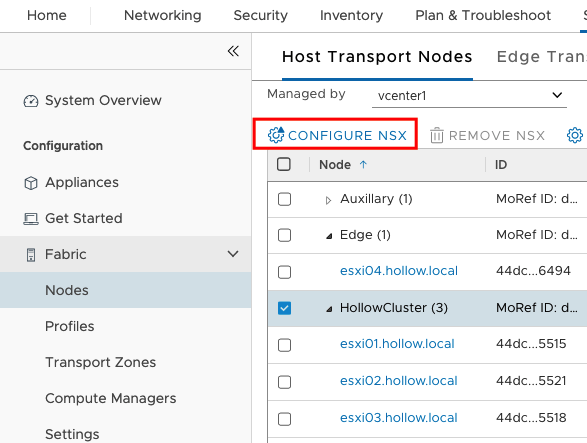

Click the CONFIGURE NSX button.

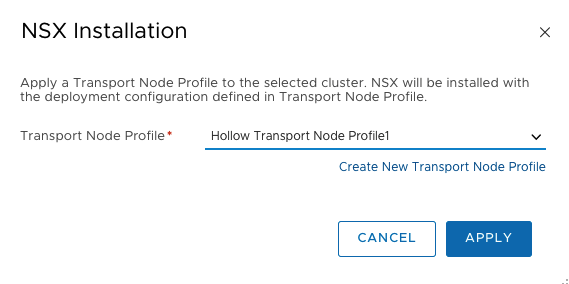

Select the Transport Node Profile we created earlier and then click APPLY.

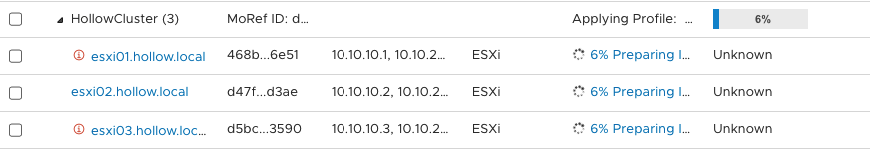

NSX will now begin pushing the configuration to your ESXi hosts.

When complete you should see success listed next to each of the nodes in the cluster.

Summary

In this post we created a TEP IP Pool, Transport Zones, an Uplink Profile, and a Transport Node Profile, and then used them to configure the virtual switches and NICs on the ESXi nodes. In the next post we’ll move to our Edge Nodes.