This post describes the lab environment we’ll use to build our vSphere 7 with Kubernetes lab, along with the prerequisites you’ll need to be aware of before starting. This is not the only topology that works for vSphere 7 with Kubernetes, but it is a robust homelab that mimics many production deployments except for the HA features. For example, we’ll install only a single NSX Manager in the lab, where a production environment would have three.

This post will describe my vSphere home lab environment so that you can correlate what I’ve built with your own environment.

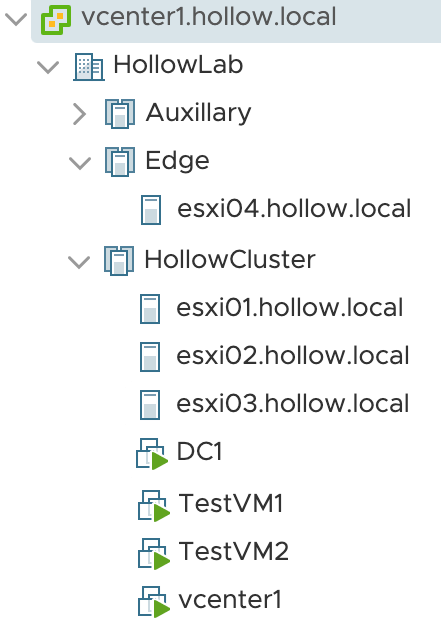

vSphere Environment - Cluster Layout

My home lab has three clusters in it. Each consists of ESXi hosts on version 7, and all of them are managed by a single vCenter server running the version 7 GA build.

The lab has a single auxiliary cluster that I use to run non-essential VMs that I don’t want hogging resources from my main cluster. We’ll ignore that cluster for this series. The other two clusters will be important.

I have a three-node hollowlab cluster that I’ll use to host my workloads and general purpose VMs. This cluster must have vSphere HA turned on and VMware Distributed Resource Scheduler (DRS) enabled in Fully Automated mode, which means you’ll need to have vMotion working between the nodes in your workload cluster. HA and DRS are requirements for vSphere 7 with Kubernetes clusters. More details about size/speed/capacities are found here.
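As a quick way to keep those two cluster requirements in view, here is a minimal sketch of a checklist in Python. The `ClusterSettings` class and the `missing_prerequisites` function are hypothetical names made up for this example; nothing here talks to vCenter, so you would fill in the values by hand from the cluster's settings page.

```python
from dataclasses import dataclass


@dataclass
class ClusterSettings:
    """Hypothetical, hand-filled description of a workload cluster."""
    name: str
    ha_enabled: bool
    drs_enabled: bool
    drs_automation: str  # e.g. "manual", "partiallyAutomated", "fullyAutomated"


def missing_prerequisites(cluster: ClusterSettings) -> list:
    """Return the requirements from this post that the cluster does not meet."""
    problems = []
    if not cluster.ha_enabled:
        problems.append(f"{cluster.name}: vSphere HA must be turned on")
    if not cluster.drs_enabled:
        problems.append(f"{cluster.name}: DRS must be enabled")
    elif cluster.drs_automation != "fullyAutomated":
        problems.append(f"{cluster.name}: DRS must be in Fully Automated mode")
    return problems


if __name__ == "__main__":
    lab = ClusterSettings("hollowlab", ha_enabled=True, drs_enabled=True,
                          drs_automation="fullyAutomated")
    for line in missing_prerequisites(lab) or ["all prerequisites met"]:
        print(line)
```

An empty list means both requirements described above are satisfied for that cluster.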

Lastly, I have a third cluster, named edge, with a single ESXi host that will run my NSX-T Edge virtual machine. This VM is needed to bridge traffic between the physical network and the overlay tunnels created by NSX. You can deploy your edge VM within your workload cluster if you need to, but I highly recommend running edge nodes on their own hardware. Edge nodes can become network hotspots, since overlay traffic has to flow through this VM for North/South traffic and load balancing.

Here is a look at my cluster layout for reference.

Physical Switches

In my lab I’m running an HP switch that has some layer three capabilities. To be honest, it doesn’t matter what you’re running, but you’ll need to be able to create VLANs, and route between them. However you want to do this is fine, but if you follow along with this series, you’ll need VLANs for the purposes in the table below. I’ve listed my VLAN numbers and VLAN Interfaces for each of the networks so you can compare with your own.

NOTE: I see you judging me for my gateway addresses being .254 instead of .1. Just let it go.

| **VLAN Purpose** | **VLAN #** | **Interface IP** |
| --- | --- | --- |
| Management | 150 | 10.10.50.254/24 |
| Tunnel Endpoint (TEP) | 200 | 10.10.200.254/24 |
| NSX Edge VLAN | 201 | 10.10.201.254/24 |

v7wk8s VLANS
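If you are adapting this plan to your own numbering, it can help to sanity-check it before touching the switch. The sketch below uses Python's standard `ipaddress` module to look for duplicate VLAN IDs, overlapping subnets, and gateway addresses that are not usable host addresses. The `VLAN_PLAN` dictionary holds my values from the table; the `check_vlan_plan` function is a hypothetical helper written for this post, not part of any VMware tooling.

```python
import ipaddress

# VLAN plan from the table above: purpose -> (VLAN ID, VLAN interface address).
VLAN_PLAN = {
    "Management": (150, "10.10.50.254/24"),
    "Tunnel Endpoint (TEP)": (200, "10.10.200.254/24"),
    "NSX Edge VLAN": (201, "10.10.201.254/24"),
}


def check_vlan_plan(plan):
    """Return a list of problems found in the plan (empty means none found)."""
    problems = []
    seen_ids = {}   # VLAN ID -> first purpose that used it
    networks = {}   # purpose -> subnet derived from the interface address
    for purpose, (vlan_id, interface) in plan.items():
        # 802.1Q leaves VLAN IDs 1 through 4094 available for use.
        if not 1 <= vlan_id <= 4094:
            problems.append(f"{purpose}: VLAN {vlan_id} is outside 1-4094")
        if vlan_id in seen_ids:
            problems.append(
                f"{purpose}: VLAN {vlan_id} is already used by {seen_ids[vlan_id]}")
        seen_ids.setdefault(vlan_id, purpose)

        iface = ipaddress.ip_interface(interface)
        net = iface.network
        # The gateway must be a host address, not the network or broadcast address.
        if iface.ip in (net.network_address, net.broadcast_address):
            problems.append(f"{purpose}: {iface.ip} is not a usable host address")
        for other, other_net in networks.items():
            if net.overlaps(other_net):
                problems.append(f"{purpose}: {net} overlaps {other} ({other_net})")
        networks[purpose] = net
    return problems


if __name__ == "__main__":
    for line in check_vlan_plan(VLAN_PLAN) or ["no problems found"]:
        print(line)
```

Swap in your own VLAN numbers and interface addresses to check your plan the same way.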

I have trunked (802.1q) the VLANs down to my ESXi hosts so that they can be used with my virtual switches. You will need to configure your switch ports so that the ESXi hosts can send tagged packets on these VLANs.

Now, for any overlay networks, you will need to ensure that you have Jumbo Frames enabled. This means that your interfaces, switches, and distributed virtual switches must accept frames with an MTU of 1600 or larger. This is an NSX requirement. Be sure to enable jumbo frames across your infrastructure.
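When you test the MTU with a don't-fragment ping (for example `vmkping -d -s <size> <address>` on an ESXi host), the size you pass is the ICMP payload, not the full packet. A small Python sketch of that arithmetic follows, assuming IPv4 with no IP options; the function name `max_ping_payload` is made up for this example.

```python
IPV4_HEADER_BYTES = 20  # IPv4 header without options
ICMP_HEADER_BYTES = 8   # ICMP echo request header


def max_ping_payload(mtu: int) -> int:
    """Largest ICMP payload that fits in one unfragmented IPv4 packet of this MTU."""
    overhead = IPV4_HEADER_BYTES + ICMP_HEADER_BYTES
    if mtu < overhead:
        raise ValueError(f"MTU {mtu} is smaller than the {overhead}-byte header overhead")
    return mtu - overhead


if __name__ == "__main__":
    for mtu in (1500, 1600, 9000):
        print(f"MTU {mtu}: ping payload up to {max_ping_payload(mtu)} bytes")
```

For the 1600 MTU minimum this works out to a 1572-byte payload. If a ping of that size with the don't-fragment flag set fails, something in the path is still at a smaller MTU.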

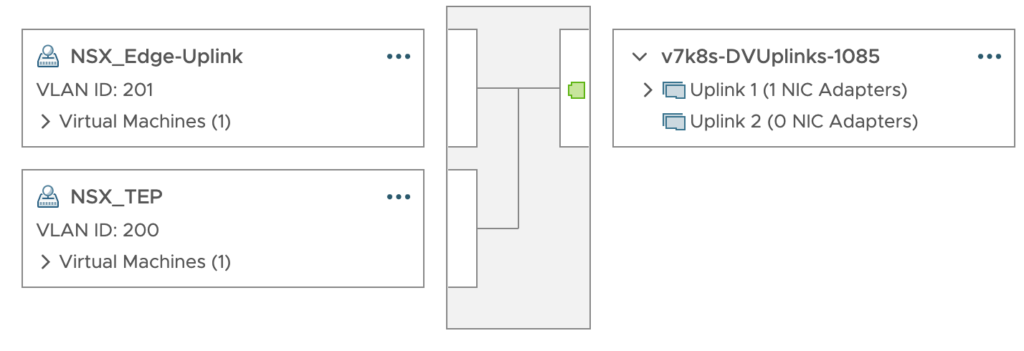

vSphere Virtual Switches

There is more than one way to set up the NSX-T virtual switching environment. For my lab, I’ve set up management, vMotion, vSAN, and NFS networks on some virtual switches. For those items, it doesn’t matter what kind of switches they’re on. They could be on standard switches, and they are probably portgroups you’ve already set up in any vSphere environment.

For the NSX components, I’ve deployed a single vSphere 7 distributed switch (VDS) across both my workload cluster and the edge cluster. I’ve created two portgroups on the VDS which will be used by the edge nodes deployed later in the series.

From the screenshot below, you can see I’ve created an NSX_Edge-Uplink portgroup and an NSX_TEP portgroup. Each of these portgroups is VLAN tagged with the VLANs shown in the table from the previous section. You can have more portgroups on this switch if you’d like. As you create new NSX-T “segments” they will appear as portgroups on this switch.

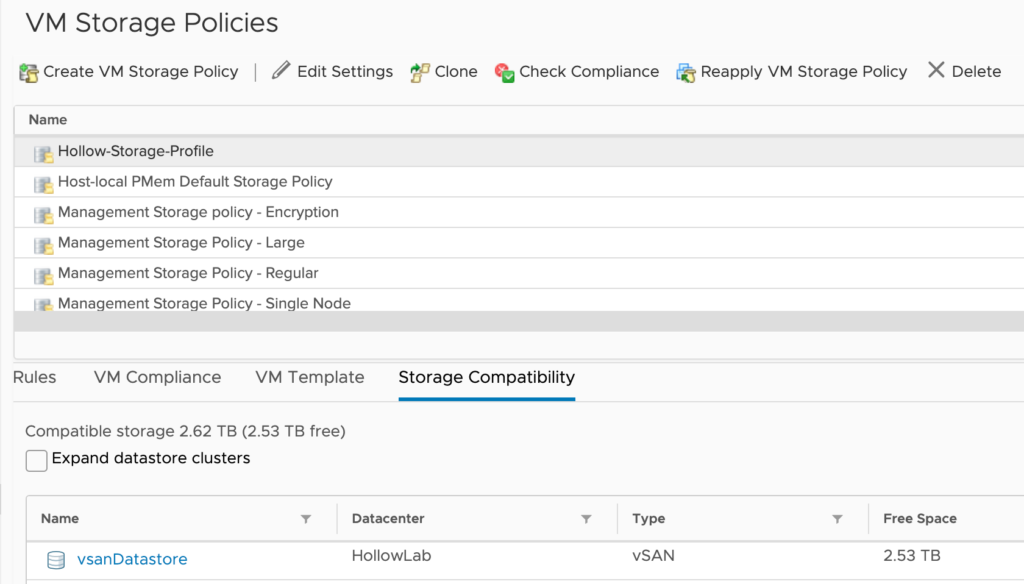

Storage

This lab uses VMware vSAN to store the workload virtual machines, and therefore the Supervisor Cluster VMs as well. You should be able to use other storage solutions, but you’ll need to have a VMware storage policy that works with your datastores. I’ve created a storage policy named Hollow-Storage-Profile that will be used for my build.

Licenses

You will need to have some advanced licenses for some of the components. Specifically, NSX-T requires an NSX-T Data Center Advanced or higher license. Also, the ESXi hosts will need a VMware vSphere 7 Enterprise Plus with Add-on for Kubernetes license for proper configuration.