It’s 2020, and I’ve had plenty of time at home thanks to social distancing and the global pandemic. I’ve also been putting off purchasing any new home gear, thinking that a cloud-only model might be my next lab, but it isn’t yet. Because of the work I’ve been doing with vSphere 7 and Kubernetes clusters, I couldn’t avoid updating my hardware any longer. Here’s the updated home lab for any enthusiasts.

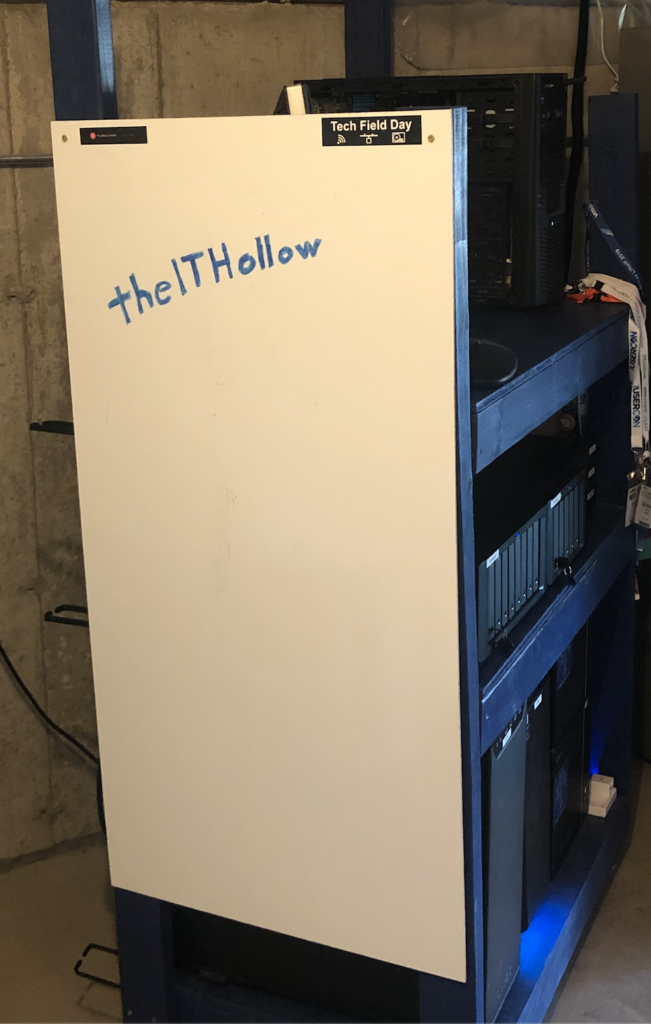

Rack

The rack is custom made and has been in use for a while now. My lab sits in the basement on a concrete floor, so I built a wooden set of shelves on casters that I can roll out of the way when needed. The UPS sits on a shelf so that I can unplug it from the wall and move the lab. As long as I have a long enough Ethernet cable, I can wheel my lab around for as long as the UPS holds out. On one side I put a whiteboard so I could draw something out if I was stuck. I don’t use it that often, but I like that it covers the side of the rack.

On the back of the shelves, I added some cable management panels.

Power

As mentioned, I have a UPS powering my lab. It’s a CyberPower 1500 AVR. I’m currently running around 500 watts for the lab under normal load. I’ve mounted a large power strip along the side and a few small strips on each shelf. I also bought some 6-inch IEC cables, which really cut down the cable clutter behind the lab.
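For a sense of what a steady 500 W draw adds up to over a month, here is a quick back-of-the-envelope sketch. The 30-day month, the around-the-clock runtime, and the electricity price are assumptions for illustration, not measured figures.

```python
def monthly_energy_kwh(load_watts: float, hours_per_day: float = 24.0, days: int = 30) -> float:
    """Energy used in a month, in kWh, for a constant load."""
    return load_watts * hours_per_day * days / 1000.0


def monthly_cost(load_watts: float, price_per_kwh: float) -> float:
    """Rough monthly cost; price_per_kwh is whatever your utility charges."""
    return monthly_energy_kwh(load_watts) * price_per_kwh


if __name__ == "__main__":
    lab_load_watts = 500        # normal load quoted above
    assumed_price = 0.12        # ASSUMPTION: $/kWh, varies widely by region

    print(f"{monthly_energy_kwh(lab_load_watts):.0f} kWh per month")
    print(f"about ${monthly_cost(lab_load_watts, assumed_price):.2f} per month")
```

Swapping in a lower wattage shows roughly how much powering down idle hosts would save.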

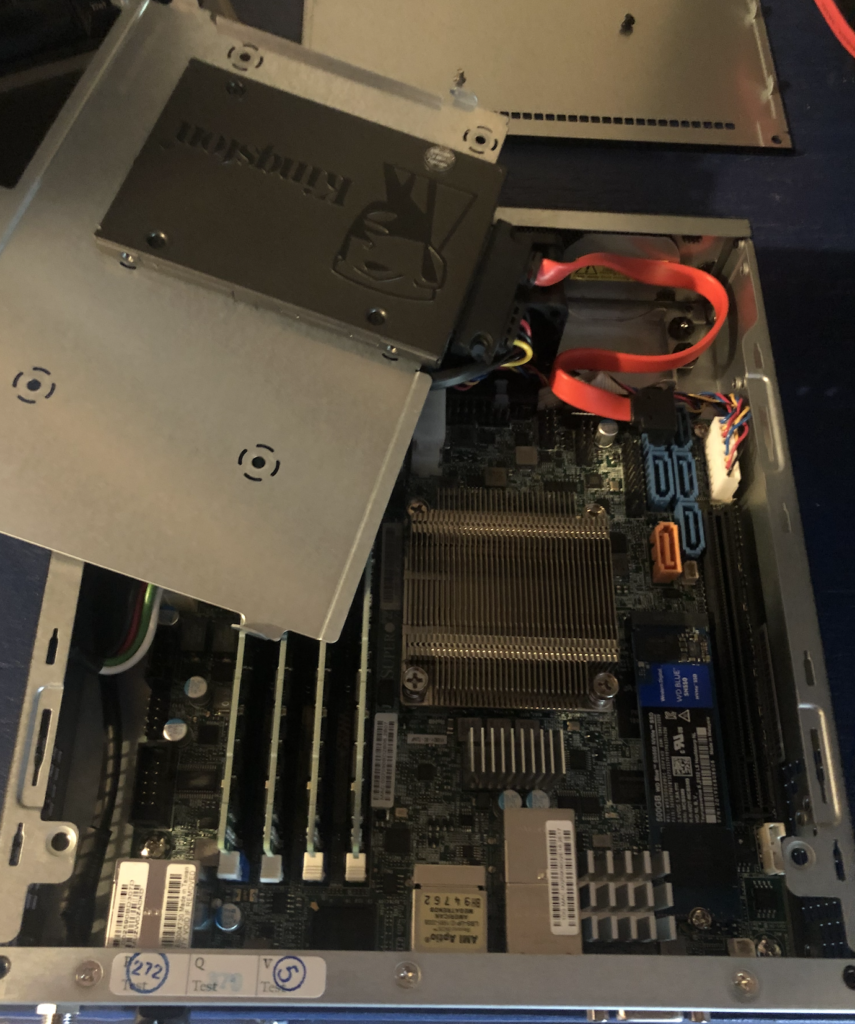

Compute

I bought new compute so that I could run the vSphere 7 stack, complete with NSX-T, Tanzu Kubernetes Grid, and anything else you can think of. I chose three new E200-8d Supermicro servers with a six-core Intel processor and 128 GB of memory.

For local storage I use a 64 GB USB drive for the ESXi host OS disk and I added a 1 TB SSD and a 500 GB NVMe drive. These drives are added for capacity and caching tiers for VMware vSAN. There isn’t a lot of room for disk drives in this model, but they sure are compact enough to fit on a shelf.

These servers have two 10GbE NICs, two 1GbE NICs, and an IPMI port for out-of-band management. I wanted to be sure to have a way to power the server on and off, load images into a virtual CD-ROM, etc. I was disappointed to find out that Supermicro now charges for a license on top of the motherboard for these features. I ended up paying for the licenses, but will be sure to remember this the next time I go server shopping.

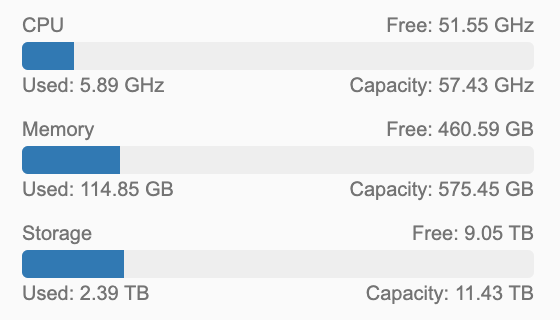

I have two other servers built from spare parts I had lying around. One is a six-core, the other a four-core, and between them they add another 192 GB of RAM. These are also running vSphere 7 but in different clusters. The totals in vCenter are shown below.
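As a rough cross-check on those totals, the physical cores and memory can be tallied by hand. This sketch assumes the 128 GB figure applies to each of the three Supermicro servers, which the text does not state explicitly, and it counts physical cores only, so it will not match the GHz-based CPU numbers vCenter reports.

```python
# ASSUMPTION: each of the three new servers has six cores and 128 GB.
new_servers = 3
new_cores_each = 6
new_memory_gb_each = 128

# The two spare-parts servers: six and four cores, 192 GB between them.
spare_cores = [6, 4]
spare_memory_gb_total = 192

total_cores = new_servers * new_cores_each + sum(spare_cores)
total_memory_gb = new_servers * new_memory_gb_each + spare_memory_gb_total

print(f"{total_cores} cores, {total_memory_gb} GB RAM")  # 28 cores, 576 GB RAM
```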

Shared Storage

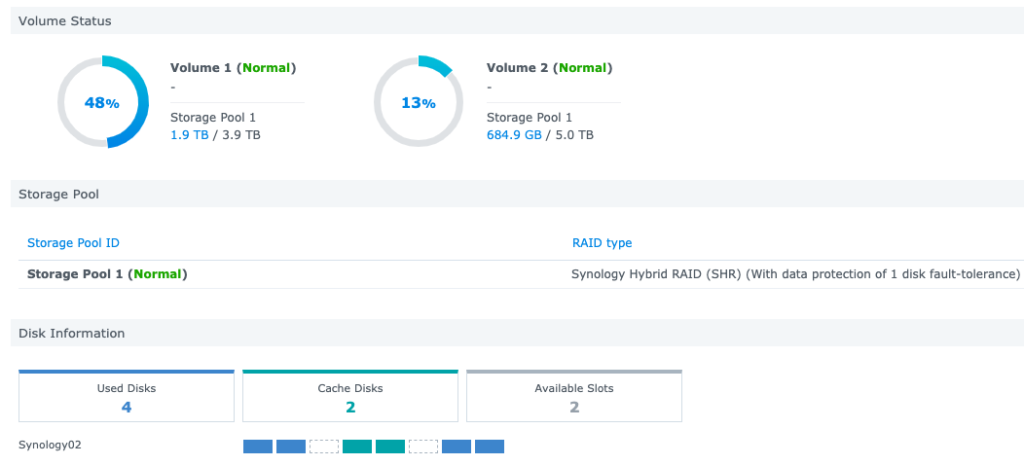

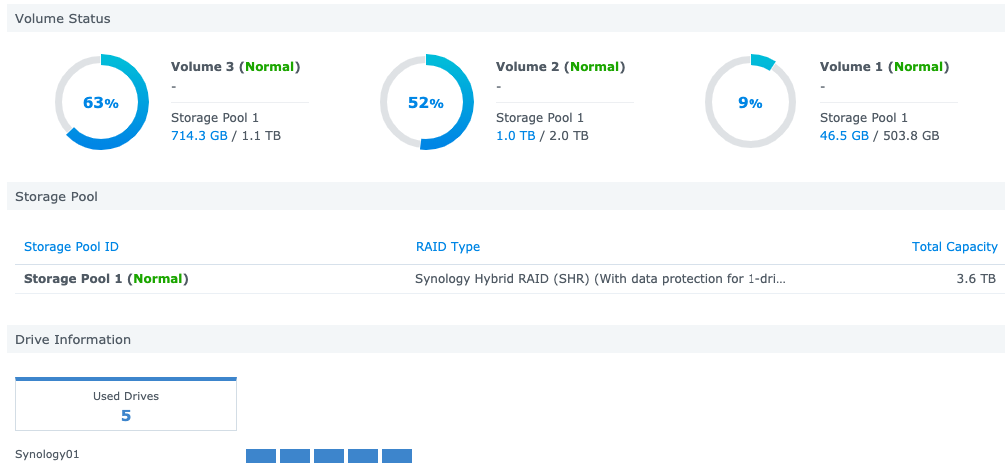

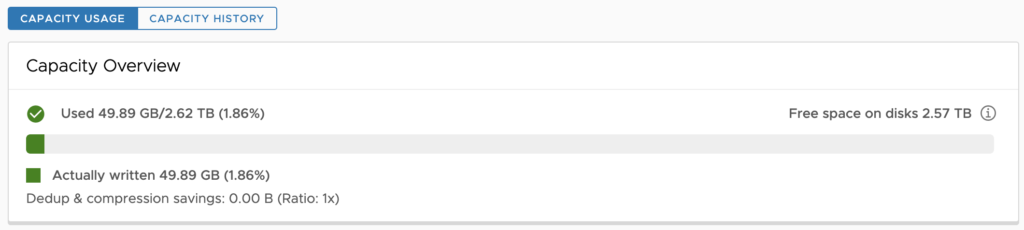

For storage, I have a tiered system. I have an eight-bay Synology array used for virtual machines and file stores. Then I have a secondary Synology used as a backup device. Important information on the large Synology is backed up to the smaller one, and then pushed to Amazon S3 once a month for an offsite copy.

- vSphere Storage Array: Synology DS1815+

  - 8 TB available of spinning disks with dual 256 GB SSDs for caching

- File Storage and Backup Array: Synology DS1513+

  - 3.6 TB available of spinning disk
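The monthly push to S3 could be scripted along these lines. This is only a sketch: the post does not say which tool handles the upload, and the bucket name, key prefix, and file path here are hypothetical. It relies on the boto3 S3 client's `upload_file(Filename, Bucket, Key)` call, and takes the client as a parameter so the key logic can be exercised without AWS credentials.

```python
from datetime import date
from pathlib import Path


def monthly_key(local_path, run_date: date, prefix: str = "offsite") -> str:
    """Build an object key that groups each month's upload under YYYY-MM."""
    return f"{prefix}/{run_date:%Y-%m}/{Path(local_path).name}"


def push_offsite(s3_client, local_path, bucket: str, run_date: date) -> str:
    """Upload one backup archive and return the key it was stored under."""
    key = monthly_key(local_path, run_date)
    s3_client.upload_file(str(local_path), bucket, key)
    return key


if __name__ == "__main__":
    import boto3  # third-party; needs AWS credentials configured

    # HYPOTHETICAL path and bucket, for illustration only.
    stored = push_offsite(
        boto3.client("s3"),
        "/volume1/backups/important.tar.gz",
        "my-homelab-offsite",
        date.today(),
    )
    print(f"uploaded to {stored}")
```

Keying by month keeps each offsite copy separate, so an S3 lifecycle rule could later expire or archive older months.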

For machines I’m building over and over and want fast performance, I have a vSAN datastore with linked clones. This lets me spin up Linux VMs in about 90 seconds from a template. vSAN has become my place for ephemeral data. Sometimes I turn off vSAN if I’m not using it so I can power down ESXi nodes via DPM to cut down on power usage.

Network

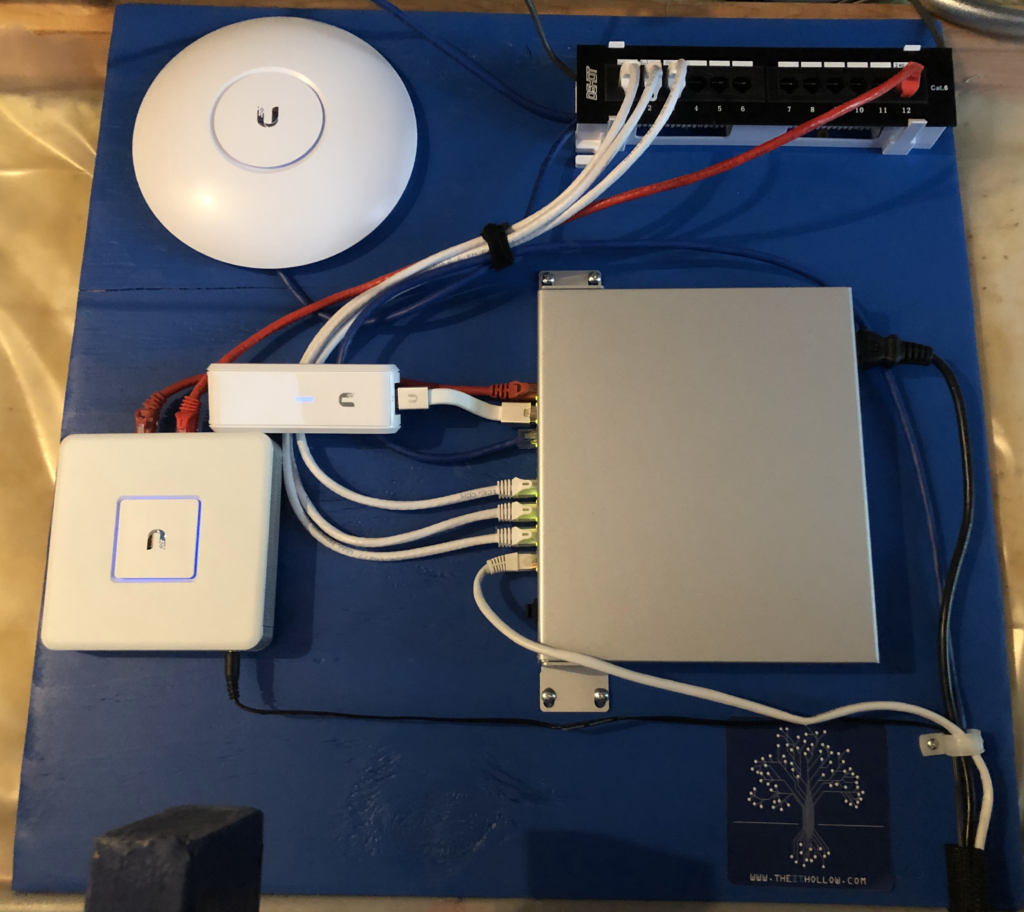

My networking gear hasn’t been updated much. I love the Ubiquiti network devices for wireless. The Edge gateway serves as a perimeter firewall, and a nice gate between my wireless guests and the homelab. This lets me work on the lab even when the PlayStation is running full throttle. :)

I mounted my basement access point, USG and PoE switch to a piece of plywood and mounted it with a patch panel.

- Core Switch: HP v1910-24G Ethernet Switch

- Wireless Switch: Ubiquiti UniFi 8 POE-150W

- Storage/vMotion Switch: Netgear XS708E 10 Gigabit

  - That switch was a gift from fellow vExpert Jason Langer /2016/12/19/unbelievable-gift-home-lab/

- Wireless Firewall: Ubiquiti UniFi Security Gateway

- Wireless: Ubiquiti AC Pro

- Controller: UniFi Cloud Key

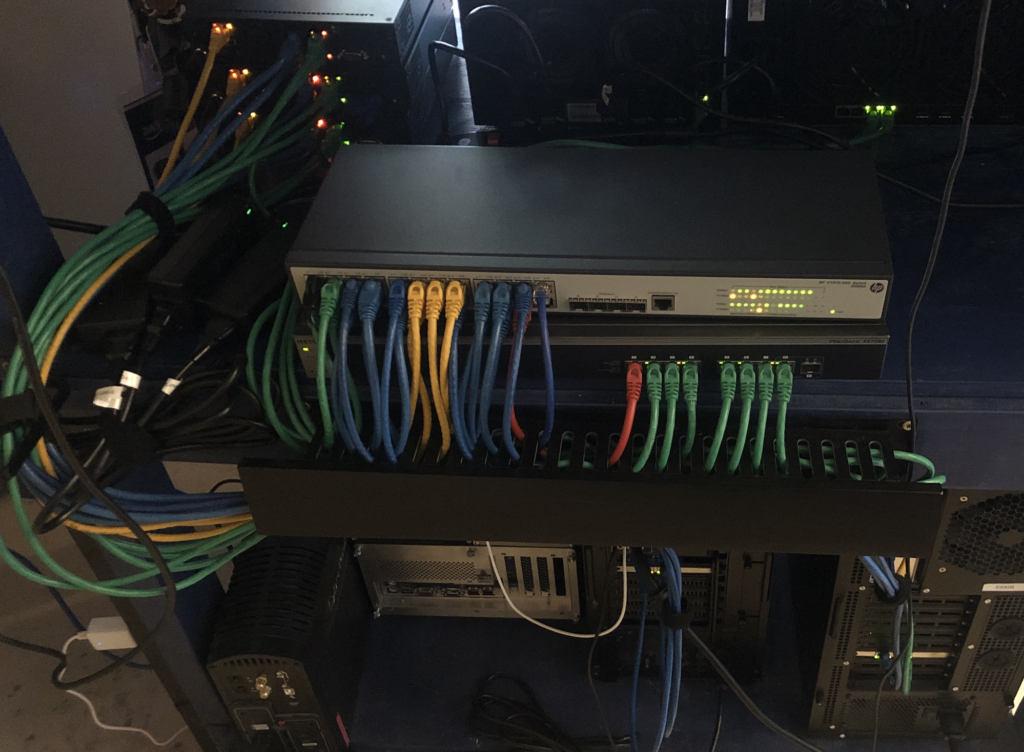

The cables are colored according to purpose.

- Yellow - Management Networks and Out-of-Band access

- Green - Storage and vMotion Networks (10GbE)

- Blue - Trunk ports for virtual machines

- Red - Uplinks

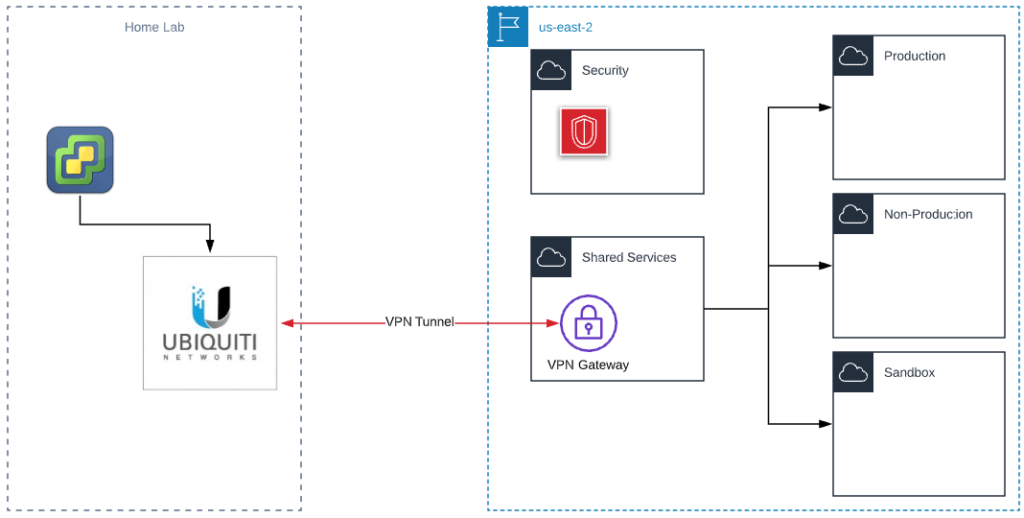

Cloud

I’ve decided to use Amazon as my preferred cloud vendor, mainly because I’ve done much more work there than on Azure. My AWS accounts are configured in a hub-and-spoke model, which mimics a production-like environment for customers.

I use the cloud for backup archival, and just about anything you can think of that my homelab either can’t do or doesn’t have capacity for. I like to use solutions like Route 53 for DNS, so a lot of the time my test workloads still end up in the cloud. Most of the accounts below are empty or have resources that don’t cost money, such as VPCs.
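As an illustration of the Route 53 side, a test workload's DNS record can be created or updated with one API call. The sketch below builds the change batch that boto3's `change_resource_record_sets` expects. The hostname, IP address, and hosted zone ID are placeholders, not values from this lab.

```python
def upsert_a_record_batch(name: str, ip: str, ttl: int = 300) -> dict:
    """Build a Route 53 change batch that creates or updates one A record."""
    return {
        "Comment": "homelab test workload",
        "Changes": [
            {
                "Action": "UPSERT",  # create the record, or overwrite it if present
                "ResourceRecordSet": {
                    "Name": name,
                    "Type": "A",
                    "TTL": ttl,
                    "ResourceRecords": [{"Value": ip}],
                },
            }
        ],
    }


# PLACEHOLDER name and address (192.0.2.0/24 is reserved for documentation).
batch = upsert_a_record_batch("app.lab.example.com", "192.0.2.10")

# Applying it requires boto3 and a real hosted zone ID, for example:
#   import boto3
#   boto3.client("route53").change_resource_record_sets(
#       HostedZoneId="<your-zone-id>", ChangeBatch=batch)
```

Using `UPSERT` rather than `CREATE` means the same call works whether or not the record already exists, which suits VMs that get rebuilt often.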

My overall monthly spend on AWS is around $35, most of which is spent on the VPN tunnel and some DNS records.