If you have been following the series so far, you should have a TKG guest cluster in your lab now. The next step is to show how to deploy a simple application and access it through a web browser. This is a pretty trivial task for most Kubernetes operators, but it’s a good idea to know what’s happening in NSX to make these applications available. We’ll walk through that in this post.

Connect To TKG Cluster and Deploy an Application

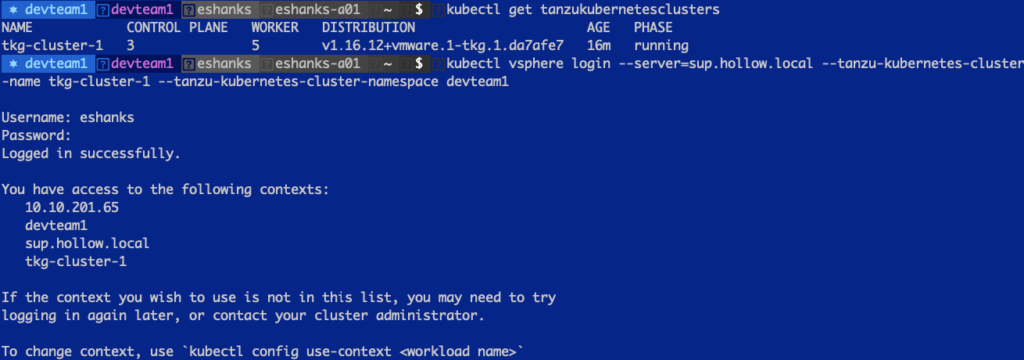

Before we can deploy our applications, let’s make sure we can connect to the cluster as we did in the previous post. Run the following command to authenticate with the Kubernetes cluster.

kubectl vsphere login --server=[SupervisorControlPlane] --tanzu-kubernetes-cluster-name [tkg cluster name] --tanzu-kubernetes-cluster-namespace [Supervisor Namespace]

Once authenticated, set the Kubernetes context to the TKG Cluster.

kubectl config use-context tkg-cluster-1
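Because your kubeconfig may hold more than one context after logging in, a small guard can confirm that kubectl is pointed at the guest cluster before you deploy anything. This is only a sketch: `require_context` is a hypothetical helper name, and `tkg-cluster-1` is simply the context name used in this post.

```shell
# Hypothetical helper: fail unless the active kubectl context matches the
# expected name passed as the first argument.
require_context() {
  expected="$1"
  current="$(kubectl config current-context)"
  if [ "$current" != "$expected" ]; then
    echo "current context is '$current', expected '$expected'" >&2
    return 1
  fi
  echo "using context $current"
}
```

You could then chain it in front of other commands, for example `require_context tkg-cluster-1 && kubectl get nodes`.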

Now you’re ready to run Kubernetes commands to build apps, secrets, expose services, and so on. Let’s start by deploying a test application to the guest cluster.

kubectl run hollowapp --image=theithollow/hugoapp:v1

It might take a moment for the container image to download from Docker Hub, but once it’s done you should be able to see the running pod with this command:

kubectl get pods
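If you would rather not re-run `kubectl get pods` until the status changes, `kubectl wait` can block until the pod reports Ready. A minimal sketch, where `wait_for_pod` is a hypothetical helper name and the 120 second default is an arbitrary choice for a lab:

```shell
# Hypothetical helper: block until the named pod reports Ready, or give up
# after the timeout (second argument, defaulting to 120s).
wait_for_pod() {
  kubectl wait --for=condition=Ready "pod/$1" --timeout="${2:-120s}"
}
```

For the pod in this post that would be `wait_for_pod hollowapp`.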

Expose Applications

Now that there is an application running in our TKG cluster, we need to expose it so that our users can reach it, for example through a web browser.

The simplest way to do this is through an imperative command against the cluster. The command below will create a Kubernetes service of type `LoadBalancer` on port 80.

kubectl expose pod hollowapp --port=80 --target-port=80 --name hollowapp --type=LoadBalancer
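If you prefer to keep the service in a file, the imperative command above can be approximated with a manifest. This is a sketch rather than the exact object `kubectl expose` generates: the file name `hollowapp-service.yaml` is made up for illustration, and the selector assumes the pod carries the `run=hollowapp` label that `kubectl run hollowapp` applies by default.

```shell
# Write a Service manifest roughly equivalent to the `kubectl expose` command.
# Assumption: the pod is labeled run=hollowapp (the default from `kubectl run`).
cat > hollowapp-service.yaml <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: hollowapp
spec:
  type: LoadBalancer
  selector:
    run: hollowapp
  ports:
    - port: 80
      targetPort: 80
      protocol: TCP
EOF
```

Apply it with `kubectl apply -f hollowapp-service.yaml` instead of running the expose command. If your pod has different labels, check them with `kubectl get pod hollowapp --show-labels` and adjust the selector.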

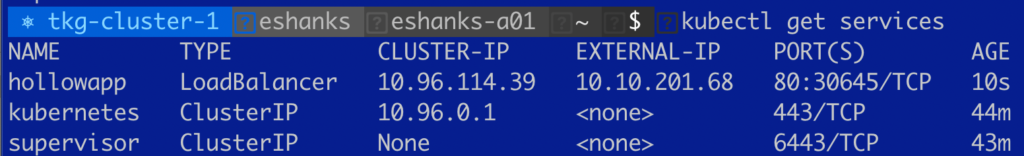

After running the command, let’s take a look at what happened. First, let’s check our services in the Kubernetes cluster by running:

kubectl get services

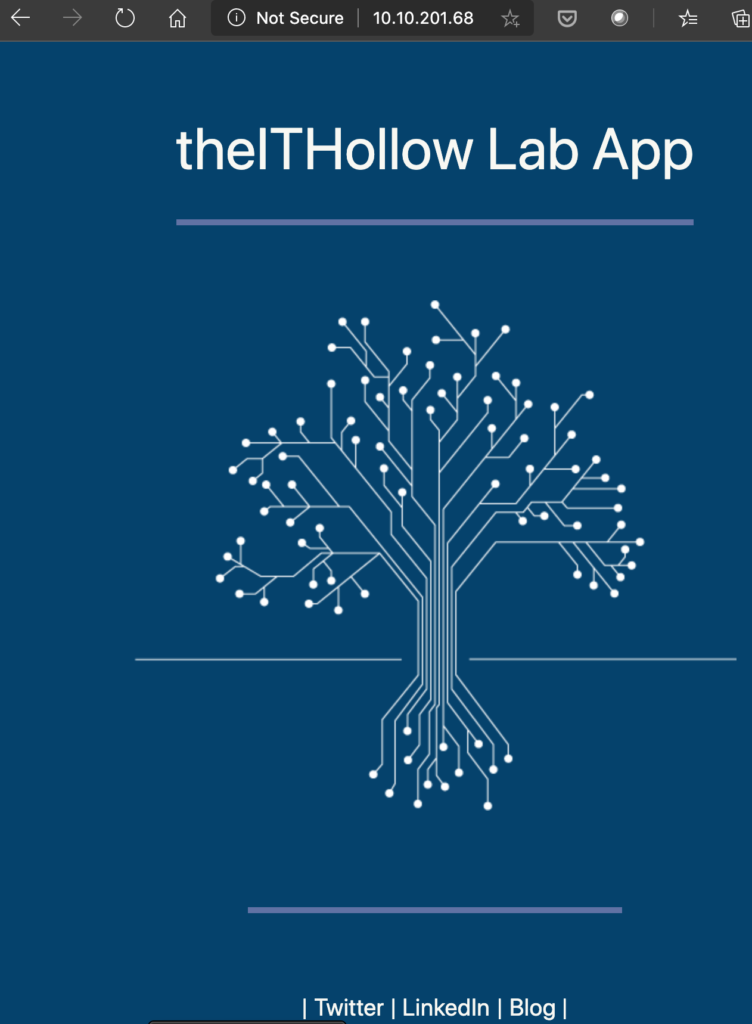

Hey! That’s neat! There is a new service listed called “hollowapp”. It’s of type LoadBalancer, and you can see it has an External-IP listed. Try navigating to that IP address in a web browser. Here’s what I saw when I tested in my browser.

Awesome! We have our first application deployed in a Tanzu Kubernetes Grid cluster and it’s doing the magic of setting up ingress routing to our apps through NSX-T.
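The same check can be done from a terminal. The external IP may not be populated the instant the service is created, so this sketch polls for it. The helper names, the retry count, and the `POLL_INTERVAL` variable are all hypothetical choices for illustration.

```shell
# Print the external IP of a LoadBalancer service, or nothing if none has
# been assigned yet. Errors are discarded so a pending service reads as empty.
external_ip() {
  kubectl get service "$1" \
    -o jsonpath='{.status.loadBalancer.ingress[0].ip}' 2>/dev/null
}

# Poll until the service has an external IP, then print it.
# $1 = service name, $2 = number of attempts (default 30).
# POLL_INTERVAL sets the seconds between attempts (default 5).
wait_for_external_ip() {
  svc="$1"
  tries="${2:-30}"
  i=0
  while [ "$i" -lt "$tries" ]; do
    ip="$(external_ip "$svc")"
    if [ -n "$ip" ]; then
      printf '%s\n' "$ip"
      return 0
    fi
    i=$((i + 1))
    sleep "${POLL_INTERVAL:-5}"
  done
  echo "no external IP assigned to $svc after $tries attempts" >&2
  return 1
}
```

With that in place, `curl -I "http://$(wait_for_external_ip hollowapp)"` should return response headers from the application once the load balancer is ready.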

Let’s take a look at which NSX-T objects were created to support ingress routing for this new application.

NSX-T Resources

So, somehow, by creating a Kubernetes service of type LoadBalancer, NSX was able to route traffic directly to the pod within the TKG guest cluster we provisioned. How does it do this?

Well, TKG clusters come equipped with the NSX Container Plugin (NCP), which listens for certain API calls, such as a request for a LoadBalancer service. When the plugin sees a call for a load balancer, it tells the NSX-T control plane how to build a load balancer for this specific service.
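To see which objects in your cluster would trigger this behavior, you can list every service of type LoadBalancer. This does not show anything about NCP itself; it only queries the Kubernetes API for the kind of service described above. `list_loadbalancer_services` is a hypothetical helper name.

```shell
# Hypothetical helper: print namespace/name for every Service of type
# LoadBalancer across all namespaces, one per line.
list_loadbalancer_services() {
  kubectl get services --all-namespaces \
    -o jsonpath='{range .items[?(@.spec.type=="LoadBalancer")]}{.metadata.namespace}{"/"}{.metadata.name}{"\n"}{end}'
}
```

In the lab from this post, the output should include the hollowapp service in whichever namespace you created it.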

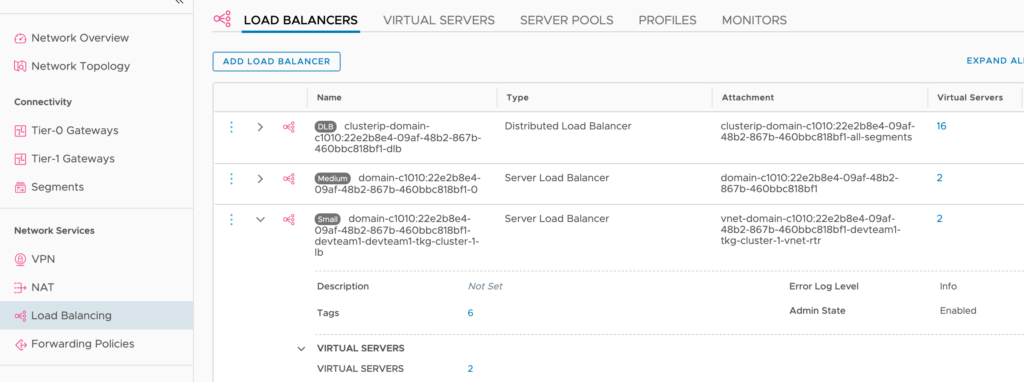

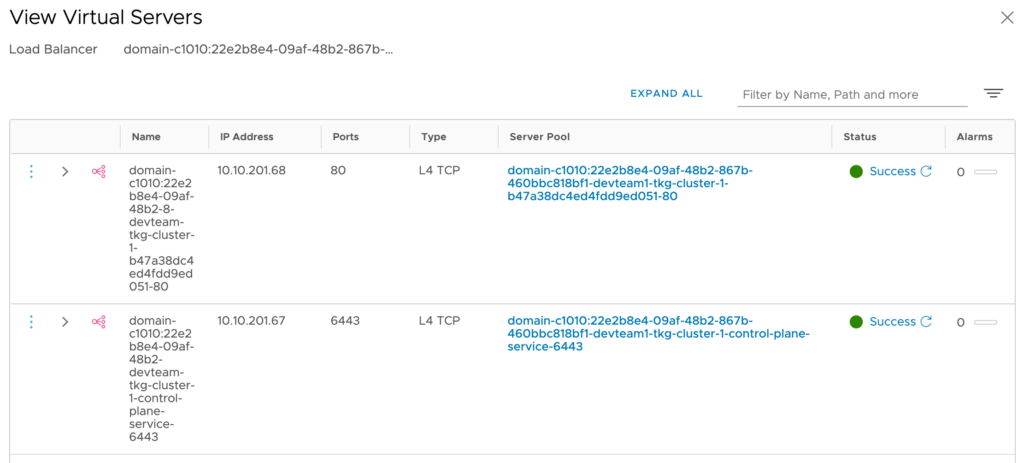

The result is that NSX-T creates a virtual server for the application. If you look in the NSX-T Manager console, under Networking –> Load Balancing, you’ll see several load balancers already created. Specifically, you’ll notice that each cluster has its own load balancer, which routes traffic to that cluster’s Kubernetes API. Here, I’ve expanded the TKG guest cluster load balancer, and you can see it has 2 virtual servers.

After clicking the Virtual Server hyperlink in the load balancer object, I can now see there are 2 virtual servers. One of these is on port 6443 which is the Kubernetes Control Plane API access. The other was provisioned for the application that we exposed as a type LoadBalancer.

You might be wondering about other types of access methods, such as an Ingress resource. These are supported in Tanzu clusters, but at this time they require an ingress controller, such as Contour, to be deployed in your clusters first. You can, of course, expose the ingress controller itself through a load balancer, as we’ve done in this post.
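For a rough idea of what that looks like, here is a sketch of an Ingress that routes all HTTP traffic to the hollowapp service. It rests on several assumptions not covered in this post: Contour is already installed and exposed, it answers to an ingress class named `contour`, and the cluster serves the `networking.k8s.io/v1` Ingress API (older clusters may only offer a beta version with a different schema). The file name is made up for illustration.

```shell
# Write an Ingress manifest that sends every HTTP path to the hollowapp
# service on port 80. The ingress class name is an assumption; use whatever
# class your ingress controller is configured with.
cat > hollowapp-ingress.yaml <<'EOF'
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: hollowapp
spec:
  ingressClassName: contour   # assumption: depends on your Contour install
  rules:
    - http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: hollowapp
                port:
                  number: 80
EOF
```

You would apply it with `kubectl apply -f hollowapp-ingress.yaml`. With an ingress controller in front, the hollowapp service itself would not need to be of type LoadBalancer.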

Summary

Tanzu Kubernetes Grid guest clusters with NSX-T integration can really save you some time when setting up load balancing. Simply create your apps, deploy a service of type LoadBalancer, and let the NSX Container Plugin and NSX-T do the rest of the work for you.