This post will focus on deploying Tanzu Kubernetes Grid (TKG) clusters in your vSphere 7 with Tanzu environment. These TKG clusters are the individual Kubernetes clusters that can be shared with teams for their development purposes.

I know what you’re thinking. Didn’t we already create a Kubernetes cluster when we set up our Supervisor Cluster? The short answer is yes. However, the Supervisor Cluster is a unique Kubernetes cluster that probably shouldn’t be used for normal workloads. We’ll discuss this in more detail in a follow-up post. For now, let’s focus on how to create TKG clusters; later we’ll discuss when to use them versus the Supervisor Cluster.

Gather Deployment Information

These steps assume that you’ve followed the series so far and have configured the prerequisites such as a Supervisor Cluster, a namespace, a content library, and a user with edit permissions.

Deploying a new TKG cluster consists of running a single command from the CLI:

kubectl apply -f tkgcluster.yaml

Yeah, that’s it. If you’re a Kubernetes operator, this command is going to seem very familiar! We’re deploying entire Kubernetes clusters based on a desired state expressed in a YAML file. This means that building a TKG cluster really consists of gathering the information we need to lay out that desired state.

Let’s look at the contents of a TKG YAML file and then begin to fill in some desired state information.

```yaml
apiVersion: run.tanzu.vmware.com/v1alpha1
kind: TanzuKubernetesCluster
metadata:
  name: [clustername]
  namespace: [namespace-name]
spec:
  distribution:
    version: [v1.16]
  topology:
    controlPlane:
      count: [3]
      class: [guaranteed-large]
      storageClass: [tkc-storage-policy-yellow]
    workers:
      count: [5]
      class: [guaranteed-xlarge]
      storageClass: [tkc-storage-policy-green]
```

The items in brackets [] are the values we need to fill in to create a new cluster. Most of them are self-explanatory; for example, the count fields set how many control plane nodes and worker nodes the cluster will have. The cluster name is up to you, but the namespace must match a namespace in the Supervisor Cluster on which you have edit permissions.
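Because the manifest is plain YAML, it can also be rendered from a handful of parameters instead of being edited by hand. The following is a minimal sketch, assuming a POSIX-compatible shell; the function name `render_tkc_manifest` and its argument order are hypothetical, and for brevity it uses one storage class for both the control plane and the workers.

```shell
# Hypothetical helper: print a TanzuKubernetesCluster manifest built from
# eight positional parameters (one storage class shared by both node pools).
render_tkc_manifest() {
  if [ "$#" -ne 8 ]; then
    echo "usage: render_tkc_manifest NAME NAMESPACE VERSION CP_COUNT CP_CLASS WORKER_COUNT WORKER_CLASS STORAGE_CLASS" >&2
    return 1
  fi
  local name="$1" namespace="$2" version="$3"
  local cp_count="$4" cp_class="$5" worker_count="$6" worker_class="$7"
  local storage_class="$8"
  # Same structure as the template above, with the placeholders substituted.
  cat <<EOF
apiVersion: run.tanzu.vmware.com/v1alpha1
kind: TanzuKubernetesCluster
metadata:
  name: ${name}
  namespace: ${namespace}
spec:
  distribution:
    version: ${version}
  topology:
    controlPlane:
      count: ${cp_count}
      class: ${cp_class}
      storageClass: ${storage_class}
    workers:
      count: ${worker_count}
      class: ${worker_class}
      storageClass: ${storage_class}
EOF
}
```

For example, `render_tkc_manifest tkg-cluster-1 devteam1 v1.16 3 best-effort-small 5 best-effort-small hollow-storage-profile > tkgcluster.yaml` writes a file that can then be applied with the kubectl apply command above.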

Now, let’s discuss a few fields that might need a bit more explanation.

NOTE: For full descriptions of ALL fields, consult the official VMware documentation here.

Version

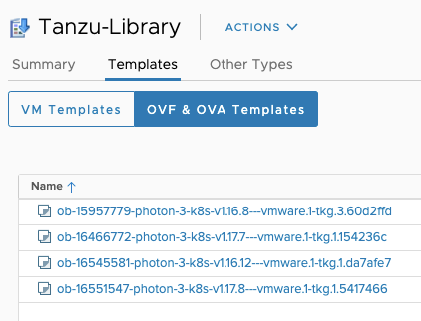

Version is the Kubernetes version that will be deployed. This is a nice feature, since you can run clusters on several different Kubernetes versions, all managed from the Supervisor Cluster. You can use a short version such as v1.16, or you can specify the full image name of a Kubernetes version. These image names come from the templates in the Content Library.
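To see which full image names are available, you can list the images the Supervisor Cluster knows about. This is a sketch under two assumptions: that the Supervisor Cluster exposes the Content Library templates as VirtualMachineImage objects, and that each image name embeds its Kubernetes version string. The helper name `find_tkg_images` is hypothetical.

```shell
# Hypothetical helper: list image names that mention a given version string.
# Assumes `kubectl get virtualmachineimages` works against the Supervisor Cluster.
find_tkg_images() {
  local version="$1"
  # -o name prints "<kind>.<group>/<name>"; sed strips everything up to the
  # first slash so only the image name remains, then grep filters by version.
  kubectl get virtualmachineimages -o name \
    | sed 's#^[^/]*/##' \
    | grep -F -- "$version"
}
```

Running `find_tkg_images v1.16` would then print only the images whose names contain `v1.16`.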

Class

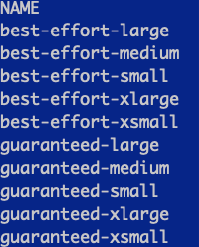

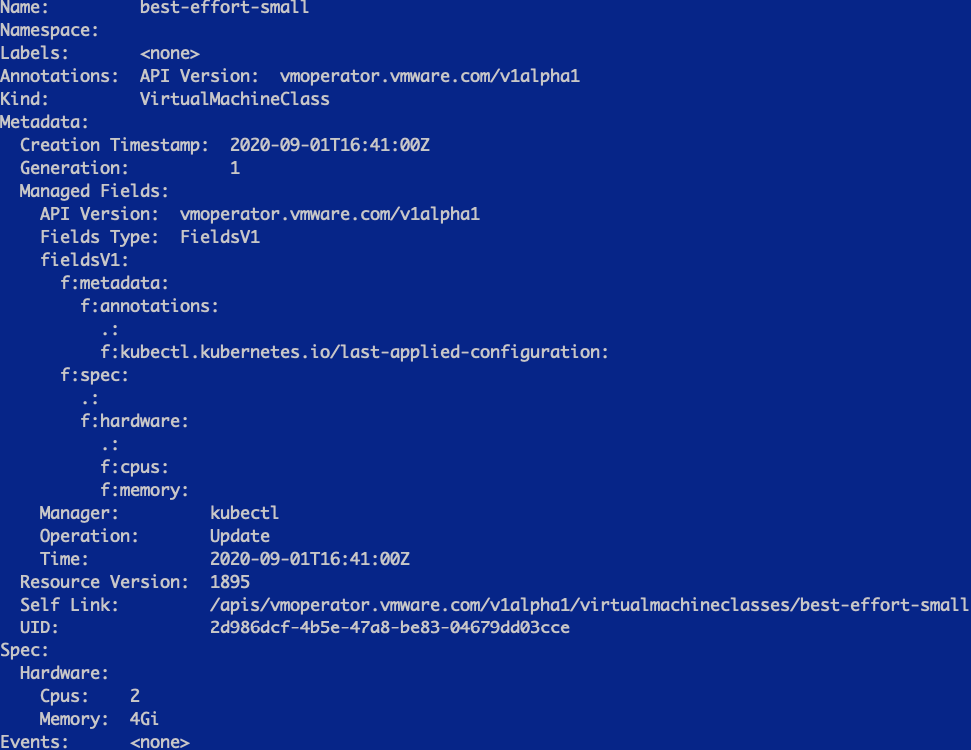

Class refers to the preset sizes (CPU and memory) of the node VMs. You can specify different classes for the control plane nodes and the worker nodes. So how do you know which classes are available for your cluster? You could look at the official documentation here, or you could list the resources in the Supervisor Cluster:

kubectl get virtualmachineclasses

For details about a specific class, run a describe against it, just as you would in any other Kubernetes environment:

kubectl describe virtualmachineclasses best-effort-small
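When comparing several sizes, it can help to describe every class in one pass. A minimal sketch, assuming `-o name` output for VirtualMachineClass objects; the function name is hypothetical.

```shell
# Hypothetical helper: describe every VM class visible in the Supervisor Cluster.
describe_all_vm_classes() {
  local cls
  # -o name yields one "virtualmachineclass.<group>/<name>" entry per line.
  for cls in $(kubectl get virtualmachineclasses -o name); do
    # Print a short header with just the class name, then the full details.
    echo "=== ${cls#*/} ==="
    kubectl describe "$cls"
  done
}
```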

StorageClass

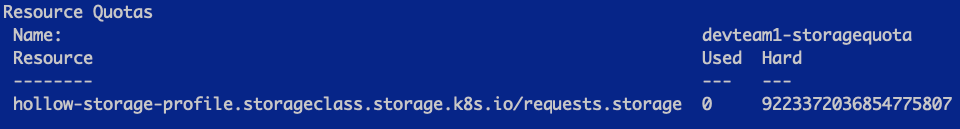

Kubernetes uses storage classes to decide how to provision persistent volumes. In a TKG cluster, we specify a vSphere storage policy as the storage class so that the cluster understands how to provision persistent volumes on vSphere datastores. In the manifest, the storageClass fields under topology select the policy that backs the disks of the control plane and worker nodes.

You can find the names of the storage classes available to your namespace by describing that namespace from the Supervisor Cluster:

kubectl describe ns [namespace-name]
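The storage class names appear in the namespace's resource quotas. The following sketch assumes each storage policy assigned to the namespace shows up as a quota entry named `<class>.storageclass.storage.k8s.io/requests.storage`, the standard Kubernetes per-storage-class quota key; the function name is hypothetical.

```shell
# Hypothetical helper: extract storage class names from `kubectl describe ns`.
list_namespace_storage_classes() {
  local namespace="$1"
  kubectl describe ns "$namespace" \
    | awk '{
        # Keep the first column only when it ends with the per-class quota suffix,
        # and print the part in front of that suffix (the storage class name).
        suffix = ".storageclass.storage.k8s.io/requests.storage"
        n = length($1) - length(suffix)
        if (n > 0 && substr($1, n + 1) == suffix) print substr($1, 1, n)
      }' \
    | sort -u
}
```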

Create a TKG Cluster

OK, I’ve filled out my entire TKG YAML manifest and I’m ready to create my cluster. Here is the YAML I’m deploying for my cluster.

```yaml
apiVersion: run.tanzu.vmware.com/v1alpha1
kind: TanzuKubernetesCluster
metadata:
  name: tkg-cluster-1
  namespace: devteam1
spec:
  distribution:
    version: v1.16
  topology:
    controlPlane:
      count: 3
      class: best-effort-small
      storageClass: hollow-storage-profile
    workers:
      count: 5
      class: best-effort-small
      storageClass: hollow-storage-profile
```

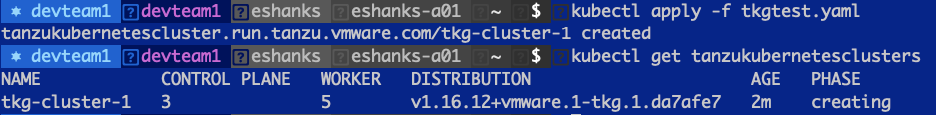

After logging in to the Supervisor Cluster, I can simply apply the manifest to create the cluster:

kubectl apply -f [filename].yaml
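Since the template marks values to fill in with brackets, a quick pre-flight check can catch a forgotten placeholder before anything is sent to the Supervisor Cluster. This is a sketch with a heuristic assumption: any value consisting only of `[...]` is treated as an unfilled placeholder, which would also flag a legitimate YAML flow sequence (this manifest uses none). The function name is hypothetical.

```shell
# Hypothetical helper: refuse to apply a manifest that still contains a
# bracketed placeholder value such as "name: [clustername]".
apply_tkc_manifest() {
  local manifest="$1"
  # Match "key: [anything]" at the end of a line; matching lines go to stderr.
  if grep -nE ':[[:space:]]*\[[^]]*][[:space:]]*$' "$manifest" >&2; then
    echo "error: fill in the bracketed placeholders listed above before applying" >&2
    return 1
  fi
  kubectl apply -f "$manifest"
}
```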

Once the manifest is applied, you can check the status of your cluster by running:

kubectl get tanzukubernetesclusters
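Provisioning takes a while, so rather than re-running the command by hand you can poll it. This sketch assumes the v1alpha1 object reports its lifecycle in `.status.phase` (the PHASE column of the command above) and that the value becomes `running` when the cluster is ready; the function name and the default of 60 attempts every 30 seconds are hypothetical.

```shell
# Hypothetical helper: poll a TanzuKubernetesCluster until its phase is "running".
# Usage: wait_for_tkc NAME NAMESPACE [ATTEMPTS] [INTERVAL_SECONDS]
wait_for_tkc() {
  local name="$1" namespace="$2"
  local attempts="${3:-60}" interval="${4:-30}"
  local i=1 phase
  while [ "$i" -le "$attempts" ]; do
    # Treat a failed lookup (e.g. object not created yet) as an empty phase.
    phase="$(kubectl get tanzukubernetesclusters "$name" -n "$namespace" \
      -o jsonpath='{.status.phase}' 2>/dev/null)" || phase=""
    echo "attempt $i: phase=${phase:-unknown}"
    if [ "$phase" = "running" ]; then
      return 0
    fi
    sleep "$interval"
    i=$((i + 1))
  done
  echo "timed out waiting for $name in $namespace" >&2
  return 1
}
```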

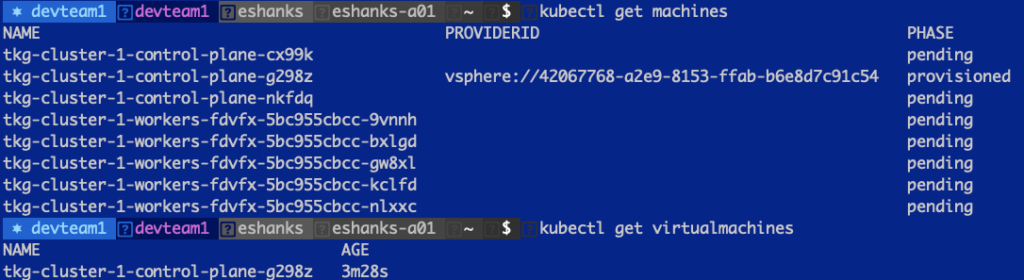

A few other resources are useful for troubleshooting. You can also list the Machine and VirtualMachine objects:

kubectl get machines

kubectl get virtualmachines

These two object types can help you identify whether the IaaS provider is creating the resources correctly.
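When troubleshooting, it is convenient to see both lists for a namespace together. A minimal sketch; the function name is hypothetical, and the expectation that each Machine should eventually have a corresponding VirtualMachine is an assumption used only as a rough sanity check.

```shell
# Hypothetical helper: show Machines and VirtualMachines for one Supervisor
# namespace side by side, so a Machine without a VM stands out.
tkc_troubleshoot() {
  local namespace="$1"
  echo "--- machines in $namespace ---"
  kubectl get machines -n "$namespace" -o wide
  echo "--- virtualmachines in $namespace ---"
  kubectl get virtualmachines -n "$namespace" -o wide
}
```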

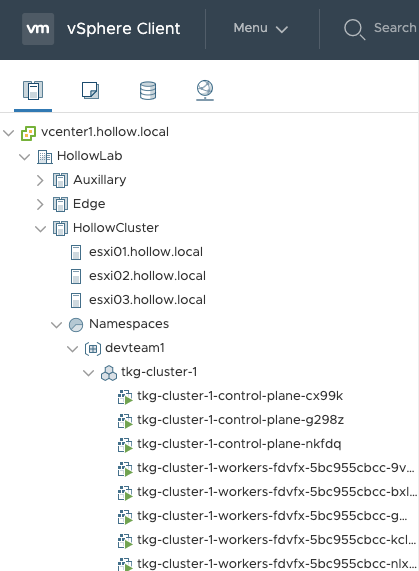

Once provisioning is complete, you should see your cluster in vCenter, the kubectl get tanzukubernetesclusters command should report a provisioned cluster, and you can begin the fun work of building apps for it!

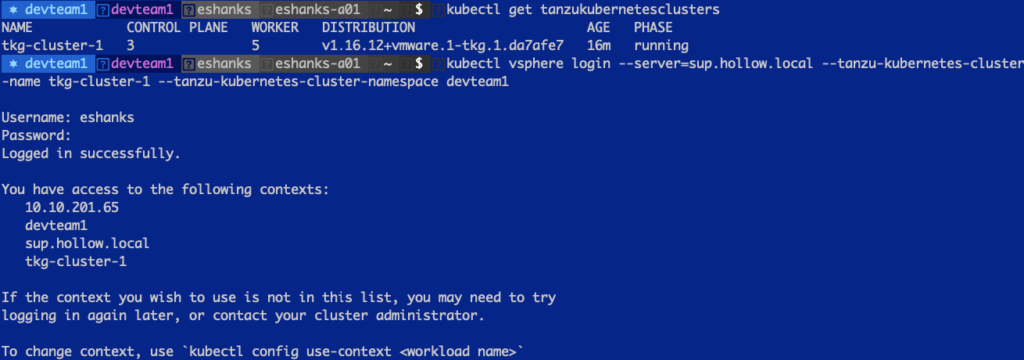

Connect to the TKG Cluster

OK, you want to know how to access your new cluster, right? Well, here you go. To log in to the guest cluster you just created, run:

kubectl vsphere login --server=[SupervisorControlPlane] --tanzu-kubernetes-cluster-name [tkg cluster name] --tanzu-kubernetes-cluster-namespace [Supervisor Namespace]

Now you only need to change your context to start deploying resources on your cluster!
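The login and the context switch can be wrapped together. This sketch assumes the login creates a kubectl context named after the TKG cluster; the function name is hypothetical, and any extra arguments (for example `--vsphere-username`) are passed through to the login unchanged.

```shell
# Hypothetical helper: log in to a TKG cluster, switch to its context, and
# list its nodes as a quick smoke test.
# Usage: connect_tkc SUPERVISOR_SERVER TKG_CLUSTER_NAME SUPERVISOR_NAMESPACE [extra login args]
connect_tkc() {
  local server="$1" cluster="$2" namespace="$3"
  shift 3
  kubectl vsphere login --server="$server" \
    --tanzu-kubernetes-cluster-name "$cluster" \
    --tanzu-kubernetes-cluster-namespace "$namespace" "$@" || return 1
  # Assumes the context created by the login is named after the cluster.
  kubectl config use-context "$cluster" || return 1
  kubectl get nodes
}
```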

Summary

In this post, we gathered the information needed to build a desired state configuration file for our TKG guest clusters. We deployed the cluster, connected to it with the kubectl CLI, and can now provision workloads.

Stay tuned for future posts where we discuss how this cluster can be updated, upgraded, modified, and destroyed.