While I was at VMworld this year in San Francisco, there was a lot of buzz about a company called PernixData. Maybe the buzz was just from some of the superstars who built the company, such as co-founders Satyam Vaghani (better known as the father of VMFS) and Poojan Kumar (a co-founder of Exadata).

Given the smart minds that have been around the company since the start, I thought I'd better stop by their booth and at least say “hi”.

The goal of PernixData’s main product, FVP, is to increase storage performance without adding storage capacity.

What is PernixData FVP?

PernixData FVP allows customers to add local SSD or flash drives to a server to be used as a local cache. The main premise is that any time a virtual machine needs to read from disk, it has to traverse the storage fabric and access a SAN/NAS. Because of cost, the SAN usually isn’t full of SSDs, so it relies on a variety of slower spinning disks.

In the basic case, a virtual machine doing a disk read goes through the hypervisor, which then accesses a shared disk device.

PernixData uses a spare SSD on the hypervisor host as a read cache. Imagine how much faster disk reads could be if the data were all stored locally.

As data streams in from the array, PernixData caches a copy of it on the local SSD. Because filling the cache means writing to the flash device, this process can actually slow down storage performance in a few specific situations. Frank Denneman describes this process and the resulting “false writes” on his blog.

Once the cache has “warmed up” (cached enough data on the local drive to be useful), future reads can be served directly from the local solid-state drive instead of traveling all the way to the storage array. These reads should be much faster, since the path is shorter and the disk is faster. This also takes load off the storage array: fewer reads on the array means more time for it to handle other requests.
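To make the read path concrete, here is a minimal Python sketch of a host-local read cache sitting in front of a shared array. It is a toy model under stated assumptions, not how FVP is implemented: the class name `LocalReadCache` and the two latency constants are hypothetical placeholders. A miss goes to the array and then writes a copy into flash (the “false write” mentioned above), while a hit is served locally.

```python
"""Toy model of a host-local flash read cache in front of a shared array.

Hypothetical sketch: LocalReadCache and the latency constants are
illustrative placeholders, not part of PernixData FVP.
"""

ARRAY_READ_MS = 8.0   # assumed latency of a read served by spinning disk on the SAN/NAS
FLASH_READ_MS = 0.2   # assumed latency of a read served by the local SSD


class LocalReadCache:
    def __init__(self):
        self.flash = {}          # block id -> data cached on the local SSD
        self.hits = 0
        self.misses = 0
        self.false_writes = 0    # flash writes caused by populating the cache on a miss

    def read(self, block, array):
        """Return (data, latency_ms) for one VM read of `block`."""
        if block in self.flash:
            self.hits += 1
            return self.flash[block], FLASH_READ_MS
        # Miss: fetch from the array, then copy the data into flash (a "false write").
        self.misses += 1
        data = array[block]
        self.flash[block] = data
        self.false_writes += 1
        return data, ARRAY_READ_MS

    def hit_ratio(self):
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


if __name__ == "__main__":
    array = {i: f"block-{i}" for i in range(100)}   # stand-in for data on the array
    cache = LocalReadCache()
    for pass_no in (1, 2):                           # read the same 100 blocks twice
        total_ms = sum(cache.read(b, array)[1] for b in range(100))
        print(f"pass {pass_no}: {total_ms:.0f} ms")
    print(f"hit ratio {cache.hit_ratio():.2f}, false writes {cache.false_writes}")
```

Reading the same 100 blocks twice shows the warm-up effect: the first pass pays the assumed array latency and performs a false write for every block, while the second pass is served entirely from flash, for an overall hit ratio of 0.50.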

PernixData Installation

PernixData Server install

The installation requires a virtual machine to manage the flash caching. This can be done on a standard Windows Server. The software install is a straightforward Next, Next, Finish wizard that asks for the vCenter configuration details along the way. My screenshots are below.

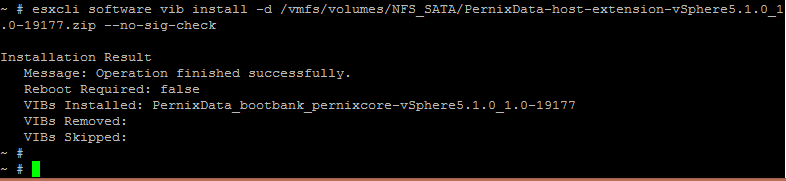

Host VIBs

Each ESXi host also needs a new VIB installed so that you can claim an SSD for PernixData FVP to use for caching. To do this, SSH into the host while it is in maintenance mode. Once connected, run the command below, substituting the location of the PernixData VIB.

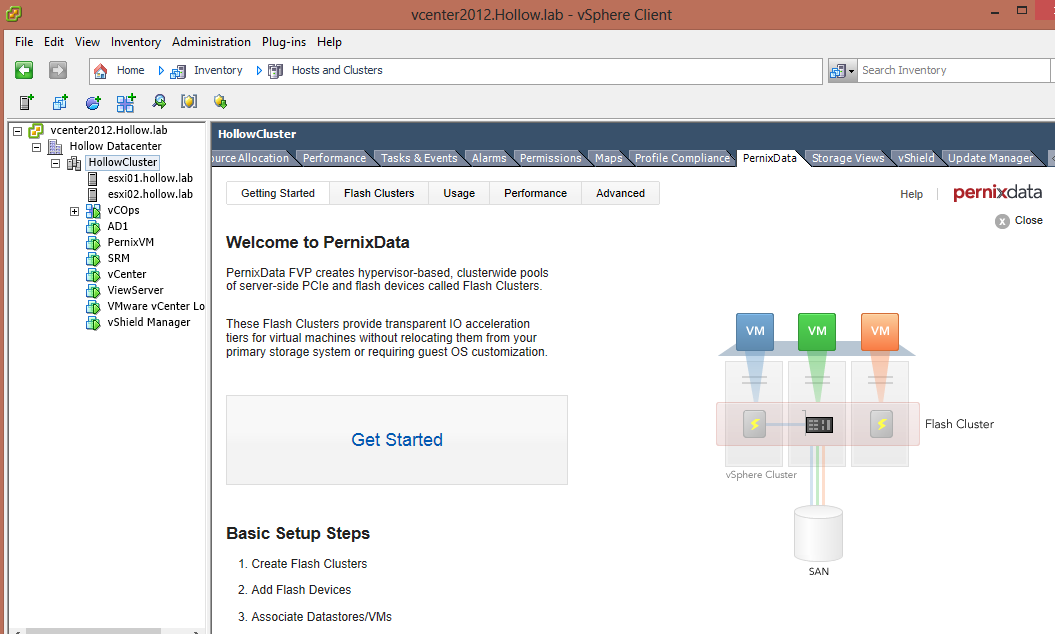

Configuration

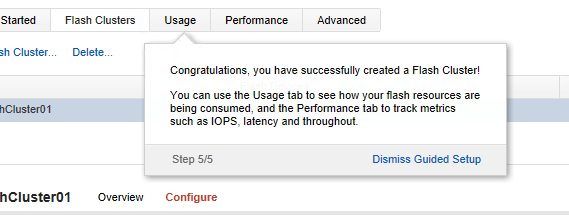

Configuration of the PernixData cluster was a breeze. There is a guided setup in vCenter to get you started: select the cluster, open the PernixData tab, and click the “Get started” button to walk through it.

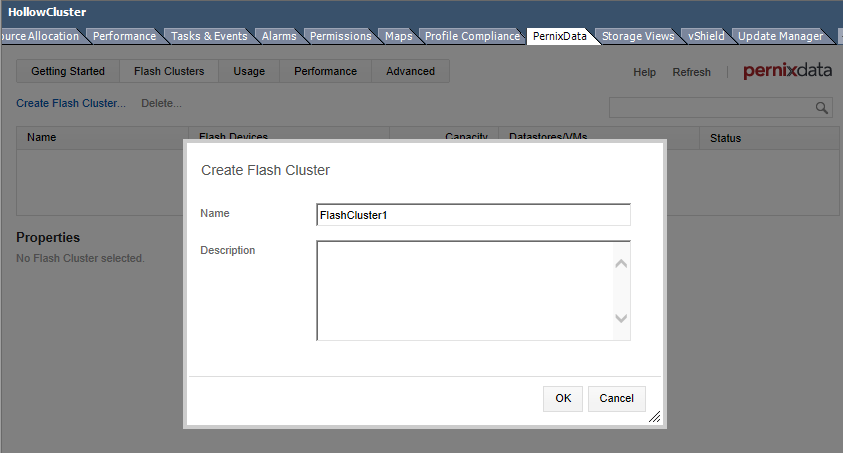

Create a PernixData cluster (this just consists of giving it a name).

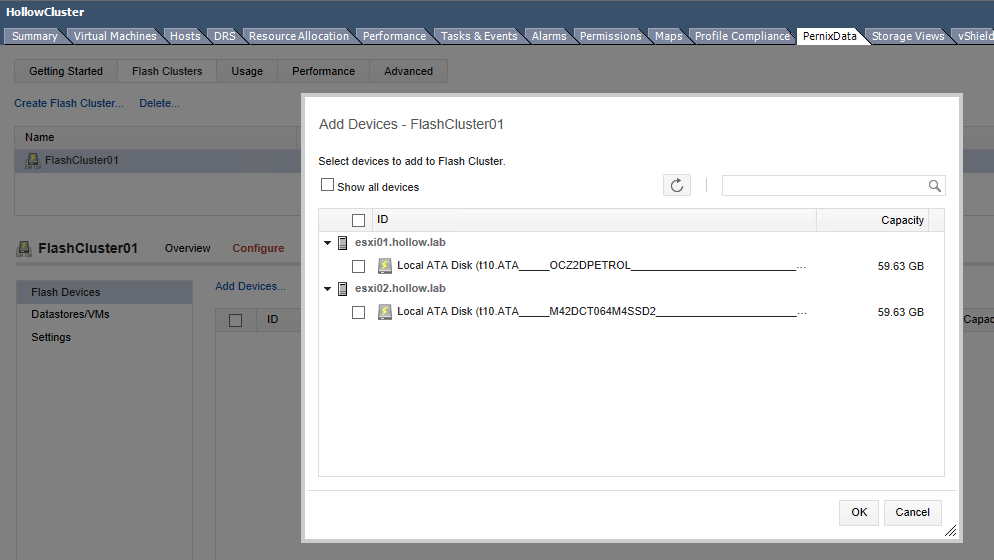

Select the local SSDs that will be used for caching.

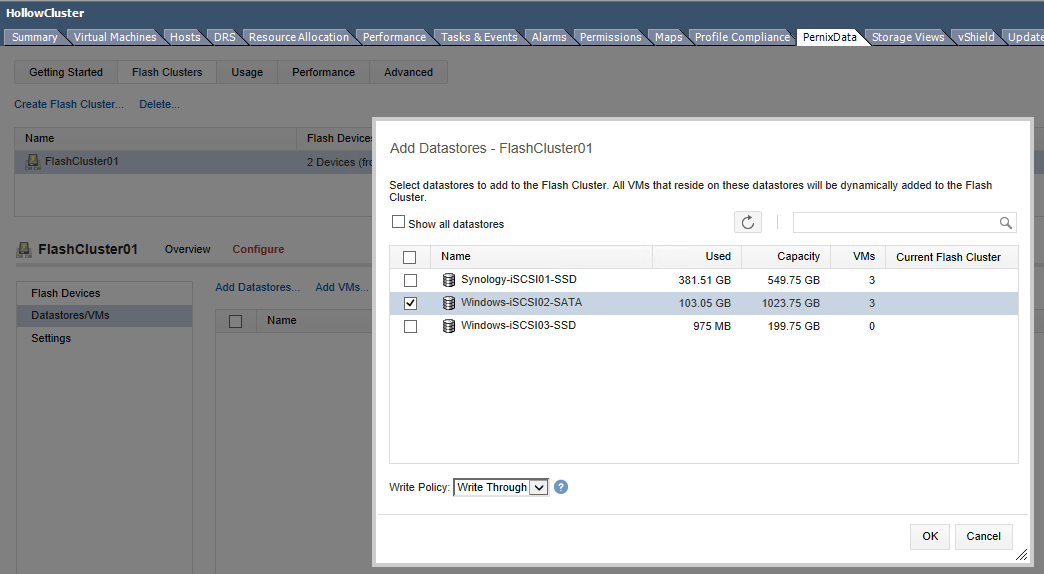

Select the datastores that you want to accelerate. NOTE: PernixData is VM-aware, meaning that you can accelerate a specific VM without accelerating an entire datastore if you’d prefer.

That’s it! Reap the rewards of local cache!

You can check the usage and performance tabs to see how your IO is doing, how much of your data is being read from cache, and how quickly data is being evicted to make room for new data to be cached.

Write Back vs Write Through

PernixData offers two caching policies: Write Back and Write Through.

Write Through takes any writes from a virtual machine and writes them to the shared storage device. At the same time, PernixData FVP also writes the data to the local flash device, but the write is only acknowledged once the shared storage has committed it. The diagram below should show what a Write Through policy looks like (it’s the same graphic as above).

Write Back, on the other hand, writes directly to the local flash device and then commits those writes from the flash device to the shared storage in the background. This allows for lower latency, but it also introduces a risk: a host could fail after data is written to its local flash device but before that data is committed to the shared storage. To combat this, FVP gives you the option of using replicas, which also write the data to a sibling host’s SSD in case of failure.
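The difference between the two policies comes down to when the VM’s write is acknowledged and where the only copies live until the array catches up. The Python sketch below models that under stated assumptions: the `Host` class, the function names, the latency constants, and the way replicas are tracked are all hypothetical, and network latency to the replica host is ignored. It is an illustration of the concept, not how FVP works internally.

```python
"""Toy comparison of Write Through and Write Back caching with a replica.

Hypothetical sketch: the Host class, function names, latency constants, and
replica bookkeeping are illustrative assumptions, not FVP internals.
Network latency to the replica host is ignored.
"""

ARRAY_WRITE_MS = 10.0  # assumed time for the shared array to commit a write
FLASH_WRITE_MS = 0.3   # assumed time for a flash write (local or on a peer)


class Host:
    def __init__(self, name):
        self.name = name
        self.flash = {}      # block -> data in this host's flash cache
        self.pending = []    # Write Back blocks not yet committed to the array
        self.replicas = {}   # block -> data held on behalf of a peer host


def write_through(host, array, block, data):
    """Write to flash and the array; the VM waits for the array to commit."""
    host.flash[block] = data
    array[block] = data
    return ARRAY_WRITE_MS


def write_back(host, peers, block, data):
    """Write to local flash and peer replicas; acknowledge before the array sees it."""
    host.flash[block] = data
    for peer in peers:
        peer.replicas[block] = data
    host.pending.append(block)
    return FLASH_WRITE_MS


def destage(host, peers, array):
    """Background task: commit pending blocks to the array, then drop replicas."""
    for block in host.pending:
        array[block] = host.flash[block]
        for peer in peers:
            peer.replicas.pop(block, None)
    host.pending.clear()


def recover_from_replica(peer, array):
    """If the primary host dies before destaging, the peer commits its copies.

    Assumes every replica held on `peer` belongs to the failed host.
    """
    array.update(peer.replicas)
    peer.replicas.clear()


if __name__ == "__main__":
    array = {}
    esx01, esx02 = Host("esx01"), Host("esx02")      # hypothetical host names
    print("Write Through ack:", write_through(esx01, array, 1, "a"), "ms")
    print("Write Back ack:", write_back(esx01, [esx02], 2, "b"), "ms")
    # esx01 "fails" here: block 2 was acknowledged but never reached the array.
    print("block 2 on array before recovery:", 2 in array)
    recover_from_replica(esx02, array)
    print("block 2 on array after recovery:", 2 in array)
```

The end of the demo simulates the risky window: block 2 has been acknowledged to the VM but not yet destaged, so without the copy on the peer host it would exist only in the failed host’s flash.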

Performance

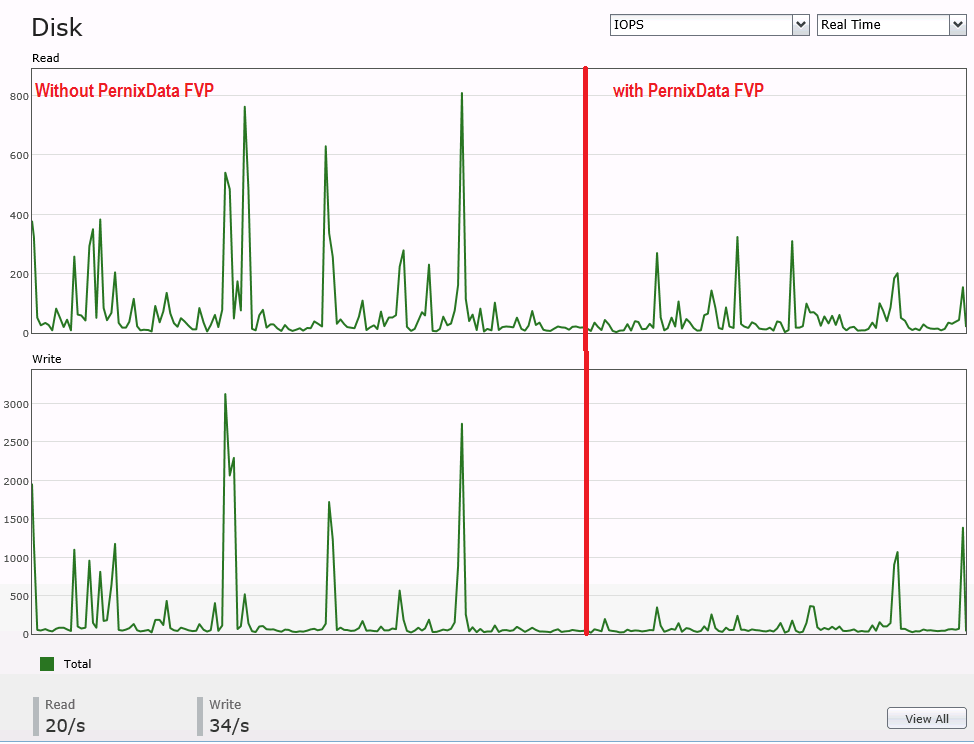

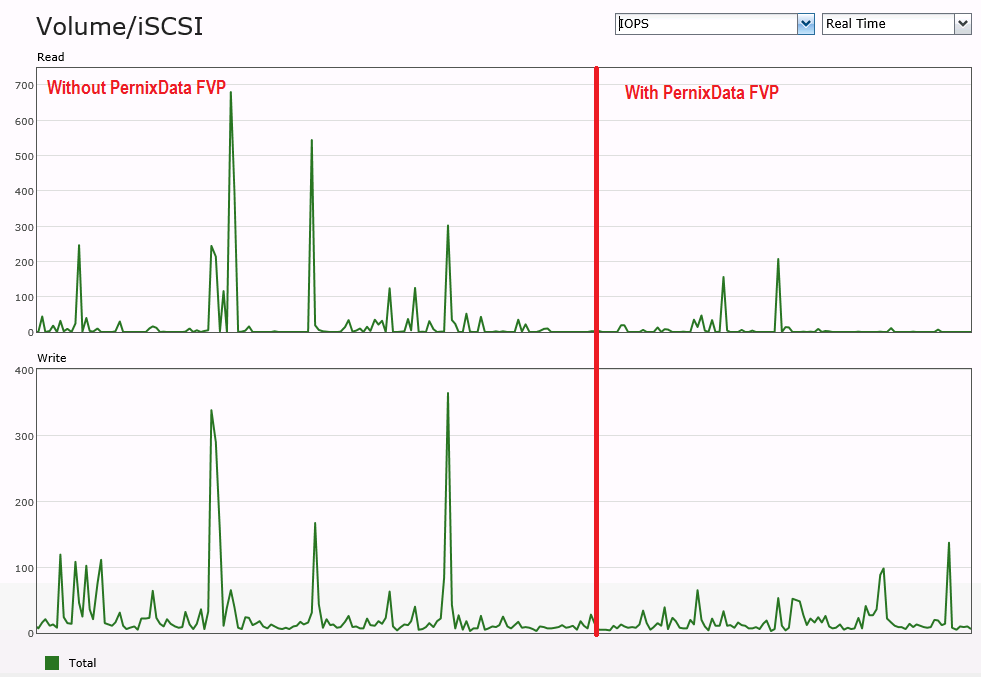

I ran some performance tests in my home lab with the free 60-day trial. My home lab uses a Synology DS411+ slim, and I have two hosts, each with a local 60GB SSD for the flash cache.

I didn’t want to use IOmeter to test performance because it’s not a real-world test. Running IOmeter once wouldn’t cache any data, would trigger the “false writes” issue, and wouldn’t show any performance gain. Instead, I used a free trial of LoginVSI, which simulates a number of Terminal Server sessions, complete with copies, pastes, web browser sessions, and some Outlook and Excel procedures. This was the closest thing I could find to a real-world testing scenario.

The two screenshots above show the performance of my Synology. The left half of each graph shows the activity during a LoginVSI test (10 RDP sessions) with PernixData FVP off; the right half shows a new LoginVSI test with PernixData FVP on.

To be fair, the performance doesn’t look much better, but I suspect that in a real-life scenario, such as a read-heavy database workload, you would see a bigger improvement.

You can see from the PernixData tab in vCenter that reads are being served from the local cache and that, in my tests, none of the data is being evicted. Again, a longer real-world test should give more representative results. The lack of evictions shows that my test’s data fit comfortably in the cache, so my testing footprint wasn’t large enough to show what would happen in real life.
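A quick simulation helps show why zero evictions points to a small test footprint. This Python sketch assumes a fixed-capacity LRU cache; the post doesn’t describe FVP’s actual eviction policy, so LRU, the class name `LRUFlashCache`, the `run` helper, and the block counts are all illustrative assumptions.

```python
"""Why a small test footprint shows zero evictions.

Hypothetical sketch: a fixed-capacity LRU cache stands in for the flash
device. FVP's real eviction policy is not described in the post; LRU is
an assumption chosen for illustration.
"""
from collections import OrderedDict


class LRUFlashCache:
    def __init__(self, capacity_blocks):
        self.capacity = capacity_blocks
        self.blocks = OrderedDict()   # block id -> None, ordered oldest -> newest
        self.hits = self.misses = self.evictions = 0

    def read(self, block):
        if block in self.blocks:
            self.hits += 1
            self.blocks.move_to_end(block)       # mark as most recently used
            return
        self.misses += 1
        if len(self.blocks) >= self.capacity:
            self.blocks.popitem(last=False)      # evict the least recently used block
            self.evictions += 1
        self.blocks[block] = None


def run(working_set, capacity, passes=3):
    """Read blocks 0..working_set-1 in order, `passes` times; return counters."""
    cache = LRUFlashCache(capacity)
    for _ in range(passes):
        for block in range(working_set):
            cache.read(block)
    return cache.hits, cache.misses, cache.evictions


if __name__ == "__main__":
    # Same cache size, two different test footprints.
    for ws in (500, 1500):
        hits, misses, evictions = run(working_set=ws, capacity=1000)
        print(f"working set {ws}: hits={hits} misses={misses} evictions={evictions}")
```

With a 500-block working set and room for 1,000 blocks, everything fits after the first pass, so there are no evictions, much like the LoginVSI test above. A 1,500-block cyclic scan is the opposite extreme: LRU evicts each block just before it is needed again, so every read misses. Real workloads land somewhere in between, which is why a larger, longer test would be more informative.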

Overall

I was impressed with PernixData FVP and how easy it was to set up and configure. You can turn caching on and off very simply, set it by VM, datastore, or both, and choose from a few replica options. If your storage array has spare capacity but isn’t delivering the performance you were hoping for, consider trying PernixData FVP to see if it relieves your pain points.