Sometimes things don’t go quite as we’ve planned. When that happens in a computer system, we turn to the logs to tell us what went wrong and to give us some clues on either how to fix the issue or where to look for the next clue. This post focuses on where to look for issues in your Kubernetes deployment.

Before we dive into the logs, we must acknowledge that there are different ways to install a Kubernetes cluster. The pieces and parts can be deployed as system services or as containers, and the way to obtain their logs changes accordingly. This post uses a previous post about a k8s install as an example of where to find those logs.

Journal Logs

Some of the Kubernetes components will likely be installed as Linux systemd services. Logs for any component deployed as a systemd service can be found in the Linux journal on the host where the service runs. This could include etcd logs, kubelet logs, or really any of the components running as a service. We’ll look at one component that should run as a systemd service below, the kubelet.

Kubelet Logs

The kubelet runs on every node in the cluster. It’s used to make sure the containers on that node are healthy and running, and it also runs our static pods, such as our API server. The kubelet logs are often reviewed to make sure that the cluster nodes are healthy, and they are often the first place I look for issues when the answer isn’t obvious to me. Since the kubelet runs as a systemd service, we can access those logs by using the journalctl command on the Linux host.

journalctl -xeu kubelet
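The journal can get long on a busy node, so it often helps to narrow the output. The sketch below wraps a few standard journalctl options (`-u`, `--since`, `-p`, `--no-pager`) in two small helpers. The helper names `unit_logs` and `unit_errors` and the one hour default window are assumptions made for illustration, not part of any tool.

```shell
#!/bin/sh
# Hypothetical helpers for reading a systemd unit's journal entries.

# Print entries for a unit within a time window.
unit_logs() {
    unit="${1:-kubelet}"       # systemd unit to inspect (default: kubelet)
    since="${2:-1 hour ago}"   # any time spec journalctl accepts
    # -u selects the unit, --since limits the window,
    # --no-pager writes straight to the terminal or a pipe.
    journalctl -u "$unit" --since "$since" --no-pager
}

# Same as above, but only entries at priority "err" or more severe.
unit_errors() {
    journalctl -u "${1:-kubelet}" -p err --since "${2:-1 hour ago}" --no-pager
}
```

For example, `unit_logs kubelet "30 min ago"` shows the last half hour of kubelet entries, and `unit_errors etcd today` would show today's errors if etcd runs as a systemd service on that host. To watch new entries as they arrive, `journalctl -fu kubelet` follows the journal.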

Container Logs

The rest of this post assumes that the other components are running as containers. Container logs are accessed differently, and you must know which container you’re looking for, so we’ll explain those below.

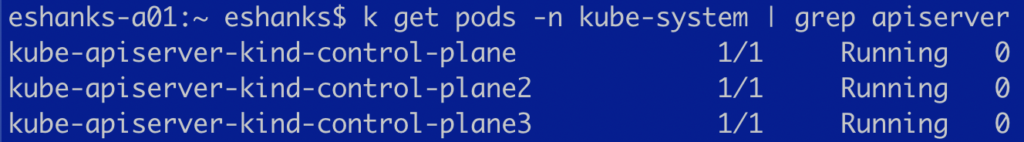

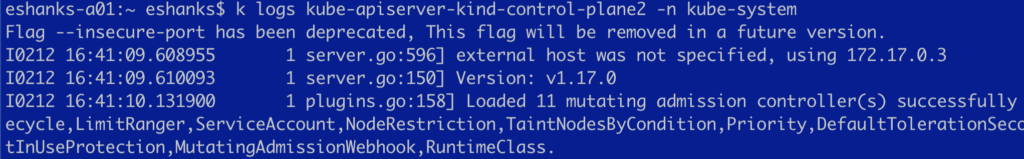

Before we discuss the individual containers, it’s important to realize that container logs may be accessed in different ways depending on the situation. For example, if the Kubernetes cluster is healthy enough to run kubectl commands, we’ll use the command:

kubectl logs <pod name goes here>

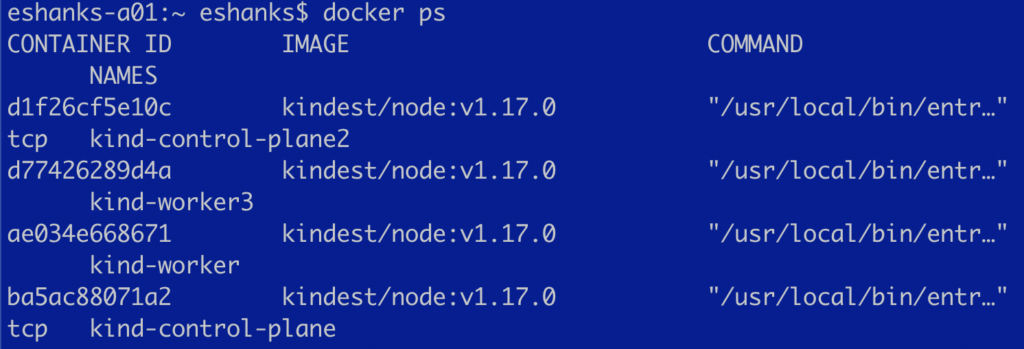

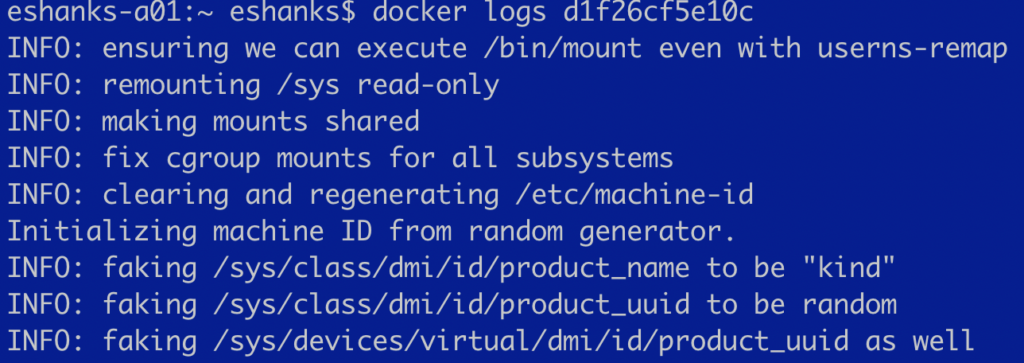
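When the components run as pods, they usually live in the kube-system namespace, so the command needs a namespace flag. The sketch below assumes that namespace and wraps two common variations: limiting output with `--tail`, and reading the logs of a container's previous instance with `--previous`. The helper names and the 100 line default are hypothetical.

```shell
#!/bin/sh
# Hypothetical helpers around kubectl logs.
# Assumption: the component pods run in the kube-system namespace.

# Print the last N lines of a pod's logs (default: 100).
pod_logs() {
    pod="$1"
    lines="${2:-100}"
    kubectl logs "$pod" --namespace kube-system --tail="$lines"
}

# Print logs from the previous instance of the pod's container,
# which helps when the container keeps restarting.
previous_pod_logs() {
    kubectl logs "$1" --namespace kube-system --previous
}
```

Running `kubectl get pods --namespace kube-system` first lists the pod names to pass in, for example `pod_logs <pod name goes here> 50`.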

But what if the Kubernetes cluster is so unhealthy that the kubectl commands don’t work? You won’t even be able to run the commands to read the logs.

If this happens, the next step is to get the logs through your container runtime. For example, if our API server container is running but the K8s cluster isn’t, you can run the container runtime’s own commands (Docker in this example) on the host where the container runs, such as:

docker logs <container id goes here>
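To get the container ID in the first place, `docker ps` can filter by name. The sketch below looks up a container by a name fragment and prints the tail of its logs. The helper name `container_logs` is hypothetical, and it assumes the component's name appears somewhere in the container name, which depends on how your cluster was installed.

```shell
#!/bin/sh
# Hypothetical helper: find a container by name fragment, then print its logs.
container_logs() {
    name="$1"             # fragment expected to appear in the container name
    lines="${2:-100}"     # how many trailing log lines to print
    # -a includes exited containers, -q prints only IDs,
    # --filter name= matches on part of the container name.
    id="$(docker ps -a -q --filter "name=$name" | head -n 1)"
    if [ -z "$id" ]; then
        echo "no container matching '$name'" >&2
        return 1
    fi
    docker logs --tail "$lines" "$id"
}
```

A call such as `container_logs kube-apiserver 50` would print the last 50 lines from the first match. If several containers match the fragment, only the first one listed is used, so it is worth checking the plain `docker ps -a` output when the result looks wrong.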

You should now have the commands you need to get logs from the other Kubernetes components that are running in your cluster as containers. The list below will explain what types of issues you might have and which containers you might want to check to solve them.

- API Server Logs - The API server is the brains of the whole k8s operation. This is a good place to start if you’re having issues with anything in the cluster. For example, these logs can help confirm that the API server can write to etcd correctly, or reveal authentication issues.

- Kube Scheduler Logs - WHY AREN’T MY PODS STARTING!? The kube scheduler is responsible for placement of pods on the nodes. If you’re troubleshooting zones, or pods not starting up, check this container.

- Controller Manager Logs - The controller manager has a multitude of services it’s managing, including replica controllers and cloud providers. I’ve used these logs to troubleshoot issues with my vSphere cloud provider connections.

- etcd Logs - The stateful storage for Kubernetes. The API Server is the only thing writing to this key/value store (or it should be!). If the etcd database isn’t happy, the cluster isn’t going to be very useful. Check this for information about etcd quorum and certificates when the API server is connecting to the store.
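When it isn’t clear which component is at fault, it can help to skim a few lines from each one in turn. The sketch below loops over the four components in the list above. It assumes a kubeadm-style install where each of these pods runs in kube-system and carries a `component=<name>` label; other installers may label pods differently, and the helper name `control_plane_logs` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: print recent log lines from each control plane component.
# Assumption: pods live in kube-system and are labeled component=<name>.
control_plane_logs() {
    lines="${1:-20}"   # trailing lines to show per component
    for component in kube-apiserver kube-scheduler kube-controller-manager etcd; do
        echo "== $component =="
        # --selector picks pods by label instead of by exact pod name.
        kubectl logs --namespace kube-system \
            --selector "component=$component" --tail="$lines"
    done
}
```

Calling `control_plane_logs 5` prints a short header and up to five lines per matching pod. If a selector matches nothing, checking the real labels with `kubectl get pods --namespace kube-system --show-labels` is a reasonable next step.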

Summary

Hopefully you’ll never need to look up any Kubernetes logs because it just runs. My experience has told me that you’ll need to look up logs at some point in your k8s journey. Remember that any logs for systemd services such as the kubelet are found in the journal. Other logs are written to stdout and can be accessed by using either the kubectl logs command or the container runtime’s logs command. In an enterprise environment, tools such as Fluentd are often used to aggregate these logs and store them centrally for easier review. Happy log hunting!