The use cases here are open for debate, but you can set up a serverless call to vRealize Orchestrator to execute your custom orchestration tasks. Maybe you’re integrating this with an AWS IoT Button, or you want voice deployments with Amazon Echo, or maybe you’re just trying to trigger your workflows based on a CloudWatch event in AWS. In any case, it is possible to set up an AWS Lambda function to execute a vRO workflow. In this post, we’ll build a Lambda function that executes a vRO workflow that deploys a CentOS virtual machine in vRealize Automation, but the workflow could really be anything you want.

If you’re not familiar with AWS Lambda, it is a service that lets you run Python, Node.js, and Java functions without having to manage a server to run them on, hence “serverless”. The functions can be used for just about anything you’d like.

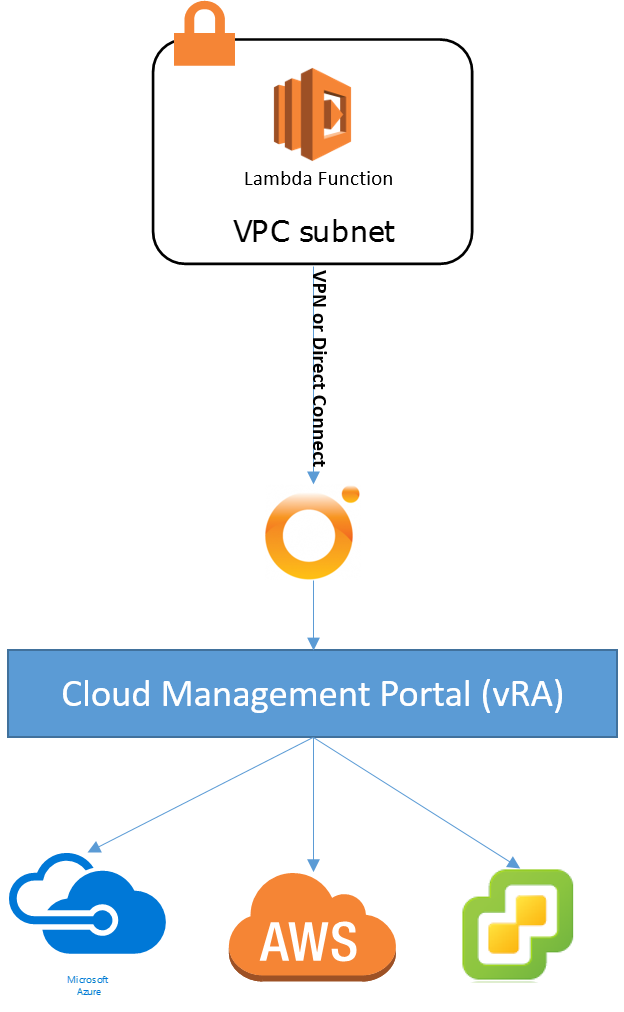

Architecture

To get started, let’s take a look at the architecture involved here. A Lambda function is built in Amazon Web Services within a VPC. By default, Lambda functions can only reach publicly accessible endpoints; placing a function within a VPC lets it access your own private resources. In our case, the Lambda function is created in a VPC that has a VPN connection to my on-premises vRealize Orchestrator instance. The vRO workflow that I’m calling requests a vRealize Automation deployment of a CentOS virtual machine. From there I could deploy to a variety of clouds, or the workflow could be doing something else, like adding an Active Directory user or creating a snapshot of a virtual machine. The world is your oyster.

Build Lambda Function

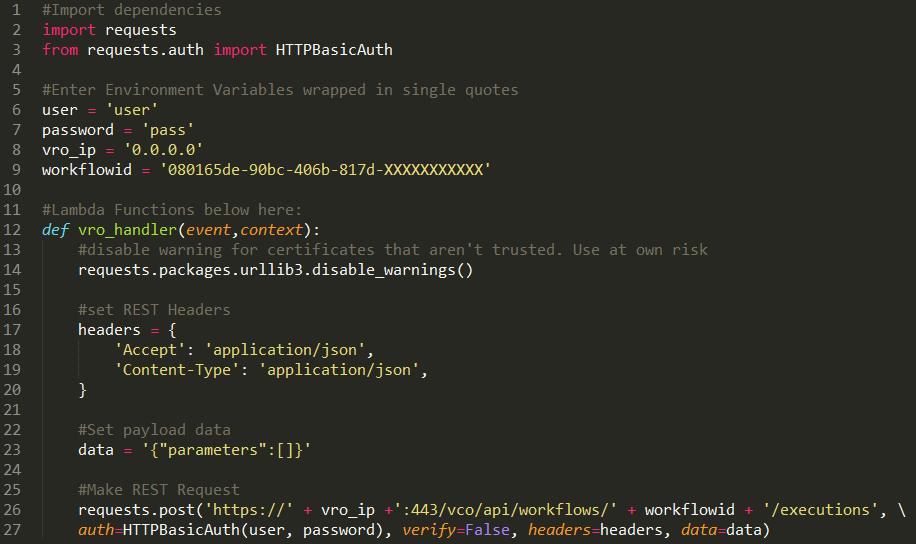

Before you create the function, go to GitHub to download the Lambda function that you’ll update. You’ll find a zip file in the repo that contains a Python script called “vro-Lambda.py”. Open this file to update the variables for your environment.

The code is shown below, and you’ll need to enter your own values to call whatever vRO workflow you’re trying to execute. Notice that you’ll need to update the username and password used to log in to the vRO instance, the vRO IP address (or DNS name, if it resolves from AWS), and the GUID of the workflow that should be executed. Depending on your workflow, you can also update the payload data to provide additional inputs, but the workflow I’m executing doesn’t require any inputs. When you’re done modifying the file, make sure you create a new ZIP file and name it “lambda-vRO.zip”, just as it was before you opened the compressed file.
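For readers who can’t see the screenshot, here is a minimal sketch of what such a handler might look like. It is not the script from the repo: it assumes the vRO REST endpoint `/vco/api/workflows/{id}/executions` on port 8281 with basic authentication, it uses only Python 3’s standard library, and every value at the top (host, user, password, GUID) is a placeholder.

```python
import base64
import json
import ssl
import urllib.request

# Placeholder values (assumptions): replace with your own environment details.
VRO_HOST = "vro.example.local"  # vRO IP address or DNS name reachable from the VPC
VRO_PORT = 8281                 # assumed vRO REST API port
VRO_USER = "vro-user"
VRO_PASSWORD = "change-me"
WORKFLOW_ID = "00000000-0000-0000-0000-000000000000"  # workflow GUID


def build_execution_request(parameters=None):
    """Build the POST request that asks vRO to start one workflow execution."""
    url = "https://{}:{}/vco/api/workflows/{}/executions".format(
        VRO_HOST, VRO_PORT, WORKFLOW_ID)
    # A workflow without inputs takes an empty parameter list.
    body = json.dumps({"parameters": parameters or []}).encode("utf-8")
    credentials = "{}:{}".format(VRO_USER, VRO_PASSWORD).encode("utf-8")
    headers = {
        "Authorization": "Basic " + base64.b64encode(credentials).decode("ascii"),
        "Content-Type": "application/json",
        "Accept": "application/json",
    }
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


def vro_handler(event, context):
    """Lambda entry point: start the workflow and log the HTTP status."""
    # Lab-only assumption: vRO presents a self-signed certificate, so
    # verification is disabled. Use a trusted certificate in production.
    tls = ssl.create_default_context()
    tls.check_hostname = False
    tls.verify_mode = ssl.CERT_NONE
    with urllib.request.urlopen(build_execution_request(), context=tls) as response:
        print("vRO responded with HTTP status", response.status)
    # Nothing is returned, so the Lambda result is null.
```

Keeping the request construction in its own function makes it easy to check the URL and payload locally before anything is uploaded to AWS.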

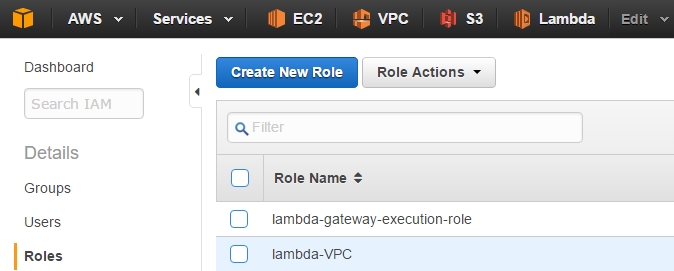

Create a Lambda Role

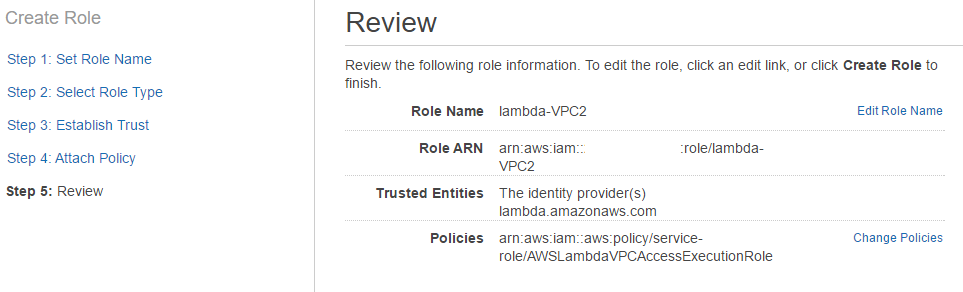

Before we can execute a Lambda function within a VPC, we need to create a role that gives the function enough permissions to run. To do this, go to the IAM section of the AWS console and create a new role.

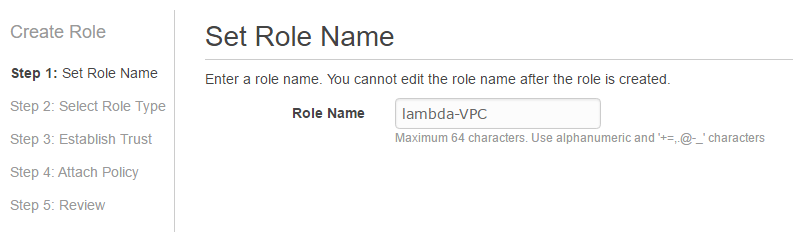

When the create new role wizard opens, give the role a name. I’ve named my role “lambda-VPC”.

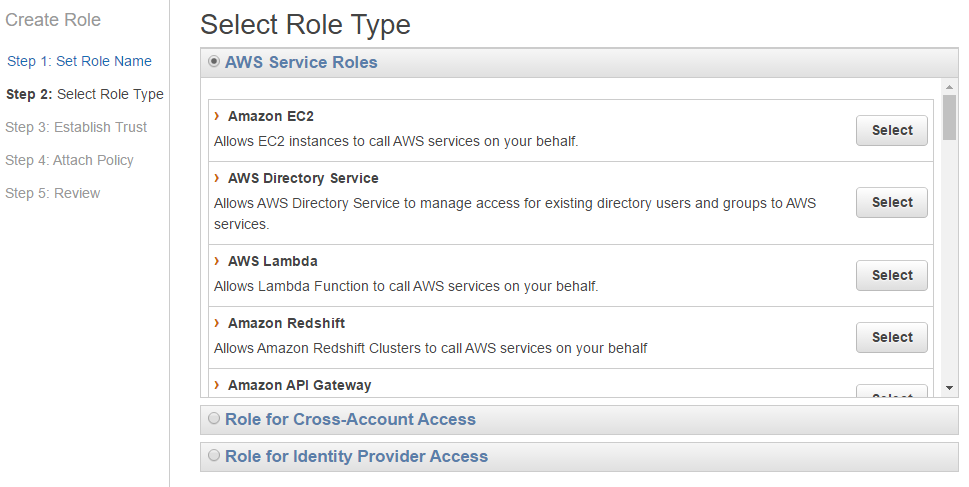

Next, select the role type. Here we’re looking for the “AWS Lambda” role type.

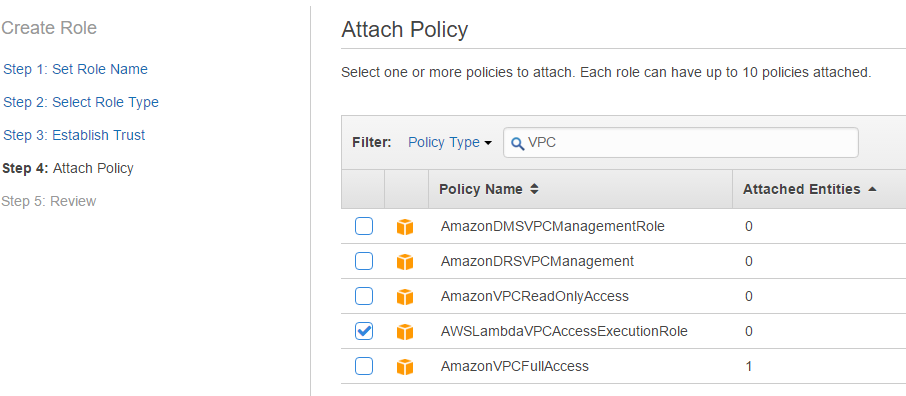

Next, select the policy that has the correct permissions. In this case we’re looking for the “AWSLambdaVPCAccessExecutionRole” policy.

On the Review screen, check it to make sure it looks correct and then create the role.
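If you’d rather script the role than click through the wizard, the same steps can be sketched with boto3. This is only an illustration: it assumes boto3 is installed and your AWS credentials are already configured, and the function name `create_lambda_vpc_role` is made up for this example.

```python
import json

# Trust policy that lets the Lambda service assume the role
# (the scripted equivalent of picking the "AWS Lambda" role type).
TRUST_POLICY = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Principal": {"Service": "lambda.amazonaws.com"},
            "Action": "sts:AssumeRole",
        }
    ],
}

# AWS-managed policy selected in the wizard.
VPC_ACCESS_POLICY_ARN = (
    "arn:aws:iam::aws:policy/service-role/AWSLambdaVPCAccessExecutionRole"
)


def create_lambda_vpc_role(role_name="lambda-VPC"):
    """Create the role, attach the VPC execution policy, and return the role ARN."""
    import boto3  # imported here so the constants above work without boto3

    iam = boto3.client("iam")
    role = iam.create_role(
        RoleName=role_name,
        AssumeRolePolicyDocument=json.dumps(TRUST_POLICY),
    )
    iam.attach_role_policy(RoleName=role_name, PolicyArn=VPC_ACCESS_POLICY_ARN)
    return role["Role"]["Arn"]
```

Calling `create_lambda_vpc_role()` should leave you with the same “lambda-VPC” role the wizard produces.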

Deploy the Lambda Code

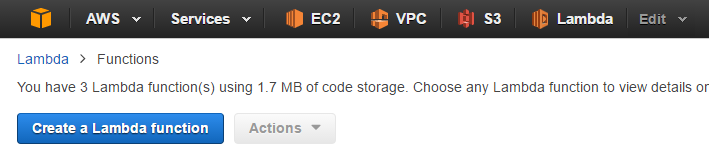

Now we create the Lambda function that will enable us to execute the vRealize Orchestrator workflow. To get started, open the Lambda section of the AWS console and click the “Create a Lambda function” button.

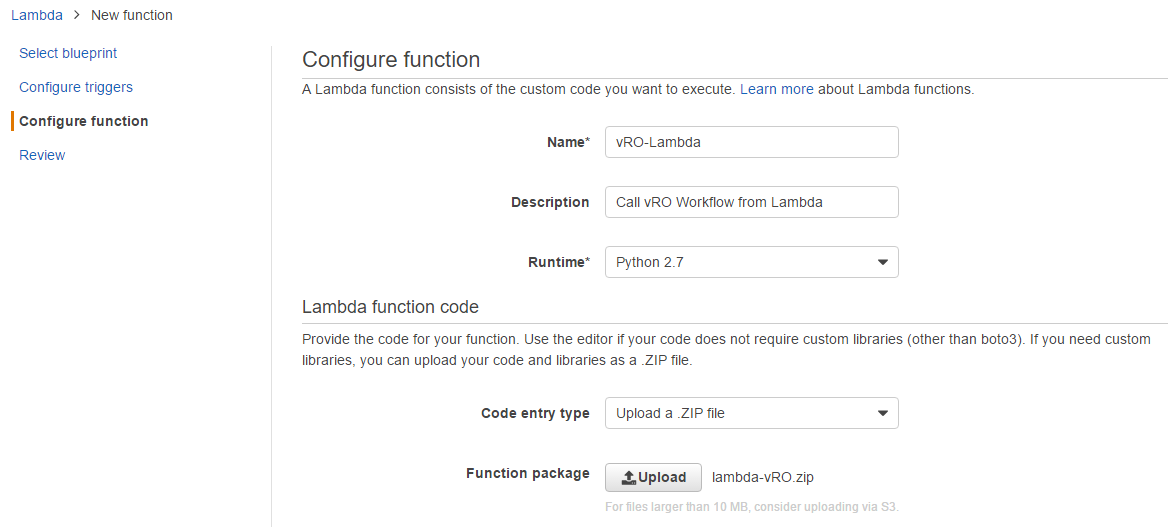

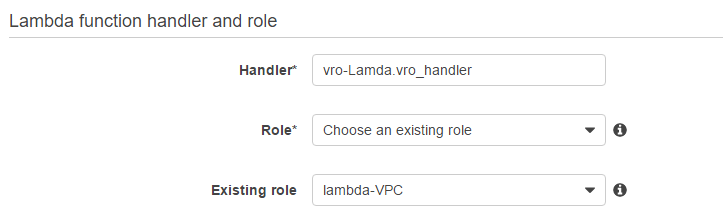

On the next few screens you’ll see blueprints and other things that are able to quickly get you started. We don’t need any of that stuff so you can click next until you get to the “Configure function” section. Here you’ll start the real work. Give the function a name and a description and select “Python 2.7” as the Runtime. Under the Lambda function code section leave the “Code entry type” field as “Upload a .ZIP file”. After this, you’ll need to upload a ZIP file with all of our request information in it and the function call. This is the lambda-vRO.zip file that we created in a previous section of this post.

Further down the page we’ll have to enter the handler information. The handler should be the name of the Python file inside the zip (without the .py extension), followed by the name of the handler function, separated by a period. So for us it should be vro-Lambda.vro_handler. For the “Role” field, leave it at “Choose an existing role” and then select the role we created in the section above as the existing role name.
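To make the packaging and naming concrete, here is a small sketch that zips the script and derives the handler string from the script’s file name. The helper `package_function` is hypothetical and not part of the repo; it assumes the function name inside the script is `vro_handler`.

```python
import os
import zipfile


def package_function(source_path, zip_path, handler_function="vro_handler"):
    """Zip one Python file at the archive root and return its handler string."""
    file_name = os.path.basename(source_path)
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as archive:
        # arcname keeps the file at the top level of the archive,
        # which is where Lambda looks for the module.
        archive.write(source_path, arcname=file_name)
    module_name = os.path.splitext(file_name)[0]
    return "{}.{}".format(module_name, handler_function)
```

Running `package_function("vro-Lambda.py", "lambda-vRO.zip")` from the folder that holds the script writes the archive and returns the value to paste into the handler field.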

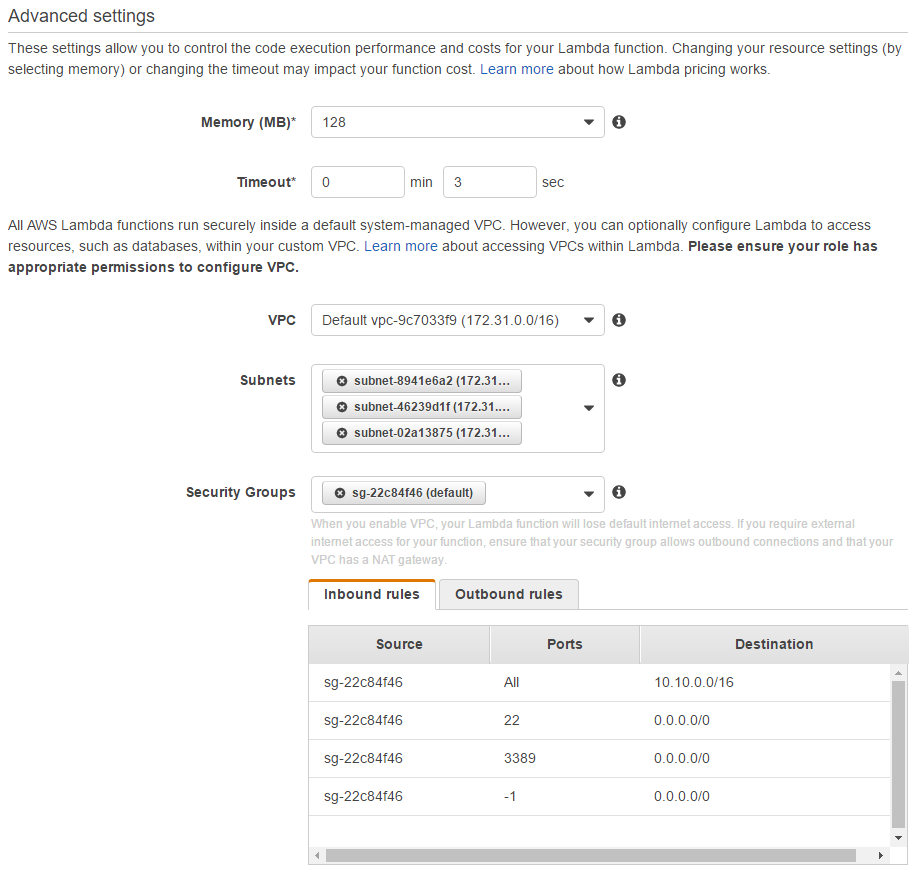

Under the advanced settings section, we can leave the Memory (MB) and Timeout fields at their defaults. In the VPC section, however, we need to select which VPC the Lambda code will run in and the subnets that it’s able to run within. For high availability, select at least two subnets. Under security groups, select a security group with access to your vRealize Orchestrator instance. As you can see in my inbound rules, I’m allowing access to this subnet from my 10.10.0.0/16 network, which is my home lab over the VPN. My outbound rules are just as open as the inbound rules. Your mileage may vary here.
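The same settings can be expressed as the arguments to boto3’s `create_function` call. The sketch below only builds the argument dictionary; the helper name, the subnet and security group IDs you pass in, and the two-subnet check (which mirrors the recommendation above, not an AWS requirement) are all assumptions for illustration.

```python
def build_create_function_args(name, role_arn, zip_bytes, subnet_ids,
                               security_group_ids, runtime="python2.7",
                               handler="vro-Lambda.vro_handler"):
    """Collect the console settings into keyword arguments for create_function."""
    if len(subnet_ids) < 2:
        # Mirrors the high-availability advice in this post.
        raise ValueError("select at least two subnets for high availability")
    return {
        "FunctionName": name,
        "Runtime": runtime,
        "Role": role_arn,
        "Handler": handler,
        "Code": {"ZipFile": zip_bytes},
        # Memory and timeout are omitted so the service defaults apply.
        "VpcConfig": {
            "SubnetIds": list(subnet_ids),
            "SecurityGroupIds": list(security_group_ids),
        },
    }
```

With boto3 installed, you would read lambda-vRO.zip as bytes and pass the result along with `boto3.client("lambda").create_function(**args)`.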

Test Your Code

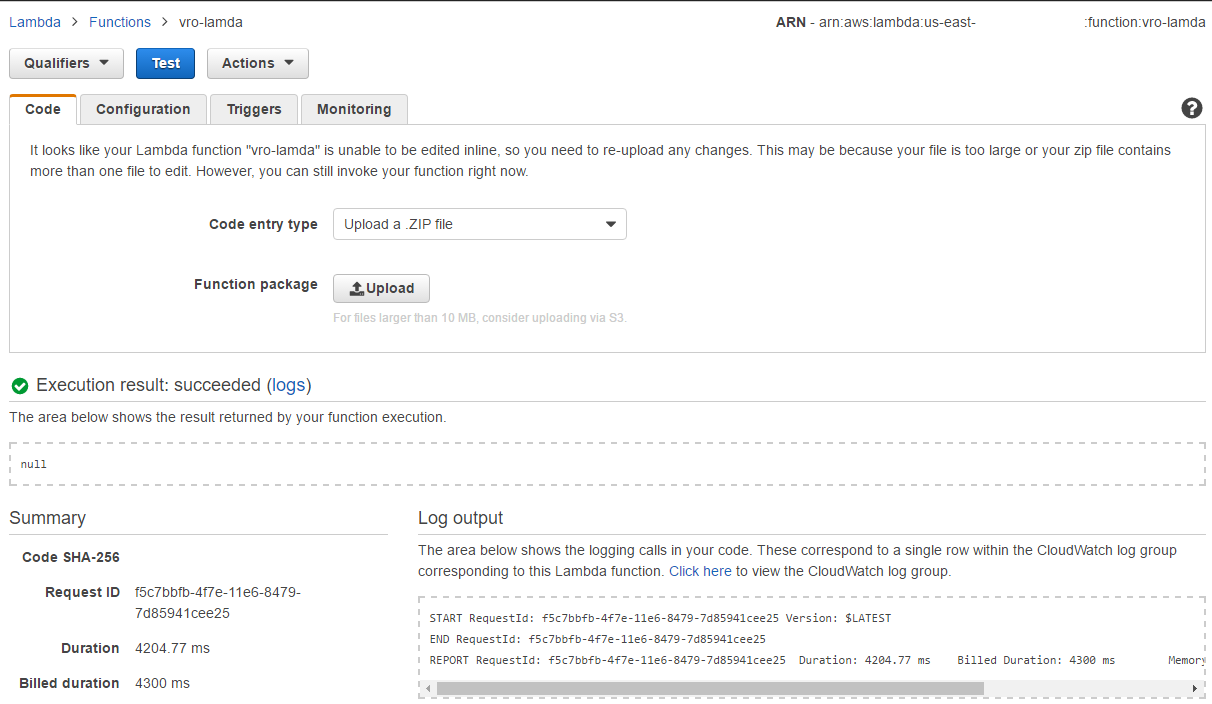

Now in the Input test event editor of the lambda function, I’ve left the values as {} because I’m not providing any inputs.

When you run the test, you’ll see a return value of “null” and some info about the request.
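The “null” is nothing to worry about. A Python handler that doesn’t return a value returns `None`, and that becomes `null` when the result is serialized to JSON. The stand-in handler below (not the real script) shows the same behavior locally:

```python
import json


def vro_handler(event, context):
    """Stand-in handler (assumption): does its work and returns nothing."""
    print("received event:", json.dumps(event))
    # No return statement, so Python returns None.


# Simulate the console test with an empty event and no context object.
result = vro_handler({}, None)
print(json.dumps(result))  # prints: null
```

If you want something more useful in the test output, have the handler return a value such as the HTTP status code from vRO.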

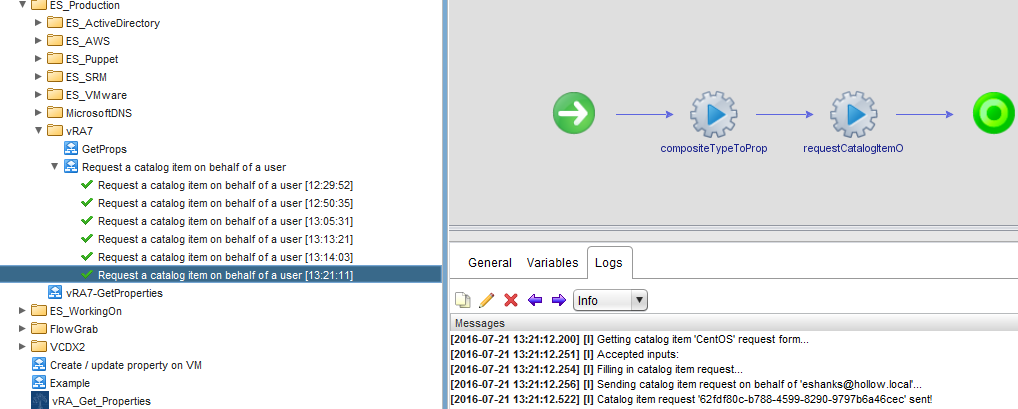

If you look in your vRO instance, you’ll see a token object of the request that has been executed.

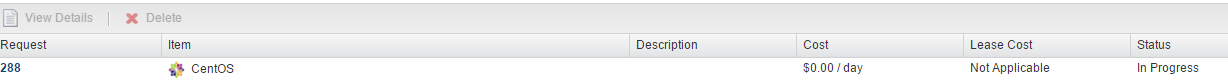

In my vRA instance I can see a CentOS machine being provisioned.

Summary

This post should give you an understanding of how Lambda functions are set up within a VPC. I’ve chosen to write a script to call a vRO workflow, but you could use Lambda for just about anything you’d want a script for. I’d love to hear how you’re using Lambda and whether you’ve got a specific use case for calling your vRO workflows from AWS. Happy coding.