HP Virtual Connect is a great way to handle network setup for an HP blade chassis. When I first started with Virtual Connect, I found it confusing to figure out where everything was and how the blades connected to the interconnect bays. It really is fairly simple, but it can be confusing to anyone who's new to this technology. Hopefully this post will give newcomers the tools they need to get started.

Downlinks

The HP interconnect modules have downlink and uplink ports. The uplink ports are pretty obvious, since they are physical ports that can be cabled to a switch or another device. The downlink ports are less obvious: they are internal connections between the interconnect modules and the blade bays. For example, a c7000 chassis has 16 server bays, so an HP Flex-10 interconnect has 16 downlink ports, one for each blade.

In the picture below of an HP VC Flex-10 Enet Module, there are 8 uplink ports, which are visible, as well as 16 downlink ports which are not visible, for a total of 24 ports.
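To make the port count concrete, here is a minimal sketch that models a Flex-10 module's ports as described above. The `InterconnectModule` class and its method names are hypothetical, and the one-to-one numbering (server bay N uses downlink N) is an assumption for half-height blades, not something taken from an HP tool.

```python
from dataclasses import dataclass

C7000_SERVER_BAYS = 16  # half-height server bays in a c7000 chassis


@dataclass
class InterconnectModule:
    """Hypothetical model of a VC Flex-10 Enet Module's port layout."""
    uplinks: int = 8                     # external ports you can cable to a switch
    downlinks: int = C7000_SERVER_BAYS   # internal ports, one per server bay

    @property
    def total_ports(self) -> int:
        return self.uplinks + self.downlinks

    def downlink_for_server_bay(self, bay: int) -> int:
        # Assumption: one downlink per server bay, numbered to match the bay.
        if not 1 <= bay <= self.downlinks:
            raise ValueError(f"server bay must be 1-{self.downlinks}, got {bay}")
        return bay


flex10 = InterconnectModule()
print(flex10.total_ports)                 # 24
print(flex10.downlink_for_server_bay(5))  # 5
```

The 8 visible uplinks plus the 16 hidden downlinks give the 24 ports mentioned above.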

Blade Mapping

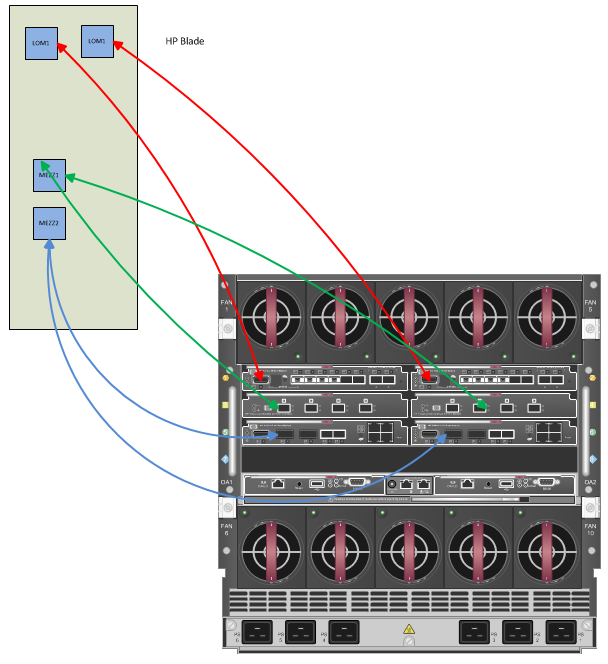

Now that we've seen that each blade connects to the interconnects through the downlink ports, let's take a closer look at which NICs are mapped to which interconnect bay. HP blades have two LAN on Motherboard (LOM) ports as well as room for two mezzanine cards. The mezzanine cards can hold a variety of PCI devices, but in many cases they are populated with either NICs or HBAs.

The LOMs and Mezz Cards map in a specific order to the interconnect bays.

LOM1 - Interconnect Bay 1

LOM2 - Interconnect Bay 2

Mezz1 - Interconnect Bay 3 (and 4 if it’s a dual port card)

Mezz2 - Interconnect Bay 5 (and 6 if it’s a dual port card, 7 and 8 if it’s a quad port card)

The picture below should help to understand how the HP Blades map to the interconnect bays. This example uses dual port mezzanine cards.

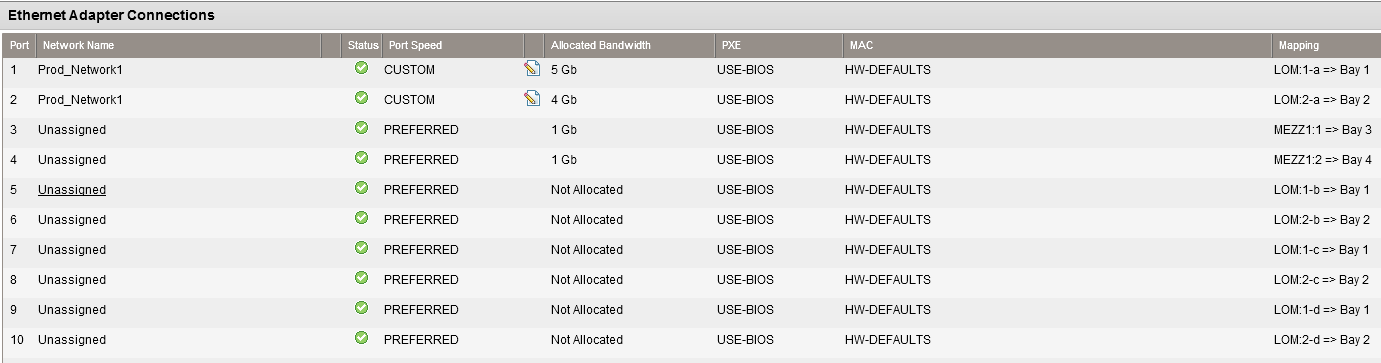
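The mapping list above can be captured as a simple lookup table. This is a minimal sketch that encodes only the mappings listed in this post; `BAY_MAP` and `interconnect_bays` are hypothetical names, and the port limits (2 ports for Mezz1, up to 4 for Mezz2) just mirror the list rather than any HP specification.

```python
# Adapter -> interconnect bays, in port order, as listed above.
BAY_MAP = {
    "LOM1": [1],
    "LOM2": [2],
    "Mezz1": [3, 4],         # bay 4 only for a dual port card
    "Mezz2": [5, 6, 7, 8],   # bay 6 for dual port, bays 7 and 8 for quad port
}


def interconnect_bays(adapter: str, ports: int) -> list[int]:
    """Return the interconnect bays that an adapter's ports connect to."""
    bays = BAY_MAP[adapter]
    if not 1 <= ports <= len(bays):
        raise ValueError(f"{adapter} supports 1-{len(bays)} ports here, got {ports}")
    # Port 1 goes to the first bay, port 2 to the second, and so on.
    return bays[:ports]


# A blade with dual port mezzanine cards, like the one in the picture.
for adapter, ports in [("LOM1", 1), ("LOM2", 1), ("Mezz1", 2), ("Mezz2", 2)]:
    print(adapter, "->", interconnect_bays(adapter, ports))
```

This prints `LOM1 -> [1]`, `LOM2 -> [2]`, `Mezz1 -> [3, 4]` and `Mezz2 -> [5, 6]`, which matches the dual port example.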

LOM Ports with Flex-10

One more thing comes into play if your blades have FlexNIC-capable LOMs and you're using a Flex-10 Ethernet Module or a FlexFabric interconnect module: you can subdivide each LOM port into 4 logical NICs. Your hypervisor or operating system will then see 8 NICs instead of the usual 2. This is especially nice if you're running virtualization, since you now have plenty of network cards for vMotion, Fault Tolerance, production networks, and management networks.

As you can see from the following screenshot, each LOM NIC is separated into 4 logical NICs, labeled 1-a, 1-b … 2-d.

I should also mention that if the interconnect modules are FlexFabric, LOM-1b and LOM-2b can be either an HBA or a NIC, your choice.
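The logical NIC naming is easy to enumerate. This is a minimal sketch, assuming two LOM ports with four functions each (a through d) as described above; the `flexnics` function and its `flexfabric` and `b_as_hba` parameters are hypothetical and only model the rule that the "b" function can be an HBA on FlexFabric modules.

```python
from itertools import product

FUNCTIONS = "abcd"  # four logical NICs per LOM port


def flexnics(lom_ports=(1, 2), flexfabric=False, b_as_hba=False):
    """List (name, type) pairs such as ('1-a', 'NIC') ... ('2-d', 'NIC')."""
    if b_as_hba and not flexfabric:
        raise ValueError("an HBA on the b function requires FlexFabric modules")
    result = []
    # product() walks port 1 a-d first, then port 2 a-d.
    for port, func in product(lom_ports, FUNCTIONS):
        kind = "HBA" if (func == "b" and b_as_hba) else "NIC"
        result.append((f"{port}-{func}", kind))
    return result


nics = flexnics()
print(len(nics))                    # 8
print([name for name, _ in nics])   # ['1-a', '1-b', '1-c', '1-d', '2-a', '2-b', '2-c', '2-d']

ff = flexfabric_nics = flexnics(flexfabric=True, b_as_hba=True)
print([name for name, kind in ff if kind == "HBA"])  # ['1-b', '2-b']
```

With the defaults you get the 8 NICs the hypervisor sees; with FlexFabric and the HBA option, 1-b and 2-b become HBAs and the other 6 stay NICs.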

I know these concepts seem fairly straightforward now, but for a beginner this is very useful information for getting started with HP Virtual Connect. I hope to write more blog posts in the future about configuring networking with Virtual Connect.